The eighteen-month cliff

Your AI program launched a year ago. It works. Customers use it. Engineering ships features on the same platform.

And yet, last week, a senior engineer told you they'd rather move off the AI team. The reason wasn't the work. The reason was the on-call. The deploys take too long. The cost dashboard refreshes weekly. The eval lives in someone's notebook. Every model swap takes a meeting. The platform is shipping value, but operating it has become more painful than building it.

This is what month eighteen looks like for programs that didn't invest in LLM ops up front. The model works. The system around the model has accumulated debt nobody scheduled to repay.

If you're at month six or month nine, the cliff is visible from where you're standing. The decision is whether to build the operating model now, while you still have margin, or pay the debt later, when senior engineers are leaving and the CFO is asking why velocity has dropped.

Real Estate Marketing Attribution

A single attribution mistake led to a 22% pipeline drop. Here’s how real estate teams fix it with full-funnel visibility.

The CTO question most LLM ops content gets wrong

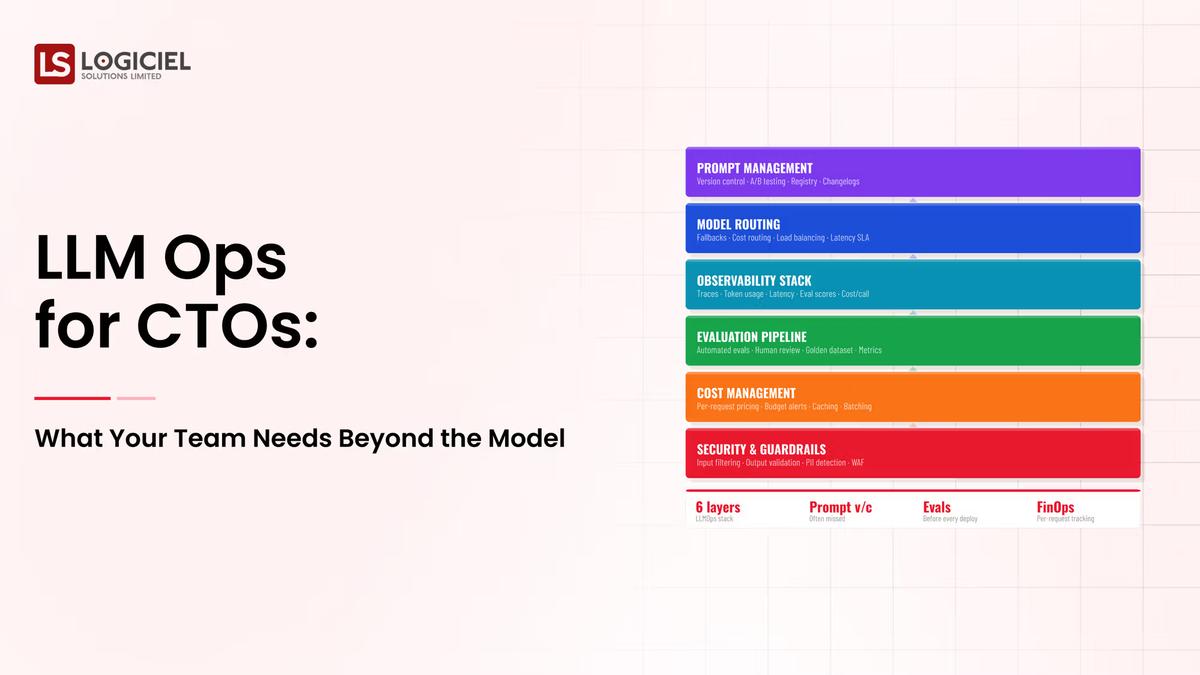

Most content on LLM ops describes tools. Eval platforms. Observability stacks. Cost dashboards. Vector stores. Useful, but the wrong abstraction for the CTO conversation.

The CTO question is not "what tools do we need." It's "what discipline does my team need to operate this for two years without burning out, without losing the senior engineers, without surprising the CFO, and without failing the next regulator visit." The tools support the discipline. The discipline is the actual deliverable.

Five operational practices distinguish programs that survive to year two from programs that hit the cliff at month eighteen. None of them are exotic. All of them require somebody to make them somebody's job before the launch, not after.

The five practices that hold the program up

Practice 1: Eval as production code, not as a notebook

The single most predictive practice. Teams whose eval harness lives in CI, runs at minimum daily, and blocks promotion on quality regression don't have the "is it getting worse" debate every week. They have a number on a dashboard.

The bar to hit: eval cases curated continuously from production failures, scoring methods tested against human review, automated regression detection wired to deploy gates. Build that and your model swap question goes from heroic to routine. Skip it and every change becomes a debate.

Practice 2: Observability that catches the silent failures

The reliability layer most enterprise AI programs are missing. Standard SRE tooling catches the system failing. AI-specific observability catches the model behaving differently than yesterday without anything traditional alerting.

Industry surveys put 67% of enterprises at least once a year running misaligned AI for over a month before noticing the issue. That number drops to single digits in programs running input distribution monitoring, output anomaly detection, and continuous quality scoring. The discipline difference is the difference between catching drift in the dashboard and catching it in customer complaints.

Practice 3: A cost dashboard refreshed daily, owned by a named person

The CFO conversation that's coming, the one we discussed in the cost optimization piece, depends on this practice. Teams that have a daily cost dashboard, broken out by feature and tenant, with a named owner whose job it is to argue against drift, defend their budgets. Teams without it explain budget variances after the fact.

The dashboard is not a finance project. It's an engineering project, owned by engineering, used in weekly engineering reviews. Building it is a 1-2 week effort using gateway logs your team already produces. The reason most programs don't have one isn't capability. It's that nobody made it a priority.

Practice 4: Rollback automation that runs without a meeting

Mature programs swap models, prompts, and pipelines on a Friday afternoon. The promotion goes through CI. The eval gates it. If regression hits, the rollback is automated. Total elapsed time from "let's revert" to "reverted" is under fifteen minutes.

Immature programs schedule meetings to discuss the rollback. Three engineers, two managers, and a security partner debate the risk. The reversal takes a sprint. By the time it ships, the metric has moved.

The practical implication: programs without rollback automation are programs that don't experiment because experimentation is too risky. Innovation velocity drops. The team gets conservative. By month eighteen, the program is shipping more cautiously than the engineering team would want.

Practice 5: An on-call rotation tuned for LLM failure modes

Generic SRE on-call rotations are not enough. LLM failures look different. Hallucinations, drift, prompt injection, partial outputs, cost spikes. The on-call engineer needs runbooks for each of these, recent practice on each of them, and an escalation path that includes someone who actually understands the model layer.

The pattern in struggling programs: on-call gets staffed by whoever is on the broader engineering rotation. The engineer paged at 3am doesn't know what the eval harness shows, can't find the cost spike attribution, and has no rollback authority. The incident gets handed off, the senior engineer wakes up, and by morning everyone is exhausted. The team that does this twice asks why they're on the AI team.

The fix is dedicated runbooks, paired on-call (one platform engineer plus one ML-aware engineer), and quarterly tabletop exercises. The cost is real. The cost of not doing it is the senior engineers leaving.

Ask yourself: Of the five practices, how many does your team run today as a daily discipline? If the number is less than four, you've already started accumulating the debt that will surface at month eighteen.

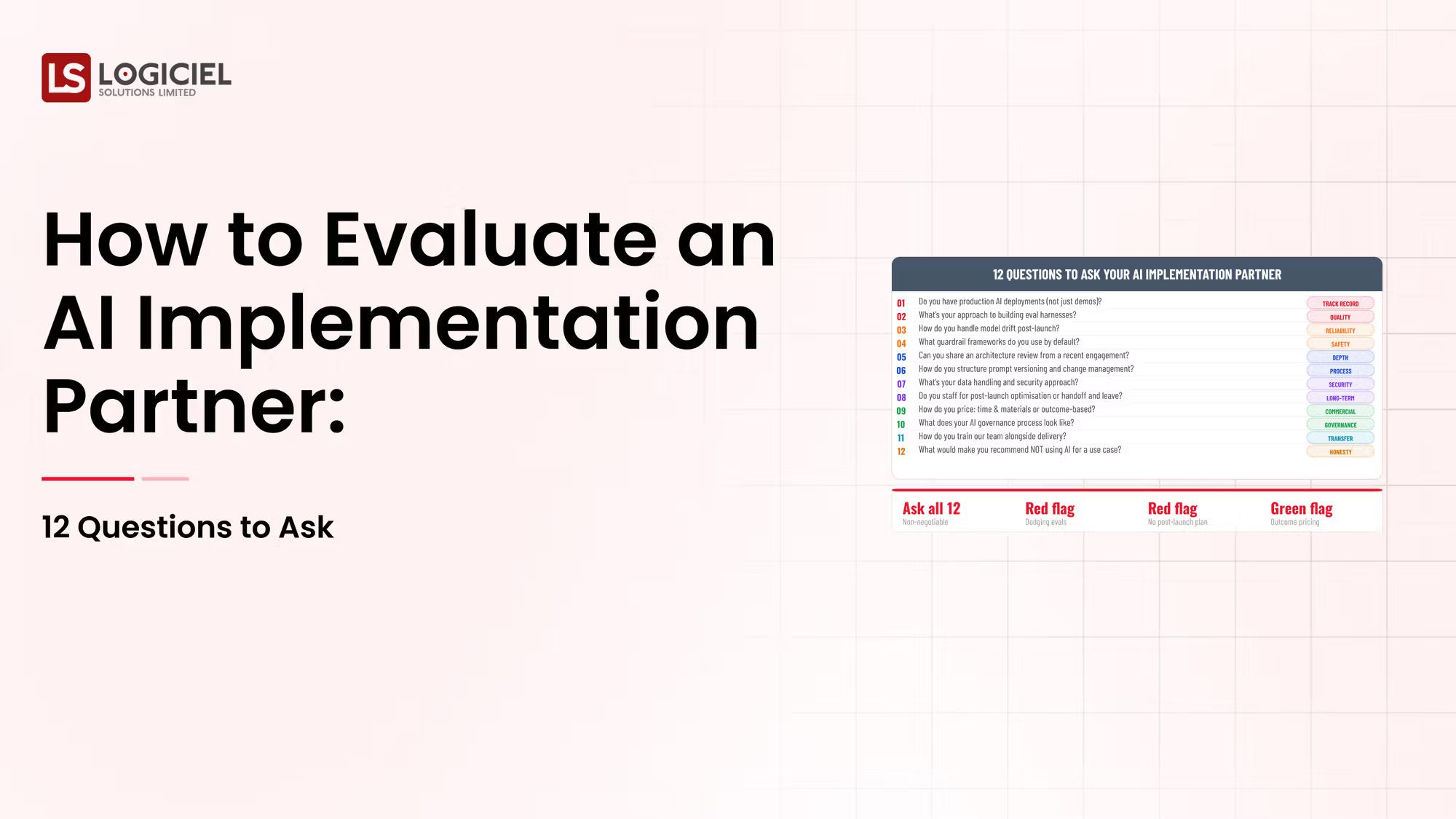

What the CFO and CISO are about to ask

Three questions are coming from outside engineering in 2026. Get ahead of them.

The CFO will ask: what did AI cost yesterday, broken out by feature and team, and what does the trend look like? Answer: a screen, refreshed daily, with named ownership. If the answer is "let me get back to you," the CFO's confidence in the program drops.

The CISO will ask: what's our exposure if a vendor outage takes down our LLM provider for a week? Answer: a documented fallback path, tested, with named alternatives. If the answer is "we'd manage it," that's a follow-up email from the audit committee.

The board will ask: what's the audit trail for the last AI-mediated customer decision? Answer: a queryable record produced in 15 minutes. If the answer involves a forensic reconstruction from logs, the EU AI Act's 1%-of-turnover penalty for misleading information to regulators is the risk you're now carrying without realizing it.

Each of these three questions is downstream of the five practices. Build the practices, you have the answers. Skip them and you'll be improvising the answers under pressure.

The investment that pays back the fastest

Of the five practices, the highest-leverage starting investment is eval as production code. Everything else compounds on it.

Without continuous eval, you can't measure whether your changes improve quality, so you can't ship with confidence, so model swaps become risky, so the program loses optionality. With it, every other practice has a quality signal to anchor to.

Most enterprise programs can stand up CI-integrated eval in 2-4 weeks of focused work for two senior engineers. That investment pays back inside the first quarter through better deploy velocity, fewer rollbacks, and a quality conversation grounded in numbers instead of opinions.

The other four practices follow naturally: observability extends the eval signal, the cost dashboard is plumbing on top of gateway logs you already have, rollback is automation around the deploy that eval now gates, and on-call runbooks document what the dashboard already shows you.

Build eval first. The rest is sequencing.

How Logiciel fits this conversation

Most CTOs who reach out to us about LLM ops have a working AI feature in production and are starting to feel the eighteen-month cliff coming. They've shipped, the system works, and the operational debt is becoming visible in deploy times, in on-call burden, in the CFO's questions.

The work we do is the operating model. The eval harness in CI. The observability stack tuned for LLMs. The cost dashboard with named ownership. The rollback automation. The on-call runbooks and tabletop exercises. We build it alongside your team, so the engineers operating the platform at month twenty-four are your engineers, with the muscle memory we've trained together.

The economic case usually lands cleanly. Most engagements pay back through retained senior engineers (the cliff prevented), recovered deploy velocity, and the avoided CFO/CISO/board surprises. The first program is the expensive one. Programs two through five run on the operating model we built together.

Data Infrastructure ROI Calculator

Use this ROI calculator to measure maintenance cost, inefficiencies, and hidden losses in your data stack.

Call to Action

Two specific moves before month eighteen

If you're at month nine and the senior engineers are still happy, you have a six-month window to fix this before the cliff is visible from leadership.

Use the AI Velocity Blueprint to score your program against the five practices. It takes about an hour with your engineering lead. The report tells you which practice is highest-leverage to build first.

Download the AI Velocity Blueprint →

Or skip the worksheet and book a 30-minute working session with a senior Logiciel engineer. Bring your engineering lead. We'll walk through your program against the five practices and the three questions the CFO/CISO/board are about to ask. No deck.

Book the 30-minute LLM ops session →

Frequently Asked Questions

We don't have an AI program in production yet. Is this premature?

It's the right time. Programs that build the operating model alongside the first deployment avoid the eighteen-month cliff entirely. Programs that retrofit it hit the cliff and pay double. The five practices cost less when designed in than when added later.

How does LLM ops differ from traditional MLOps?

Different failure modes, different cost shape, different governance requirements. MLOps was built around trained models with predictable retraining cycles. LLM ops is built around hosted and prompted models with vendor dependencies, dynamic cost, and audit traceability requirements. The shapes are different enough that the tooling and operating model are different.

We use vendor LLM APIs. Doesn't the vendor handle most of this?

The vendor handles their side of the line. The line is at the model. Everything past it (eval, observability, cost attribution, rollback, on-call) is yours. Vendor-hosted doesn't mean vendor-operated on your behalf. The five practices live on your side regardless of where the model runs.

What does this cost?

For a mid-sized engineering team, building the five practices is 8-16 weeks of focused work for 2-3 engineers, or roughly half that with a partner who has done it before. Ongoing operation is 4-8 hours per week across the team to run the cadence. The avoided cost (retained engineers, faster deploys, defensible CFO/CISO conversations) typically pays back within two quarters.

Which practice should we build first?

Eval as production code. Every other practice compounds on it. Without continuous eval, you can't ship with confidence; with it, every change becomes measurable. Build the eval harness first. The other four follow.