The slide deck arrives at week twelve

It's a beautiful deck. Eighty-six pages, custom illustrations, three appendices. The recommendations are well-reasoned. It will look good in any board meeting you take it into.

What it won't do is ship a feature your customers can use.

That's the moment most CTOs realize they hired the wrong kind of AI implementation partner. The partner wasn't bad, exactly. They just sold a category of work the CTO thought was implementation, and it turned out to be advice.

This is the field guide I wish more CTOs read before signing.

The numbers are not on your side

BCG's 2024 research found that 74% of companies investing in AI failed to generate tangible value. HFS Research's enterprise survey says 65% of buyers find traditional consulting models no longer worth what they cost. Industry data has 68% of AI projects exceeding their initial budget, with an average overrun of 42% and scope creep named as the primary cause more than half the time.

That last one's worth sitting with. The data isn't saying AI is failing. It's saying enterprises are paying for AI implementation and receiving something else.

In 2025, this got loud enough that the consulting industry started restructuring itself. McKinsey laid off 200 technology and support staff after automating non-client-facing work with AI. Accenture cut roughly 11,000 roles. The category of partner that built its business on advising clients on AI is now being disrupted by the AI it used to advise on.

There's something darkly funny in there. If your AI partner can't stop their own internal operations from being replaced by the thing they're selling you, what is the implementation actually buying you?

The reframe most procurement processes miss

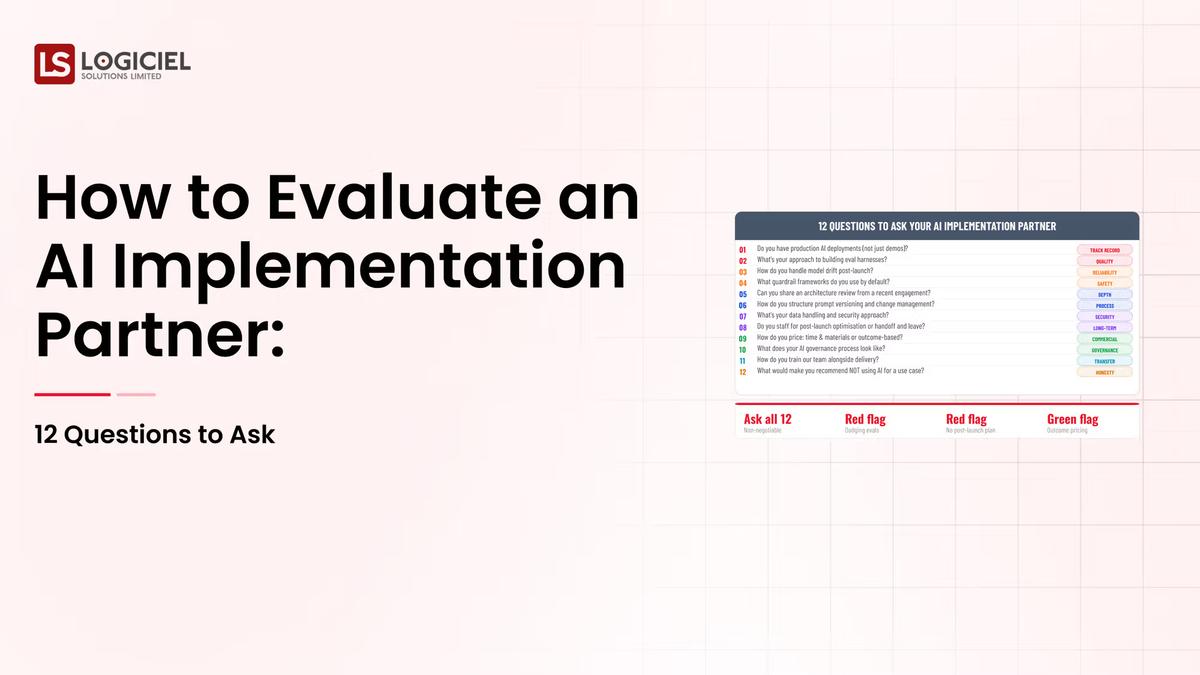

The classic AI partner evaluation has twelve questions. Capability, method, governance, references, cost, exit, knowledge transfer, security, roadmap, team, contract terms, escalation. All valid. None of them is the question that decides whether your program ships.

The question that matters: at day 90 of the engagement, how much production code exists that wouldn't have existed without them?

That's it. Everything else is supporting evidence.

If the honest answer is "we'll be in design phase through month four, then we move to build," you hired a consultancy. Different category, different economics, different outcome. Sometimes that's the right thing. Often it's not what you signed up for.

If the answer is "the gateway service is in CI by week three, the eval harness ships in week six, the first agent endpoint goes to a controlled production population in week nine," you hired an implementation partner. They cost less than the consultancy in total. They produce code that runs on a Tuesday.

The difference between those two categories is what I'm calling the consultancy tax. The premium you pay for slides instead of deploys. Most enterprise AI budgets pay it twice: once in fees, once in the program never shipping.

Seven questions that surface the category

These replace the standard twelve. Each is designed to make a salesperson uncomfortable, which is the only reliable signal you've found a real one.

1. Show me the last three production systems you shipped in environments like ours. Walk me through the architecture.

Not case studies. Not glossy reference decks. The actual architecture, the actual repo structure, the actual on-call rotation that runs them today.

Real implementation partners can do this in fifteen minutes. They'll pull up a whiteboard, draw the gateway, the eval harness, the observability stack, name the engineer who shipped the rollback automation. Consultancies will offer to "share a similar engagement" and send you a PDF later.

The PDF is the answer.

2. What's the last customer-impacting incident your team handled? What shipped to fix it? How long did it take?

A partner that's actually operating production systems has incidents. They have post-incident reviews. They can describe the specific failure mode, the specific fix, and the architectural change that came out of it.

A partner that's been doing strategy work for the same customer for eighteen months has none of this. The honest answer there is "we don't handle incidents, the client's team does that." Which tells you who's actually running the system.

3. At day 90 of a comparable engagement, how many engineers from your team were committing code to the client's repo? How many were running workshops?

The ratio is the diagnostic. A strong implementation partner has 80% of their team in the repo and 20% in workshops at day 90. A consultancy pretending to implement is the inverse. The contract will not show you this ratio. The question will.
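The ratio can be made concrete with a few lines of arithmetic. This is a minimal sketch, not a standard metric: the function names are hypothetical, and the 0.8 and 0.2 cut-offs are assumptions lifted from the 80/20 rule of thumb above.

```python
def repo_share(engineers_in_repo: int, engineers_in_workshops: int) -> float:
    """Fraction of the partner's day-90 team that is committing code."""
    total = engineers_in_repo + engineers_in_workshops
    if total == 0:
        raise ValueError("team size must be greater than zero")
    return engineers_in_repo / total


def classify_partner(share: float, high: float = 0.8, low: float = 0.2) -> str:
    """Label a repo share. The cut-offs are illustrative assumptions."""
    if share >= high:
        return "implementation"
    if share <= low:
        return "consultancy"
    return "mixed"


# Hypothetical answer to question 3: eight engineers in the repo, two in workshops.
print(classify_partner(repo_share(8, 2)))
```

Ask for the two headcounts separately rather than for the percentage. A partner can round a percentage; it is harder to round a list of names.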

4. What does your average engagement cost in change orders versus the signed statement of work?

Industry data has 68% of AI projects exceeding budget with average overruns of 42%. The right partner has a number, and it's under 10%. The wrong partner says "every engagement is different" or "we work on a time-and-materials basis."

T&M with no change-order discipline is the financial structure of a partner that has no incentive to ship on time. Worth knowing.
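To compare a partner's answer with the figures above, express change orders as a percentage of the signed statement of work. The sketch below assumes both amounts are in the same currency; the function names and the sample amounts are hypothetical, and the 10% ceiling is the threshold suggested above.

```python
def overrun_pct(signed_sow: float, change_orders: float) -> float:
    """Change orders as a percentage of the signed statement of work."""
    if signed_sow <= 0:
        raise ValueError("signed SOW value must be positive")
    return 100 * change_orders / signed_sow


def within_discipline(signed_sow: float, change_orders: float,
                      max_pct: float = 10.0) -> bool:
    """True when the overrun stays under the ceiling (assumed: under 10%)."""
    return overrun_pct(signed_sow, change_orders) < max_pct


# Hypothetical engagement: 1,000,000 signed, 420,000 added through change orders.
print(overrun_pct(1_000_000, 420_000))        # matches the 42% industry average
print(within_discipline(1_000_000, 80_000))   # an 8% overrun stays under the ceiling
```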

5. If we ended the engagement at month nine, what would we own that we don't own today?

Ask this exactly. Then watch the pause.

A strong partner will list specific assets: the gateway service code, the eval harness, the runbook documentation, the IaC modules, the named engineers on your team who can operate it after they leave. They've thought about this because their model assumes you will eventually outgrow them.

A weak partner lists "frameworks," "playbooks," "best practices." None of those are operable assets. They're deliverables. You bought consulting.

6. Who from your team would I be on Slack with at 2am during a launch week incident?

Not "we have 24/7 support." Not "we have an escalation process." A name. A specific senior engineer who has shipped this kind of system before and who will be the one in the war room.

If the partner won't give you a name during the sales process, you won't get one when the system breaks. The partner who introduces you to the engineer before you sign is the one treating the engagement as engineering.

7. What would make you fire us as a client?

This is the most diagnostic question in the seven. Strong partners have an answer because they've fired clients. Unclear scope. Sponsor turnover. Demands for unrealistic timelines. Refusal to invest in operating model after the initial build. Those are the conditions under which a good partner walks away.

A partner who can't fire a client doesn't have standards. A partner without standards optimizes for billable hours.

Ask yourself: of the three partners on your current shortlist, how many would actually fire you for the right reasons? Probably one. That's the one.

What this looks like when it goes wrong

I've watched this play out more than once. Every engagement is messier than the description, but the pattern is consistent enough to be worth naming.

CTO A signs with a top-three consultancy. Kickoff is a four-week discovery, output is a 90-page report. Build phase starts at month four with a different team than the one that did the discovery. (That handoff alone is where about a third of these go sideways, in my experience.) At month nine, the gateway exists but the eval harness is in a Jupyter notebook and the on-call rotation is still being negotiated. At month eighteen, the consultancy hands over a "platform" that runs but no one on the client's team can confidently swap a model in it. The contract renews. Nobody wants to start over.

CTO B signs with an engineering partner. Discovery is one week. Code starts shipping in week two. The eval harness lives in CI by week six. A controlled production rollout happens at week nine. The on-call rotation is shared between the partner's senior engineer and the client's lead by week twelve. At month nine, the client's team is operating the system. The partner is starting the second use case on the platform they built together. By month eighteen, the client has shipped four AI programs on the original platform.

I'm not going to pretend these stories represent neat percentages of the market. They don't. The point is that the divergence is real, and it's traceable to one decision the CTO made before signing: which kind of partner they bought, whether or not the contract labeled it correctly.

What you actually buy when you buy implementation

You buy a working system in production. Not a design for one. The system runs at the end of the engagement, has been operated for at least six weeks by your team, and has at least one full incident-and-recovery cycle in its history.

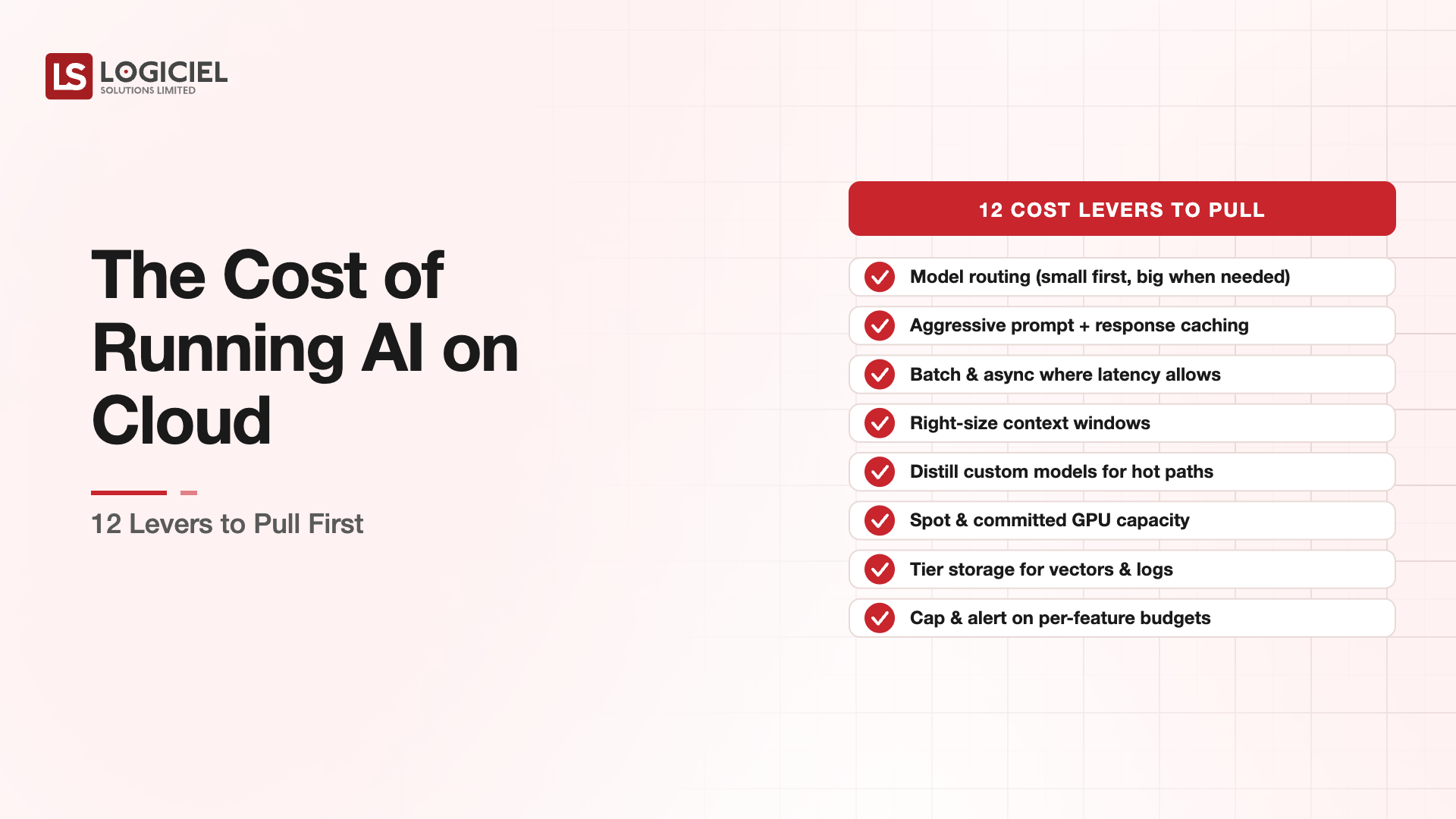

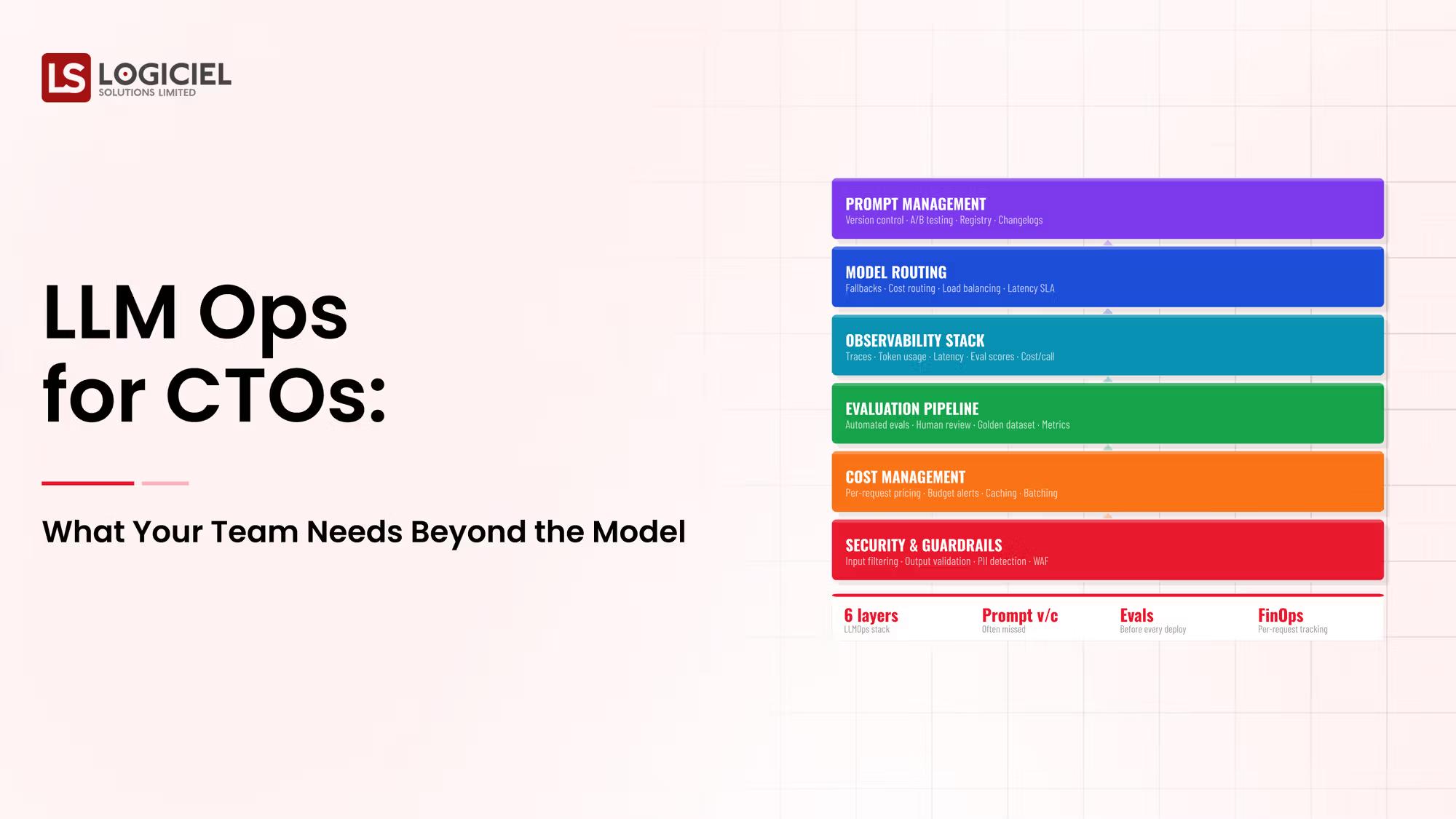

You buy a platform layer that supports the next four programs. Gateway, eval harness, observability stack, audit trail. Not as documentation. As code your team owns and can extend without calling anyone.

And you buy operational muscle memory. Your team has shipped, debugged, rolled back, and operated AI in production. They can do it again without supervision. The partner becomes optional, not load-bearing.

If your draft contract doesn't itemize these with acceptance criteria, you're paying for the third one and getting the first one's slide deck. Renegotiate before signing.
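One way to itemize acceptance criteria is to write them as checks that either pass or fail. The sketch below is an assumption about how that could look, not contract language: the class, field names, and thresholds are hypothetical, taken from the six-week operating period, the incident-and-recovery cycle, and the four platform components described above. Operational muscle memory is only approximated here by weeks operated and incidents handled.

```python
from dataclasses import dataclass, field

# Thresholds and component names are assumptions drawn from the text above.
REQUIRED_COMPONENTS = frozenset(
    {"gateway", "eval harness", "observability stack", "audit trail"}
)
MIN_WEEKS_OPERATED = 6
MIN_INCIDENT_CYCLES = 1


@dataclass
class EngagementState:
    running_in_production: bool
    weeks_operated_by_client: int
    incident_recovery_cycles: int
    components_owned_as_code: set = field(default_factory=set)


def unmet_criteria(state: EngagementState) -> list:
    """Return the acceptance criteria the engagement has not met yet."""
    gaps = []
    if not state.running_in_production:
        gaps.append("system is not running in production")
    if state.weeks_operated_by_client < MIN_WEEKS_OPERATED:
        gaps.append(
            f"client team has operated the system for fewer than {MIN_WEEKS_OPERATED} weeks"
        )
    if state.incident_recovery_cycles < MIN_INCIDENT_CYCLES:
        gaps.append("no full incident-and-recovery cycle on record")
    for name in sorted(REQUIRED_COMPONENTS - state.components_owned_as_code):
        gaps.append(f"platform component not owned as code: {name}")
    return gaps


# Hypothetical month-nine snapshot: only the gateway has been handed over.
snapshot = EngagementState(True, 2, 0, {"gateway"})
for gap in unmet_criteria(snapshot):
    print(gap)
```

An empty list means every itemized criterion is met. Anything else is a list you can attach to the renegotiation.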

How Logiciel fits this conversation

Most engineering leads who reach out to us have just finished a discovery phase with a different partner and are wondering why no code has shipped yet. The common thread isn't that the previous partner was bad. It's that the previous partner was a consultancy, and the CTO assumed implementation.

We don't sell strategy. We don't sell discovery phases. We ship the engineering: the gateway, the eval harness, the observability layer, the rollback automation, and the operating runbooks. We build it alongside your team, in a way that leaves them able to operate it after we're done. The first program is the expensive one. Programs two through five ride on what we put down.

If you've read this far and three names came to mind on your shortlist, the seven questions above will probably eliminate two of them. We may or may not be the right one for the third. The conversation worth having is which kind of partner you actually need.

A 30-minute conversation, not another 30-page evaluation

Use Logiciel's Evaluation Differentiator Framework to score your shortlist against the seven questions. It's a one-page worksheet, takes about half an hour with your engineering lead, and produces something defensible you can take into procurement.

Download the Evaluation Differentiator Framework →

Or skip the worksheet and book a 30-minute working session with a senior Logiciel engineer. Bring your engineering lead. We'll go through your three candidates against the seven-question test in real time. No deck.

Book the 30-minute partner review →

Frequently Asked Questions

We've already signed with a partner. Is this still useful?

Arguably more useful. Run the seven questions against your current partner at the next quarterly review. The pattern of their answers tells you whether you're getting implementation or consulting. If it's consulting, you have nine to twelve months to renegotiate scope before the contract becomes the program.

How do these seven questions map to standard procurement frameworks?

They don't. Procurement evaluates contract terms, security posture, financial stability, commercial flexibility. Necessary, not sufficient. The seven questions evaluate whether the partner can ship. Use both. Engineering owns the seven, procurement owns the rest.

Aren't some of these questions adversarial?

They're diagnostic. A partner who can answer them confidently is the one you want. A partner who gets defensive is the one whose engagement will surprise you in eighteen months. The conversation is supposed to be sharp. If your shortlist can't handle sharp questions in a sales process, what happens when something breaks in production?

We're a smaller company. Most of this is written for Fortune 500 buyers.

The questions scale down. A smaller company hiring its first AI implementation partner has more to lose from picking wrong, not less. The seven take about an hour to run. The cost of skipping them is paid in fees and missed launch dates either way.

What if our partner is a hybrid that does strategy and implementation?

Most claim this. Few do it well. Run the seven specifically against their implementation track record, not their strategy work. If they can't separate the two in their own answers, the engagement won't either. You'll get strategy hours billed at implementation rates.

Sources cited

- BCG: 74% of AI investments fail to produce tangible value
- HFS Research: 65% buyer dissatisfaction
- 68% of AI projects exceed budget, 42% average overrun
- McKinsey 200-person AI layoff and consulting model disruption
- Gartner: 30% of GenAI projects abandoned after PoC