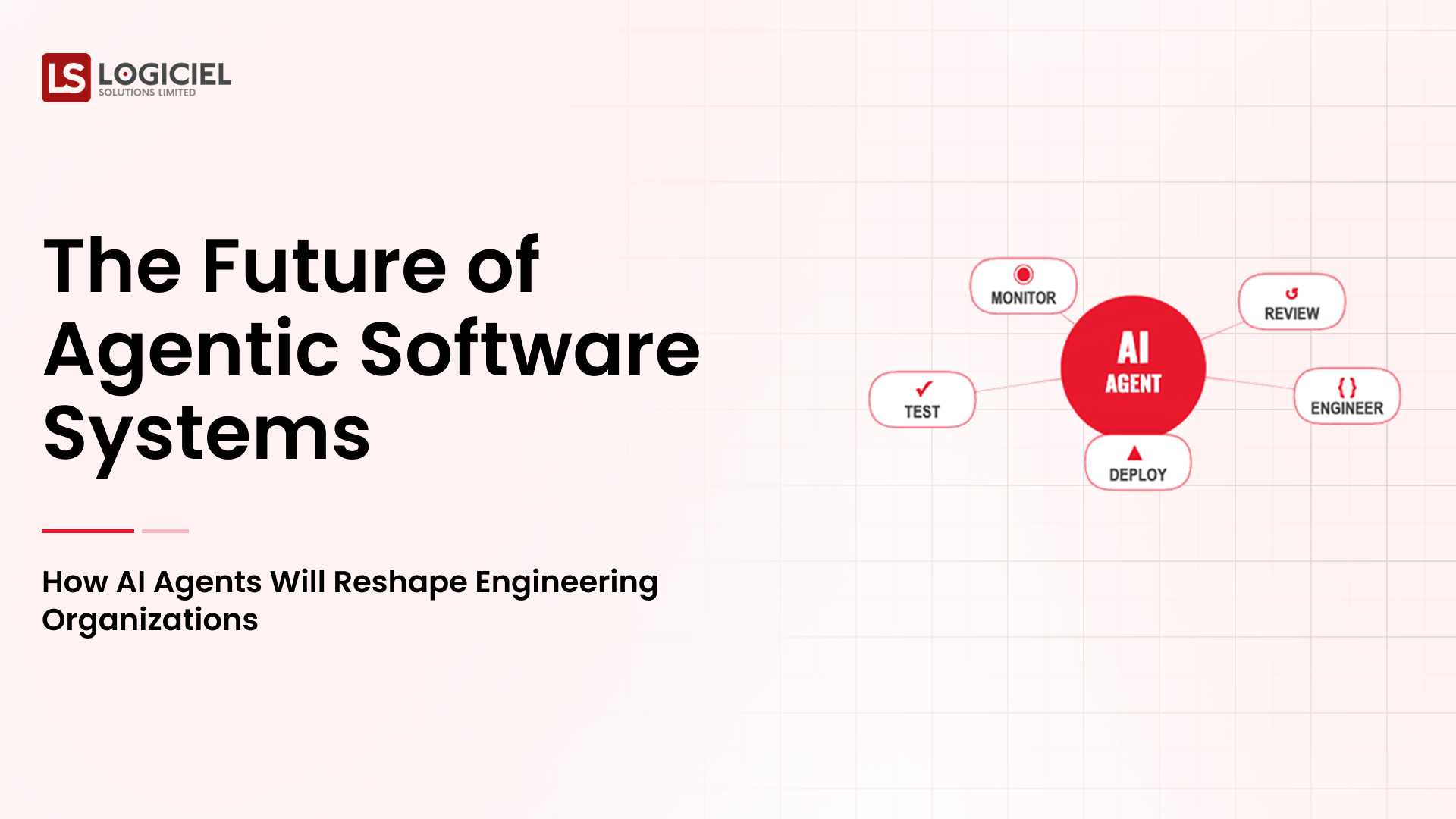

Artificial intelligence agents are becoming an increasingly important part of modern software systems. From debugging assistants and infrastructure monitoring tools to autonomous workflow automation, agents are beginning to reshape how engineering organizations build and operate software.

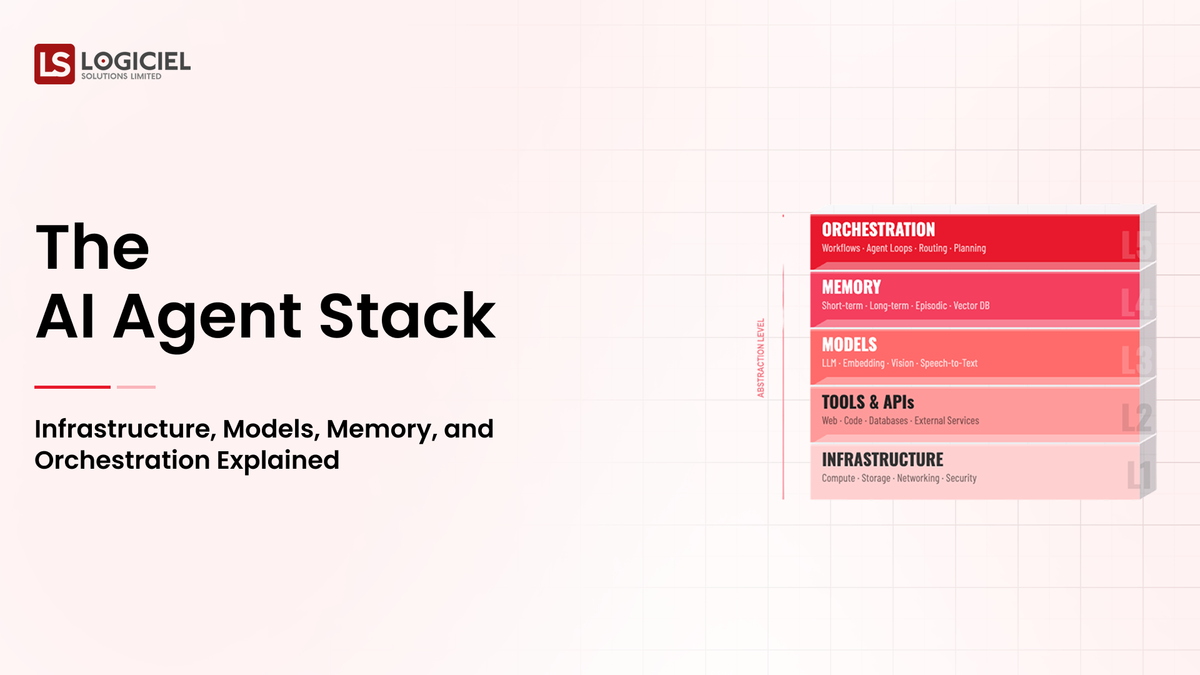

However, building reliable AI agents requires much more than connecting a language model to a prompt interface. Production-ready agent systems rely on a structured technology stack that integrates multiple components working together.

This stack includes reasoning models, context retrieval and memory systems, tool integrations, orchestration frameworks, observability layers, governance controls, and infrastructure platforms.

For CTOs, platform engineers, and system architects, understanding this stack is essential for designing scalable and reliable agent systems.

This article explores the major components of the AI agent stack and explains how these layers work together to enable intelligent automation.


Why AI Agents Require a Technology Stack

Traditional software systems follow deterministic logic.

Developers define rules, workflows, and data structures, and the system executes those instructions consistently.

AI agents operate differently.

They interpret goals, reason through possible actions, and interact dynamically with external systems. Because of this dynamic behavior, agent systems require multiple layers of infrastructure.

A typical agent workflow might involve retrieving relevant context, generating a plan, calling external APIs, evaluating results, and adjusting actions based on feedback.

Each of these steps relies on different technologies.

Without a structured stack, managing these interactions becomes difficult.

The AI agent stack organizes these capabilities into layers that allow engineering teams to build maintainable and scalable systems.
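The typical workflow described above (retrieve context, plan, call an external system, evaluate the result, adjust) can be sketched as a small loop. This is a minimal illustration, not a reference implementation: `run_agent`, `AgentRun`, and the three `fake_*` callables are hypothetical names, and the stubs stand in for a real retrieval system, reasoning model, and API client.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentRun:
    """Everything the agent knows about one task (hypothetical structure)."""
    goal: str
    context: list = field(default_factory=list)
    history: list = field(default_factory=list)  # (action, result) pairs


def run_agent(
    goal: str,
    retrieve: Callable[[str], list],
    plan: Callable[[AgentRun], Optional[str]],
    act: Callable[[str], str],
    max_steps: int = 5,
) -> AgentRun:
    """Retrieve context once, then plan and act until the planner stops."""
    run = AgentRun(goal=goal, context=retrieve(goal))
    for _ in range(max_steps):
        # The planner sees earlier results, so it can adjust its next action.
        action = plan(run)
        if action is None:
            break
        run.history.append((action, act(action)))
    return run


# Stubs standing in for real components (assumptions for illustration only).
def fake_retrieve(goal: str) -> list:
    return ["runbook: check recent deployments first"]


def fake_plan(run: AgentRun) -> Optional[str]:
    steps = ["fetch_logs", "summarize"]
    done = len(run.history)
    return steps[done] if done < len(steps) else None


def fake_act(action: str) -> str:
    return f"{action}: ok"


run = run_agent("investigate failure", fake_retrieve, fake_plan, fake_act)
print(run.history)
```

The `max_steps` cap is a deliberate choice in this sketch: because the planner is free to keep proposing actions, the loop needs a hard upper bound.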

Layer 1: The Reasoning Model

At the core of every AI agent is a reasoning model.

This model interprets instructions, analyzes context, and generates responses or action plans.

Large language models are commonly used as reasoning engines because they can process natural language and generate structured outputs.

For example, when a developer asks an agent to investigate a system failure, the reasoning model interprets the request and determines which steps may be required.

It may decide to retrieve logs, analyze error messages, and search documentation for related issues.

The reasoning model is responsible for generating these decisions.

However, the model alone cannot execute actions or access external systems. That is where other layers of the stack become important.
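One way to picture the boundary between deciding and executing is to have the model return a structured plan that other layers validate and run. The sketch below assumes a JSON plan format and a hypothetical `stub_model` function in place of a real language model call; the action names are illustrative and mirror the failure-investigation example above.

```python
import json

# Actions the rest of the stack knows how to execute (illustrative names).
ALLOWED_ACTIONS = {"retrieve_logs", "analyze_errors", "search_docs"}


def stub_model(prompt: str) -> str:
    """Stand-in for a language model call; returns a fixed JSON plan."""
    return json.dumps({
        "steps": [
            {"action": "retrieve_logs", "target": "checkout-service"},
            {"action": "analyze_errors", "target": "checkout-service"},
            {"action": "search_docs", "target": "timeout errors"},
        ]
    })


def parse_plan(raw: str) -> list:
    """Parse the model output and reject actions the stack cannot run."""
    steps = json.loads(raw).get("steps", [])
    for step in steps:
        if step.get("action") not in ALLOWED_ACTIONS:
            raise ValueError(f"unknown action: {step.get('action')!r}")
    return steps


steps = parse_plan(stub_model("Investigate the checkout-service failure"))
for step in steps:
    print(step["action"], "->", step["target"])
```

Note that nothing here executes an action. The model only proposes steps, and validation happens before any other layer acts on them.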

Layer 2: Context and Retrieval Systems

AI agents rely heavily on context.

Without access to relevant information, reasoning models produce unreliable results.

Context systems provide the knowledge that agents use to perform reasoning.

In engineering environments, this context may include:

- source code repositories

- technical documentation

- system logs

- architecture diagrams

- monitoring metrics

Retrieval systems allow agents to access this information when needed.

Many organizations implement retrieval systems using vector databases that store semantic embeddings of documents.

When an agent receives a request, it retrieves the most relevant information from these databases and includes it in the reasoning process.

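This technique, often called retrieval-augmented generation (RAG), can significantly improve agent accuracy because the model reasons over relevant source material instead of relying only on what it learned during training.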
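The retrieval step can be illustrated with a toy example. The sketch below uses word counts as a stand-in for semantic embeddings and an in-memory list as a stand-in for a vector database, so it shows only the ranking idea; a production system would use a real embedding model and store. All function names and documents are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (assumption, not semantic)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


DOCS = [
    "deployment failed because the config map was missing",
    "how to rotate database credentials",
    "dashboard shows high cpu usage on worker nodes",
]


def retrieve(query: str, docs: list, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, docs: list, k: int = 1) -> str:
    """Place the retrieved text in front of the question for the model."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


print(build_prompt("why did the deployment fail", DOCS))
```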

Layer 3: Memory Systems

Memory allows AI agents to maintain continuity across tasks.

Without memory, an agent would treat each interaction as an isolated event.

Most agent architectures include several types of memory.

Short-term memory stores context related to the current task. For example, it may track the steps an agent has already performed during an investigation.

Long-term memory stores persistent knowledge such as previous incidents or architectural documentation.

Some systems also include structured state memory that tracks workflow progress.

By combining these memory types, agents can maintain context across interactions and perform more sophisticated reasoning.
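The three memory types described above can be sketched as one small container. This is an assumption-laden illustration: `AgentMemory` and its method names are hypothetical, the short-term buffer is a bounded queue, and the long-term store is a plain dictionary where a real system would likely use a database.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    # Short-term: the most recent steps of the current task (bounded).
    short_term: deque = field(default_factory=lambda: deque(maxlen=3))
    # Long-term: persistent knowledge such as previous incidents.
    long_term: dict = field(default_factory=dict)
    # Structured state: where the workflow currently stands.
    state: dict = field(default_factory=dict)

    def remember_step(self, step: str) -> None:
        self.short_term.append(step)  # oldest entry is dropped when full

    def store_fact(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def set_state(self, key: str, value: str) -> None:
        self.state[key] = value


memory = AgentMemory()
for step in ["fetched logs", "parsed errors", "searched docs", "drafted summary"]:
    memory.remember_step(step)
memory.store_fact("incident-2023-checkout", "caused by expired certificate")
memory.set_state("phase", "summarizing")

print(list(memory.short_term))
print(memory.long_term, memory.state)
```

Bounding the short-term buffer reflects a practical constraint: the current-task context eventually has to fit into the model's input, so older steps are dropped or summarized.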

Memory systems are essential for building agents that behave consistently over time.

Layer 4: Tool Integration

One of the defining characteristics of AI agents is their ability to interact with external tools.

Tools allow agents to move beyond conversation and perform real actions.

For software engineering environments, these tools may include:

- Git repositories for code analysis

- CI/CD systems for build and deployment diagnostics

- monitoring platforms for infrastructure metrics

- ticketing systems for incident tracking

- cloud APIs for infrastructure management

The agent decides when to call these tools based on the reasoning process.

For example, if an agent detects that a deployment failure may be related to configuration changes, it may query the CI/CD platform to retrieve build logs.

Tool integrations therefore enable agents to interact with real systems rather than simply generating text responses.
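A common way to expose tools to an agent is a registry that maps tool names to functions, so the reasoning layer only needs to produce a name and arguments. The sketch below assumes such a registry; `tool`, `call_tool`, and `get_build_logs` are hypothetical names, and the function body is a stub rather than a real CI/CD API call.

```python
from typing import Callable

# Registry of tool name -> callable (hypothetical structure).
TOOLS: dict = {}


def tool(name: str) -> Callable:
    """Decorator that registers a function as a callable tool."""
    def register(fn: Callable) -> Callable:
        TOOLS[name] = fn
        return fn
    return register


@tool("get_build_logs")
def get_build_logs(pipeline_id: str) -> str:
    # Stub: a real integration would query the CI/CD platform here.
    return f"[stub] logs for pipeline {pipeline_id}"


def call_tool(name: str, **kwargs) -> str:
    """Dispatch a tool call chosen by the reasoning layer."""
    if name not in TOOLS:
        raise KeyError(f"no tool registered as {name!r}")
    return TOOLS[name](**kwargs)


# The agent suspects a configuration change and asks for build logs.
print(call_tool("get_build_logs", pipeline_id="deploy-42"))
```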

Layer 5: Orchestration Frameworks

Orchestration is the layer that coordinates the agent’s workflow.

While the reasoning model generates ideas about what to do, the orchestration framework determines how those actions are executed.

For example, if an agent needs to diagnose a system failure, the orchestration layer may perform the following sequence:

- Retrieve recent logs

- Analyze error messages

- Retrieve documentation about the affected service

- Generate a diagnostic summary

The orchestration framework ensures that these steps occur in the correct order.

It also manages retries, error handling, and workflow state.

Without orchestration, agent systems would struggle to coordinate complex multi-step tasks.
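The diagnostic sequence above, together with retries and workflow state, can be sketched as a simple sequential runner. This is a minimal illustration under stated assumptions: `run_workflow` is a hypothetical function, the step functions are stubs, and the first step is made to fail once on purpose to show the retry path.

```python
from typing import Callable


def run_workflow(steps: list, max_retries: int = 2) -> dict:
    """Run (name, function) steps in order, retrying each on failure."""
    state = {"completed": [], "results": {}, "failed": None}
    for name, fn in steps:
        for attempt in range(max_retries + 1):
            try:
                state["results"][name] = fn(state)
                state["completed"].append(name)
                break
            except Exception as exc:
                if attempt == max_retries:
                    # Out of retries: record the failure and stop the workflow.
                    state["failed"] = f"{name}: {exc}"
                    return state
    return state


attempts = {"count": 0}


def retrieve_logs(state: dict) -> str:
    attempts["count"] += 1
    if attempts["count"] == 1:
        raise TimeoutError("log store timed out")  # simulated transient error
    return "ERROR connection refused"


def analyze_errors(state: dict) -> str:
    logs = state["results"]["retrieve_logs"]
    return "connection refused" if "connection refused" in logs else "unknown"


def retrieve_docs(state: dict) -> str:
    return "doc: check the service's network policy"


def summarize(state: dict) -> str:
    r = state["results"]
    return f"cause={r['analyze_errors']}; see {r['retrieve_docs']}"


state = run_workflow([
    ("retrieve_logs", retrieve_logs),
    ("analyze_errors", analyze_errors),
    ("retrieve_docs", retrieve_docs),
    ("summarize", summarize),
])
print(state["completed"], state["results"]["summarize"])
```

Passing the shared `state` dictionary into every step is what lets later steps build on earlier results, and it doubles as the record of workflow progress.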

Layer 6: Observability and Monitoring

Observability is one of the most important components of the AI agent stack.

Because agent reasoning is probabilistic, engineers must be able to understand how decisions are made.

Observability systems record detailed execution traces.

These traces may include:

- prompts sent to the reasoning model

- context retrieved from knowledge bases

- tool interactions

- generated outputs

By examining these traces, engineers can understand how an agent arrived at a particular decision.

Monitoring systems also track performance metrics such as response time, task success rates, and computational cost.

These insights help engineering teams improve reliability and efficiency.
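An execution trace of the kind described above can be sketched as a list of timed events. The example below is hypothetical throughout: `Trace` and `TraceEvent` are illustrative names, and the lambdas stand in for real retrieval and model calls. It records the input, output, and duration of each step.

```python
import time
from dataclasses import dataclass, field


@dataclass
class TraceEvent:
    kind: str          # e.g. "retrieval", "model", "tool"
    input: str
    output: str
    duration_ms: float


@dataclass
class Trace:
    events: list = field(default_factory=list)

    def record(self, kind: str, fn, arg):
        """Call fn(arg), timing it and storing input and output."""
        start = time.perf_counter()
        result = fn(arg)
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.events.append(TraceEvent(kind, str(arg), str(result), elapsed_ms))
        return result

    def total_ms(self) -> float:
        return sum(e.duration_ms for e in self.events)


trace = Trace()
context = trace.record("retrieval", lambda q: "runbook: restart worker", "queue stalled")
answer = trace.record("model", lambda prompt: "restart the worker", f"context={context}")

for event in trace.events:
    print(f"{event.kind}: {event.input!r} -> {event.output!r}")
```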

Layer 7: Safety and Governance

Enterprise agent systems must operate within strict governance frameworks.

Agents interact with sensitive systems and data, so organizations must ensure that these interactions remain safe and controlled.

Safety layers implement guardrails that restrict agent behavior.

For example, an agent analyzing infrastructure metrics may be allowed to retrieve monitoring data but not modify production configurations.

Governance frameworks may also include approval workflows that require human oversight for critical actions.

These controls ensure that agent automation remains aligned with organizational policies.
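A guardrail of this kind can be sketched as a policy check that runs before any tool call. The action names and the `authorize` function below are assumptions for illustration: read-only actions pass, critical actions wait for a named human approver, and anything unlisted is denied by default.

```python
from typing import Optional

# Illustrative policy: which actions are safe, and which need a human.
READ_ONLY = {"get_metrics", "get_logs"}
NEEDS_APPROVAL = {"update_config", "restart_service"}


def authorize(action: str, approved_by: Optional[str] = None) -> str:
    """Return 'allow', 'pending_approval', or 'deny' for a proposed action."""
    if action in READ_ONLY:
        return "allow"
    if action in NEEDS_APPROVAL:
        return "allow" if approved_by else "pending_approval"
    return "deny"  # default-deny anything the policy does not list


print(authorize("get_metrics"))
print(authorize("update_config"))
print(authorize("update_config", approved_by="oncall-lead"))
print(authorize("delete_database"))
```

Denying unlisted actions by default is a design choice in this sketch: a new tool has no effect until someone explicitly adds it to the policy.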

Layer 8: Infrastructure and Deployment

The final layer of the AI agent stack is infrastructure.

Agent systems require compute resources to run reasoning models, retrieval systems, and orchestration frameworks.

Infrastructure platforms manage these resources and ensure that agents can scale as workloads increase.

For example, organizations may deploy agents within containerized environments that allow workloads to scale dynamically.

Infrastructure monitoring systems track system performance and ensure that resource usage remains efficient.

A well-designed infrastructure layer ensures that agent systems remain responsive and cost-effective.
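Dynamic scaling usually comes down to a rule that maps observed load to a number of running instances. The sketch below uses a proportional rule similar to the one documented for the Kubernetes Horizontal Pod Autoscaler; the article does not name a specific platform, so treat the function name, the load metric, and the bounds as assumptions.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_load: float,
    target_load: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Scale replicas in proportion to load, clamped to fixed bounds."""
    raw = math.ceil(current_replicas * current_load / target_load)
    return max(min_replicas, min(max_replicas, raw))


# Load is per-replica, e.g. queued agent tasks per worker (hypothetical metric).
print(desired_replicas(2, current_load=90, target_load=60))   # scale up
print(desired_replicas(4, current_load=20, target_load=60))   # scale down
print(desired_replicas(5, current_load=300, target_load=60))  # capped at max
```

The upper bound matters for cost as much as for stability, since each additional agent worker may also drive additional model inference calls.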

How the Layers Work Together

Although the AI agent stack contains multiple layers, these components work together as a unified system.

When a user or system signal triggers an agent task, the orchestration layer initiates the workflow.

The reasoning model interprets the request and determines which actions may be required.

Context retrieval systems provide relevant knowledge, while memory systems track workflow state.

The agent calls external tools to gather information or perform actions.

Observability systems record each step of the process, while governance layers ensure that operations remain safe.

Finally, infrastructure platforms provide the compute resources required to run the system.

Together, these layers enable AI agents to operate as structured software systems rather than experimental prototypes.

Designing a Practical AI Agent Stack

Engineering teams designing AI agent systems should begin by identifying the core layers required for their use cases.

Simple agents may require only reasoning models, retrieval systems, and basic orchestration.

More advanced deployments may include multiple memory layers, observability platforms, and governance frameworks.

Organizations should also consider how agent systems integrate with existing infrastructure.

For example, engineering agents may need access to version control systems, monitoring tools, and deployment pipelines.

Designing the stack with these integrations in mind ensures that agents operate effectively within the broader engineering ecosystem.

The Evolution of the Agent Stack

The AI agent stack is still evolving rapidly.

As adoption grows, new tools and frameworks will likely emerge to simplify the process of building agent systems.

Cloud platforms may introduce native support for agent orchestration and memory systems.

Developer tools may begin integrating agents directly into software development workflows.

Over time, these innovations may standardize the architecture of agent systems much like cloud infrastructure standardized application deployment.

Organizations that develop expertise in agent architectures today will be better positioned to take advantage of these future developments.

Closing Perspective

AI agents represent a new class of software systems capable of reasoning, interacting with tools, and executing complex workflows.

However, building reliable agents requires more than powerful models.

It requires a structured technology stack that integrates reasoning engines, context retrieval systems, memory layers, tool integrations, orchestration frameworks, observability tools, governance controls, and scalable infrastructure.

For CTOs and engineering leaders, understanding this stack is essential for designing scalable and reliable agent systems.

As agent technologies continue to mature, organizations that adopt disciplined architectural approaches will unlock the full potential of intelligent automation.
