Your line-of-business leader has heard about AI as a Service from a vendor demo and wants to know if it is the fast path. Your board has heard about in-house build and wants to know if it is the moat. Engineering has heard about both and is quietly worried about owning the result either way.

This is more than a procurement decision. It is a framing decision about how your company captures value from AI over the next five years.

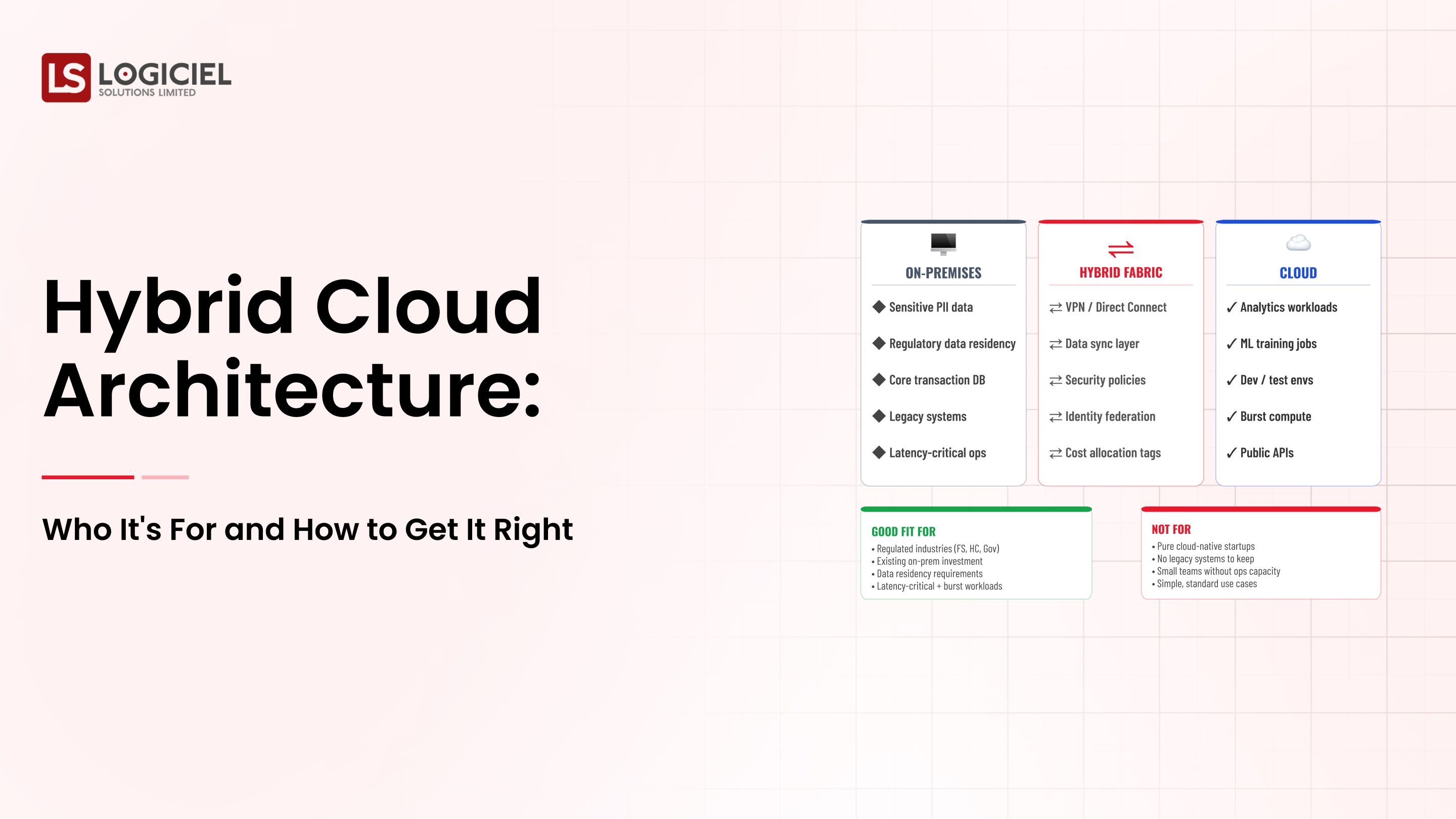

A modern AI strategy is rarely a binary build-vs-buy answer. It is a layered portfolio: some layers you buy, some you wrap, some you build.

However, most CTOs are still being asked to choose one path or the other, which is the wrong framing.

If you are a CTO or VP of Engineering responsible for building or scaling an AI program portfolio, this article will help you:

- Define what AI as a Service actually means at the layer level

- Understand where buy, build, and wrap each fit in your stack

- Design a portfolio that survives leadership and vendor turnover

To do that, let's start with the basics.

What Is AI as a Service? The Basic Definition

At a high level, AI as a Service means accessing AI capabilities through a vendor-managed API, where the vendor owns the model and parts of the orchestration. In-house build means owning the orchestration, eval, observability, and integration code yourself.

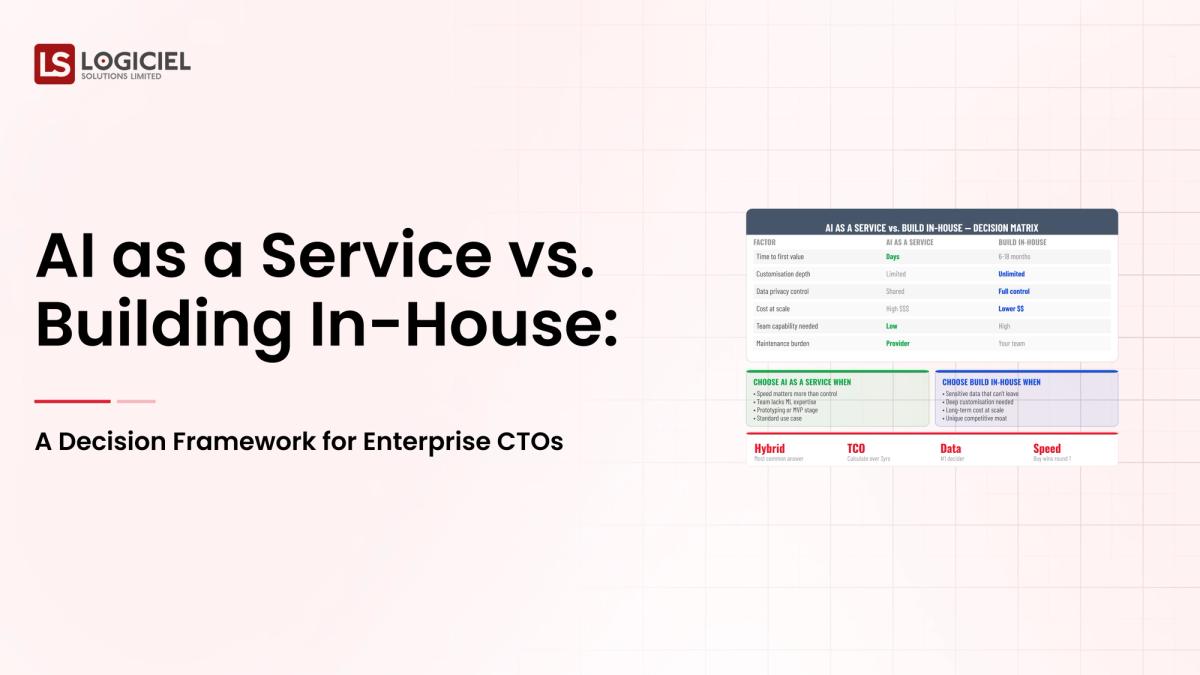

To compare:

If buying is renting an apartment and building is constructing a house, the wrap is the renovation work that makes either one yours. Most enterprise programs need all three, layer by layer.

Why Is AI as a Service Necessary?

Problems that AI as a Service, treated as a layered decision, helps resolve:

- Resolving the speed-vs-control trade-off without picking the wrong end

- Giving finance a defensible cost shape under multiple usage scenarios

- Avoiding lock-in that becomes expensive when the vendor landscape shifts

How AI as a Service Resolves These Issues

- Decomposes a fuzzy decision into layer-specific choices

- Separates capability questions from procurement questions

- Forces the exit conversation before the contract is signed

Core Components of AI as a Service

- Outcome and metric layer (always you)

- Workflow integration layer (build or wrap)

- Orchestration and routing (build or wrap)

- Eval, observability, and audit (build or wrap)

- Model layer (buy in almost all cases)

Modern AI as a Service Tools

- OpenAI, Anthropic, AWS Bedrock, and Google Vertex for AI as a Service

- Hugging Face TGI, vLLM, and Together AI for hybrid hosting

- LangChain, LlamaIndex, and DSPy for orchestration

- LangSmith, Arize, Galileo for evaluation and observability

- Internal platform stacks built on Kubernetes, Ray, and Triton

Most enterprises end up with a portfolio across these categories rather than a single vendor.

Other Core Issues a Layered Portfolio Solves

- Enables vendor switching without product rewrite

- Gives security and compliance teams predictable review surfaces

- Aligns engineering hiring with the layers you actually own

In Summary: AI as a Service is one layer of a portfolio decision, not a wholesale replacement for in-house engineering.

Importance of AI as a Service in 2026

The build-vs-buy decision has shifted from a one-time call to an ongoing portfolio practice. Four reasons explain why CTOs are revisiting this in 2026.

1. Vendor pricing models keep shifting.

Per-token, per-request, per-user, per-seat. The pricing model that fits today may not fit in twelve months. Decisions made on this year's pricing rarely survive next year's renewal.

2. Foundation models keep shipping.

Locking your code to a single model API is now the most expensive form of lock-in. Abstraction at the model layer is no longer optional.

3. Regulatory clarity makes hybrid more viable.

With EU AI Act obligations phasing in and US state-level rules taking effect, the controls you need around any AI capability are similar whether you bought it or built it. The cost difference between buy and build narrows when you must build the wrap either way.

4. Talent shape is changing.

The skills required to operate hybrid AI portfolios are different from the skills required to write models from scratch. Hire for the operating model, not for the past.

Traditional vs. Modern AI as a Service Concepts

- Binary build-vs-buy choice vs. layer-by-layer portfolio decision

- Single-vendor commitment vs. abstracted, swappable model layer

- Cost forecast on today's pricing vs. sensitivity analysis under multiple scenarios

- Procurement-led evaluation vs. technical-led evaluation with procurement support

In summary: AI as a Service is one layer in a portfolio of decisions, not a strategic identity for your AI program.

Details About the Core Components of AI as a Service: What Are You Designing?

Let's go through each layer.

1. Outcome and Metric Layer

Always your team. Vendors who claim they can define your outcome are selling something else.

Responsibilities at this layer:

- Business outcome and success metric

- Failure threshold and redress process

- Owner accountability for the result

2. Workflow Integration Layer

Almost always build or wrap. Buying this layer creates the worst lock-in.

What lives here:

- Customer-facing AI surfaces

- Integration with operating systems of record

- User-experience contracts that survive model changes

3. Orchestration and Routing Layer

The control point that lets you change models without re-architecting.

What this layer decides:

- Which model handles which request

- Retry policies, fallback paths, cost-aware routing

- Per-tenant and per-feature quota enforcement
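The three decisions above can be sketched in a few dozen lines. This is a minimal illustration, not a reference design: `ModelRoute`, `Router`, the flat per-request cost, and the stub provider functions are all hypothetical names and simplifications introduced here. It assumes the cheapest route is tried first, each route gets a fixed number of attempts, and a tenant's quota counts attempts whether or not they succeed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ModelRoute:
    name: str                     # hypothetical label for a provider/model pair
    cost_per_request: float       # assumed flat cost in dollars
    call: Callable[[str], str]    # provider adapter; raises on failure


@dataclass
class Router:
    routes: List[ModelRoute]
    quota_per_tenant: int
    max_attempts_per_route: int = 2
    usage: Dict[str, int] = field(default_factory=dict)

    def handle(self, tenant: str, prompt: str) -> str:
        # Per-tenant quota enforcement happens before any model is called.
        used = self.usage.get(tenant, 0)
        if used >= self.quota_per_tenant:
            raise RuntimeError(f"quota exceeded for tenant {tenant}")
        self.usage[tenant] = used + 1

        # Cost-aware routing: cheapest route first, then fall back upward.
        last_error = None
        for route in sorted(self.routes, key=lambda r: r.cost_per_request):
            for _ in range(self.max_attempts_per_route):
                try:
                    return route.call(prompt)
                except Exception as exc:  # retry, then move to the next route
                    last_error = exc
        raise RuntimeError("all routes failed") from last_error


def flaky_provider(prompt: str) -> str:
    # Stand-in for a cheap provider that is currently timing out.
    raise TimeoutError("provider timeout")


def stable_provider(prompt: str) -> str:
    # Stand-in for a more expensive provider that responds.
    return f"answer to: {prompt}"


router = Router(
    routes=[
        ModelRoute("premium", 0.02, stable_provider),
        ModelRoute("cheap", 0.001, flaky_provider),
    ],
    quota_per_tenant=2,
)
result = router.handle("tenant-a", "hello")
```

Because product code only ever calls `router.handle`, adding or removing a provider is a change to the `routes` list rather than to the application.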

4. Eval, Observability, and Audit Layer

Build or wrap. The eval cases themselves should always be yours.

What this layer produces:

- Continuous quality measurement

- Drift detection on inputs and outputs

- Audit trail that survives a regulator visit
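As a rough sketch of what "the eval cases are yours" can look like in practice, the example below keeps the cases as plain data and measures any model callable against them. `EvalCase`, `pass_rate`, `has_regressed`, the substring check, and the 0.05 tolerance are assumptions made for illustration; real eval suites usually use richer checks.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    must_contain: str   # simplest possible check, used here for brevity


def pass_rate(model: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected text."""
    if not cases:
        raise ValueError("no eval cases supplied")
    passed = sum(
        1 for c in cases if c.must_contain.lower() in model(c.prompt).lower()
    )
    return passed / len(cases)


def has_regressed(current: float, baseline: float, tolerance: float = 0.05) -> bool:
    """Flag a drop larger than `tolerance` against the stored baseline."""
    return (baseline - current) > tolerance


# Hypothetical cases owned by your team, independent of any vendor.
CASES = [
    EvalCase("What is the refund window?", "30 days"),
    EvalCase("Which plan includes SSO?", "enterprise"),
]


def stub_model(prompt: str) -> str:
    # Stand-in for a vendor or self-hosted model call.
    if "refund" in prompt:
        return "Refunds are accepted within 30 days."
    return "Not sure."


rate = pass_rate(stub_model, CASES)
```

The same `CASES` list can be run against a candidate replacement model before a vendor swap, which is what makes the eval layer part of the wrap rather than part of the vendor.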

5. Model Layer

Buy in almost all cases. The exception is small specialized models with stable use cases.

What you decide here:

- Which provider and which model tier

- Hosted vs. self-hosted depending on data and cost

- Upgrade cadence and contract terms

Benefits Gained from Layer Decomposition and Exit Planning

- Decisions become reviewable rather than implicit

- Vendor renewals become negotiations rather than rubber stamps

- Strategy survives the next leadership transition or vendor pivot

How It All Works Together

Outcomes drive integration design. Integration constrains orchestration. Orchestration calls into the model layer. Eval and audit run alongside every layer. The wrap is the part you own regardless of who the vendor is. The model is the part that gets swapped.

Common Misconception

AI as a Service vs. in-house build is a single, binary decision.

It is a portfolio of layered decisions. You will buy some, you will wrap some, you will build some. The mix should be explicit, not implicit.

Key Takeaway: Each layer requires its own build-vs-buy analysis, with its own scorecard.

Real-World AI as a Service in Action

Let's look at how AI as a Service works in a real-world example.

Consider a CTO scoping the AI strategy for a mid-market enterprise that wants:

- Customer-facing AI features that ship in two quarters

- Cost predictability through year three

- An exit path if the chosen vendor underperforms

Step 1: Write the Outcome

One sentence with a number attached. Signed off by the line-of-business leader and the CFO.

- Business outcome

- Measurable success threshold

- Cost ceiling for year one

Step 2: Decompose the Stack into Layers

Use the five-layer model. For each layer, name the function it has to perform in your environment.

- List specific systems and data shapes per layer

- Identify the layers where differentiation is possible

- Identify the layers where it is not

Step 3: Estimate the Usage Curve

Model three scenarios: half your estimate, your estimate, and double your estimate. Run the cost math for each path under each scenario.

- Per-request cost shape

- People-cost shape under each path

- Operating burden over twenty-four months
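The scenario math can be sketched as a small table generator. Everything numeric below is a placeholder, and the model is deliberately simple: each path is assumed to have one fixed monthly cost (people and platform) and one flat per-request cost. `PathCost`, `scenario_table`, and `break_even_requests` are hypothetical names for this illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PathCost:
    name: str
    fixed_monthly: float      # people and platform cost, dollars per month
    cost_per_request: float   # variable cost, dollars per request


def monthly_cost(path: PathCost, requests: int) -> float:
    return path.fixed_monthly + path.cost_per_request * requests


def scenario_table(paths: List[PathCost], estimate: int) -> Dict[str, Dict[str, float]]:
    """Cost of each path at half, expected, and double the usage estimate."""
    scenarios = {"half": estimate // 2, "expected": estimate, "double": estimate * 2}
    return {
        p.name: {label: monthly_cost(p, n) for label, n in scenarios.items()}
        for p in paths
    }


def break_even_requests(a: PathCost, b: PathCost) -> float:
    """Monthly volume at which two paths cost the same.

    Assumes the two paths have different per-request costs.
    """
    return (b.fixed_monthly - a.fixed_monthly) / (a.cost_per_request - b.cost_per_request)


# Placeholder figures only; substitute your own estimates.
PATHS = [
    PathCost("buy", fixed_monthly=10_000, cost_per_request=0.02),
    PathCost("build", fixed_monthly=60_000, cost_per_request=0.002),
]
table = scenario_table(PATHS, estimate=1_000_000)
crossover = break_even_requests(PATHS[0], PATHS[1])
```

With these placeholder figures, the buy path stays cheaper in all three scenarios because the crossover sits near 2.8 million requests per month, above the doubled estimate. Different inputs can flip that result, which is the point of running all three.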

Step 4: Score Each Layer

Score each layer on five criteria: differentiation potential, lock-in risk, cost shape, time-to-value, and operating burden.

- Buy candidates: low differentiation, high operating burden

- Build candidates: high differentiation, low operating burden

- Wrap candidates: everything in between
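The classification rule above can be written down directly. The sketch below uses only the two criteria named in the buy and build rules (differentiation and operating burden); the 1-to-5 scale, the `low` and `high` cutoffs, and the example scores are assumptions for illustration, and a fuller scorecard would also weigh lock-in risk, cost shape, and time-to-value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerScore:
    layer: str
    differentiation: int    # 1 (none) to 5 (core to the product)
    operating_burden: int   # 1 (light) to 5 (heavy) if you run it yourself


def recommend(score: LayerScore, low: int = 2, high: int = 4) -> str:
    # Buy: low differentiation, high operating burden.
    if score.differentiation <= low and score.operating_burden >= high:
        return "buy"
    # Build: high differentiation, low operating burden.
    if score.differentiation >= high and score.operating_burden <= low:
        return "build"
    # Wrap: everything in between.
    return "wrap"


# Hypothetical scores for three of the five layers.
SCORES = [
    LayerScore("model", differentiation=1, operating_burden=5),
    LayerScore("workflow integration", differentiation=5, operating_burden=2),
    LayerScore("orchestration and routing", differentiation=3, operating_burden=3),
]
recommendations = {s.layer: recommend(s) for s in SCORES}
```

Keeping the scores as data makes the quarterly review a diff against last quarter's numbers rather than a fresh debate.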

Step 5: Write the Exit Plan and Sign

Before the contract, write what it would take to remove the vendor in eighteen months. The migration shape, the rough cost, the named alternatives.

- Documented exit cost in dollars and weeks

- Named alternative vendors per layer

- Annual review trigger that revisits the decision
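One way to keep the exit plan from living only in a slide is to store it as a small record that can be checked automatically. The sketch below is illustrative: `ExitPlan`, its field names, the vendor names, and the figures are all hypothetical, and the 365-day review window simply mirrors the annual trigger in the list above.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class ExitPlan:
    layer: str
    vendor: str
    exit_cost_dollars: int
    exit_weeks: int
    alternatives: List[str]
    last_reviewed: date

    def gaps(self) -> List[str]:
        """List what is missing before the contract should be signed."""
        problems = []
        if self.exit_cost_dollars <= 0 or self.exit_weeks <= 0:
            problems.append("exit cost is not documented in dollars and weeks")
        if not self.alternatives:
            problems.append("no named alternative vendor")
        return problems

    def review_due(self, today: date, max_age_days: int = 365) -> bool:
        """True once the plan is older than the review window."""
        return (today - self.last_reviewed).days > max_age_days


# Placeholder values only.
plan = ExitPlan(
    layer="model",
    vendor="Vendor A",
    exit_cost_dollars=250_000,
    exit_weeks=12,
    alternatives=["Vendor B", "self-hosted open model"],
    last_reviewed=date(2025, 1, 15),
)
incomplete = ExitPlan("model", "Vendor A", 0, 0, [], date(2025, 1, 15))
```

A plan with an empty `gaps()` list and a `review_due` check wired into a scheduled job covers all three bullets above in a form that survives staff turnover.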

Where It Works Well

- Layer-level decisions documented and reviewed quarterly

- Thin abstraction at the model layer that supports vendor swaps

- Cost model run against multiple usage scenarios, not just the vendor template

Where It Does Not Work Well

- Procurement-led evaluation that skips the technical conversation

- Single-model lock without abstraction in product code

- Multi-year contract without a documented exit plan

Key Takeaway: Build-vs-buy is a portfolio practice, not a single decision. The teams that treat it as ongoing review keep their leverage.

Common Pitfalls

i) Vendor-led scoping

Letting the vendor sales conversation define your scope is the most expensive shortcut in enterprise AI.

- Scope expands to fit the vendor product

- Outcome becomes whatever the demo showed

- Switching cost grows quietly

ii) Single-model lock without abstraction

If your application code calls a vendor API directly, you have signed a contract you did not negotiate. Abstract the call.
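A thin abstraction can be as small as one interface and one adapter per vendor. The sketch below is a minimal illustration under stated assumptions: `TextModel`, `VendorAAdapter`, `VendorBAdapter`, and `summarize_ticket` are hypothetical names, and the adapters return canned strings where a real vendor SDK call would go.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only model surface that product code is allowed to see."""

    def complete(self, prompt: str) -> str: ...


class VendorAAdapter:
    # Hypothetical adapter; the real vendor SDK call would live only here.
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBAdapter:
    # A second hypothetical adapter with the same shape.
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


def summarize_ticket(model: TextModel, ticket_text: str) -> str:
    # Product code depends on the TextModel interface, never on a vendor SDK.
    return model.complete(f"Summarize: {ticket_text}")


summary_a = summarize_ticket(VendorAAdapter(), "login fails on mobile")
summary_b = summarize_ticket(VendorBAdapter(), "login fails on mobile")
```

Swapping vendors then means writing one new adapter and changing which one is constructed, while `summarize_ticket` and every other caller stay untouched.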

iii) Building everything because in-house feels safer

Building parts of the stack that are not differentiating creates operational debt that compounds. Buy what is undifferentiating.

iv) Skipping the exit plan

A multi-year contract without an exit plan is not procurement. It is a bet your future self has to pay for.

Takeaway from these lessons: Most build-vs-buy mistakes are framing mistakes, not technology mistakes. Frame at the layer level and the answers improve.

AI as a Service Best Practices: What High-Performing Teams Do Differently

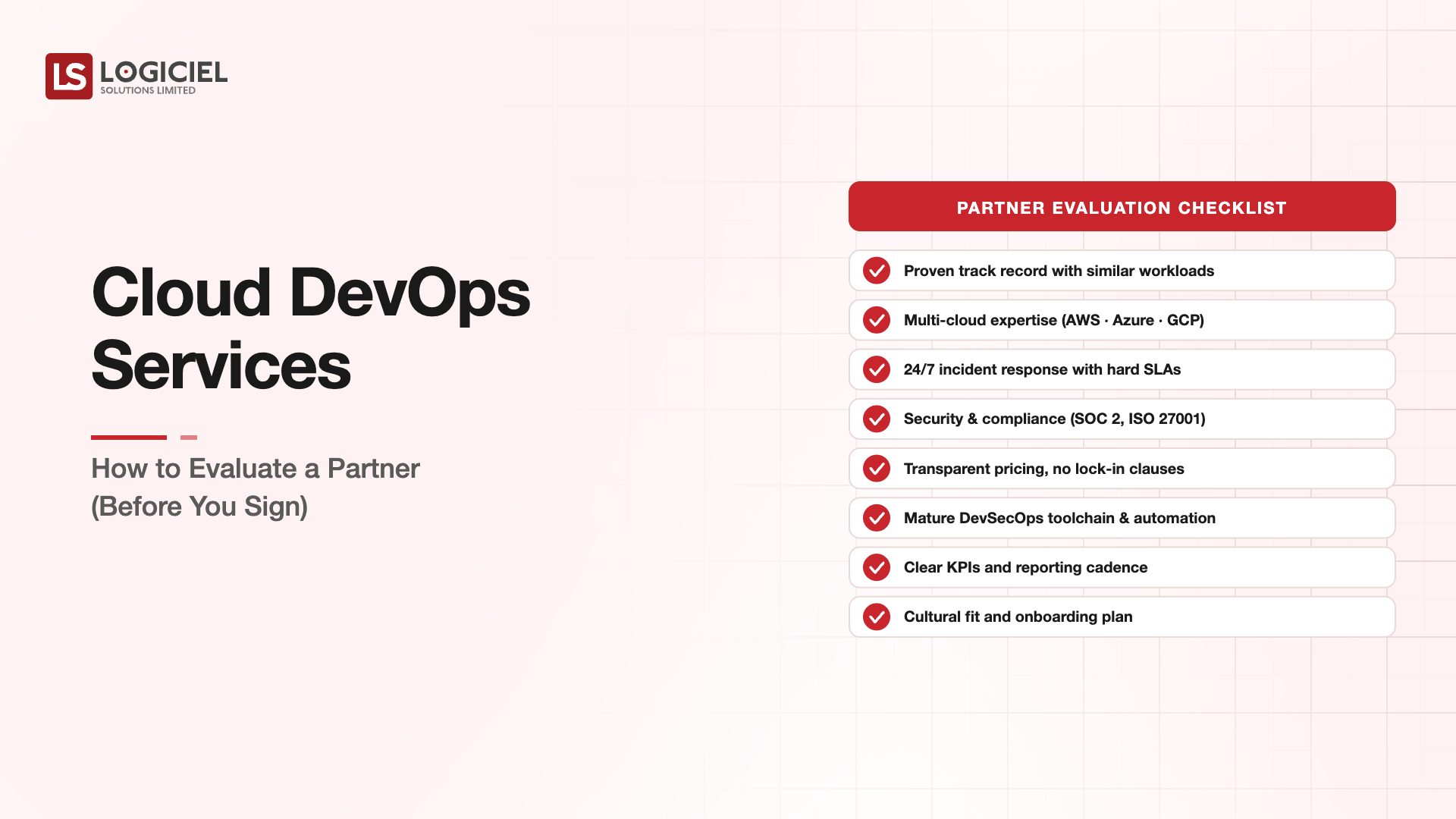

1. Run the layer-by-layer scorecard

For each layer, score on differentiation, lock-in risk, cost shape, time-to-value, and operating burden. Update quarterly. Do not let the scorecard become annual.

2. Build the wrap before the model goes live

Whichever path you chose, build the wrap first. The wrap survives model and vendor changes. Programs that build the wrap last rebuild it twice.

3. Run sensitivity analysis on cost

Three scenarios on the usage curve. Three providers in the comparison. The vendor with the cleanest cost curve under the worst case is often the right answer.

4. Define exit criteria before contract signing

What conditions would trigger a switch. What the migration would look like. What the named alternatives are. Vendors that cannot have this conversation are vendors to avoid.

5. Hire for the operating model

The skills you need to operate a hybrid AI portfolio are different from the skills you needed five years ago. Hire integration, eval, and platform talent. Buying skills as a service rarely scales.

Logiciel's value add is helping CTOs run the layer-by-layer analysis, write defensible exit plans, and design the wrap that lets the model layer remain substitutable.

Takeaway for High-Performing Teams: The portfolio that holds up is the one that is reviewed every quarter, not negotiated every three years.

Signals You Are Designing AI as a Service Correctly

How do you know the AI as a Service program is set up to succeed? Not in a board deck or a celebration, but in the daily evidence the team produces. Below are the signals that distinguish programs that are on the right path from programs that only look like progress.

- The team can describe failure modes without flinching. People who actually run AI as a Service systems will tell you the last three things that broke. People who have only read about it will not.

- Cost is observable in real time. The team can tell you, today, how much they spent yesterday on this and what drove the change.

- Change is boring. New versions, new models, new pipelines all roll forward and roll back the same way. Heroic deploys signal an immature system.

- Eval is continuous, not ceremonial. A live dashboard refreshed at least daily, not a quarterly slide.

- Vendor lock-in is a known quantity. The team can name the dependencies that would hurt to remove and the rip-and-replace cost in dollars and weeks.

Adjacent Capabilities and Connected Work

This work does not exist in isolation. AI as a Service depends on, and feeds into, several adjacent capabilities. Building one without thinking about the others is the most common scoping mistake.

In most enterprise programs, AI as a Service shares infrastructure with the data platform, the observability stack, and the security review process. It shares team capacity with platform engineering, applied ML, and SRE. And it shares leadership attention with whatever AI initiative is next on the roadmap. Naming these adjacencies upfront helps the program scope realistically and helps leadership see the work as a portfolio rather than a one-off project.

The most common mistake in adjacent-capability scoping is treating each adjacency as someone else's problem. The integration with the data platform is your problem. The security review of the runtime is your problem. The on-call rotation that covers the system you ship is your problem. Pretending otherwise pushes work to teams that did not plan for it, and the work returns to you later as a delay or an incident. Own the adjacencies you depend on; partner with the teams that own them; share the timeline.

Conclusion

AI as a Service vs. in-house build is the wrong question. The right question is which layer of your stack you should own and which you should rent, and how you keep both reviewable.

Key Takeaways:

- The decision is layered, not binary

- Buy what is undifferentiating, build what is, wrap everything

- Write the exit plan before the contract

When the build-vs-buy portfolio is designed deliberately, the benefits compound:

- Faster time-to-value through reuse of the wrap

- Predictable cost shape across vendor changes

- Reduced lock-in and stronger negotiating position at renewal

- Consistent governance posture across all AI work

Call to Action

If your team is debating AI as a Service vs. in-house build, the next step is to run the layer-by-layer analysis before any vendor conversation.

Learn More Here:

- Hybrid Delivery Model CTOs AI First Engineering 2026

- When to Build vs Refactor Decision Framework for Tech Leaders

- AI Software Development Service

At Logiciel Solutions, we run build-vs-buy reviews with engineering and AI leaders, including the layer scoring, exit-plan drafting, and reference architecture work that turns a fuzzy strategy question into a defensible portfolio.

Explore how to design your AI portfolio.

Frequently Asked Questions

What is AI as a Service?

A managed AI capability accessed by API, where the vendor owns the model and parts of the orchestration. You own the wrap, the integration, the data, and the outcome.

What is the difference between AI as a Service and an in-house build?

AI as a Service shifts spend from people to the vendor. An in-house build shifts it toward people, model APIs, and platform tooling. Most enterprise programs end up with a hybrid that mixes both at different layers of the stack.

When does AI as a Service make sense?

When the capability is undifferentiating in your context, when speed-to-value matters more than per-unit cost, when usage is uncertain, or when your hiring path cannot deliver the team in your timeline.

What is the biggest hidden cost of AI as a Service?

Switching cost. If your code calls vendor APIs directly, the cost of changing vendor grows quietly. The fix is a thin abstraction at the model layer from day one.

What is the biggest mistake in AI as a Service decisions?

Skipping the exit plan. A multi-year contract without a documented exit path is a bet your future self pays for. Write the exit before the signature.