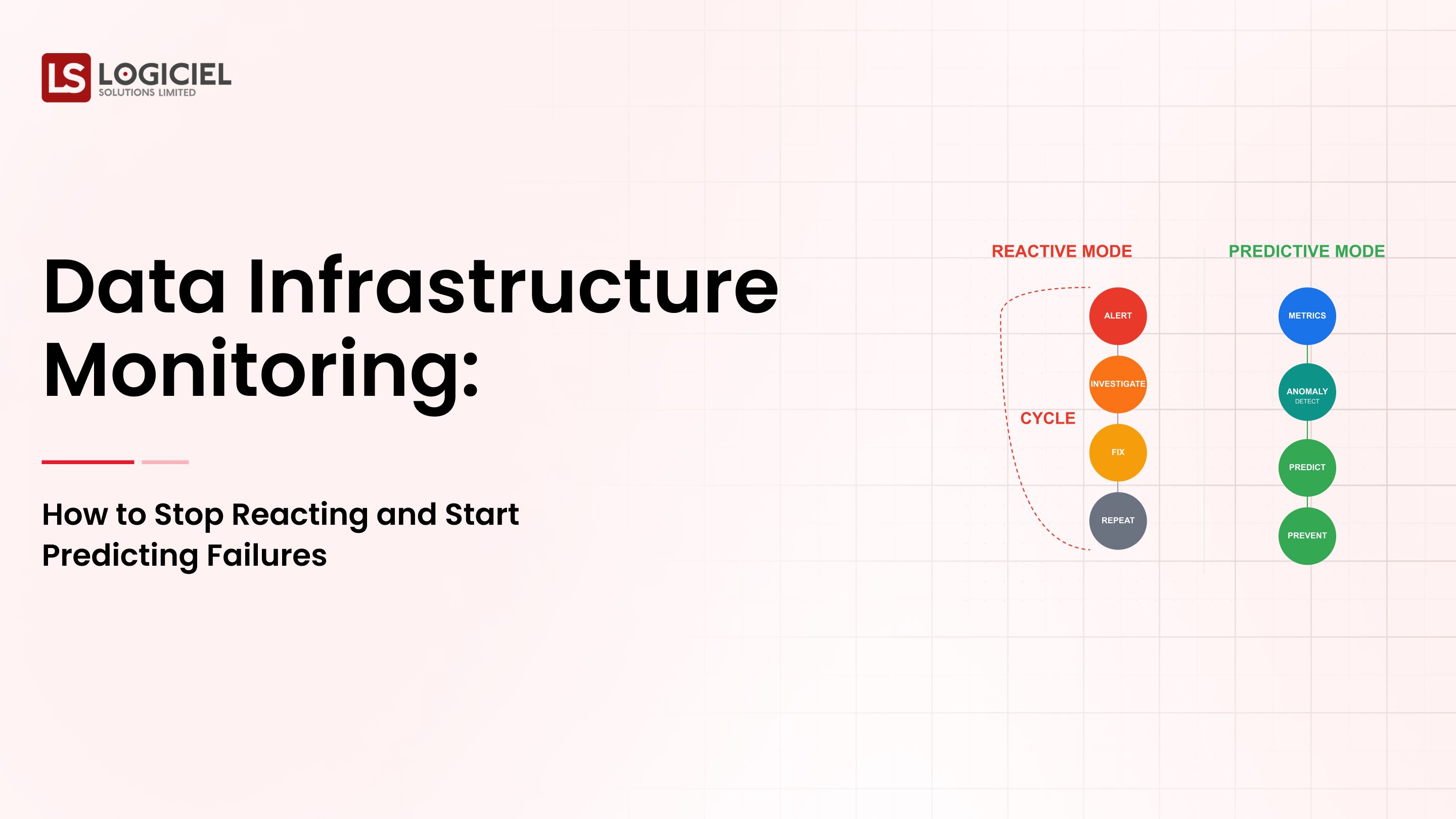

Every data team has experienced this same fate at some point.

As your pipelines grow, so do their dependencies. As the number of moving parts multiplies, troubleshooting failures becomes much harder, and eventually your SLAs start to slip.

What started as three or four scripts has turned into a web of cron jobs, retries, and fragile workflows.

This is the stage of pipeline development at which data orchestration needs to become part of the conversation.

Once you recognize that orchestration is necessary, another question arises.

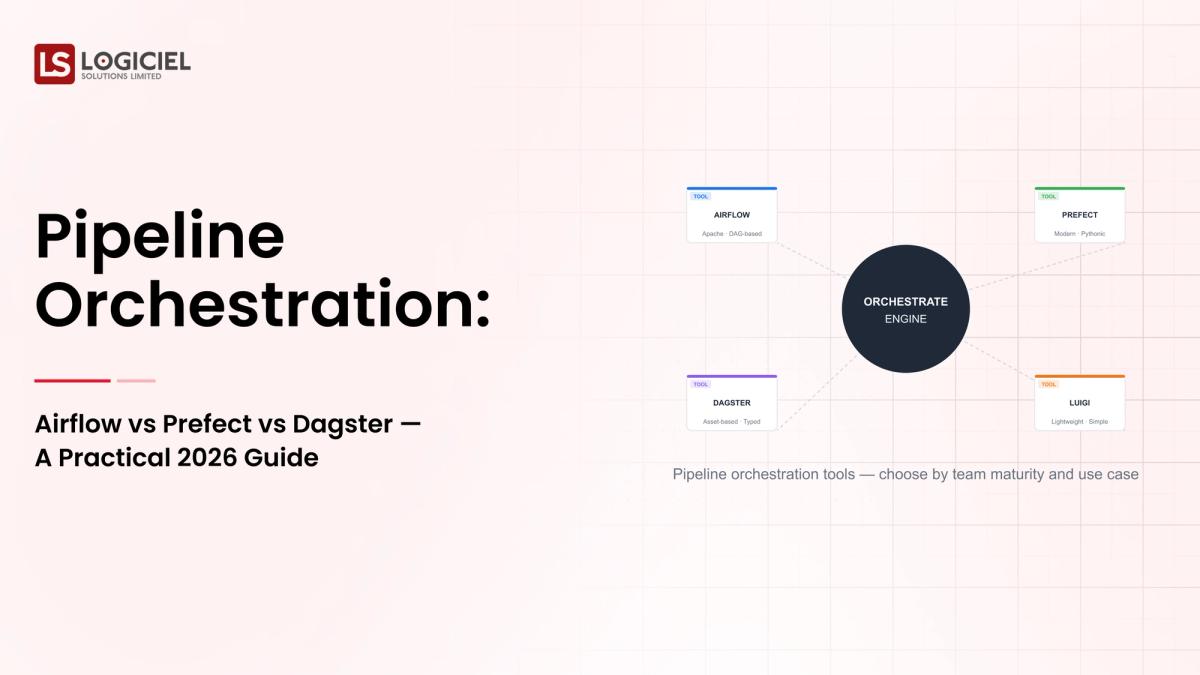

Which tool should you use for orchestration: Airflow, Prefect, or Dagster?

For a Staff or Principal Engineer, this is not simply a tooling decision but an architectural one that affects:

- Reliability

- Developer productivity

- Observability

- Scalability for future growth

This guide goes beyond high-level comparisons and gives you an actionable decision-making framework for 2026.

What is Data Orchestration?

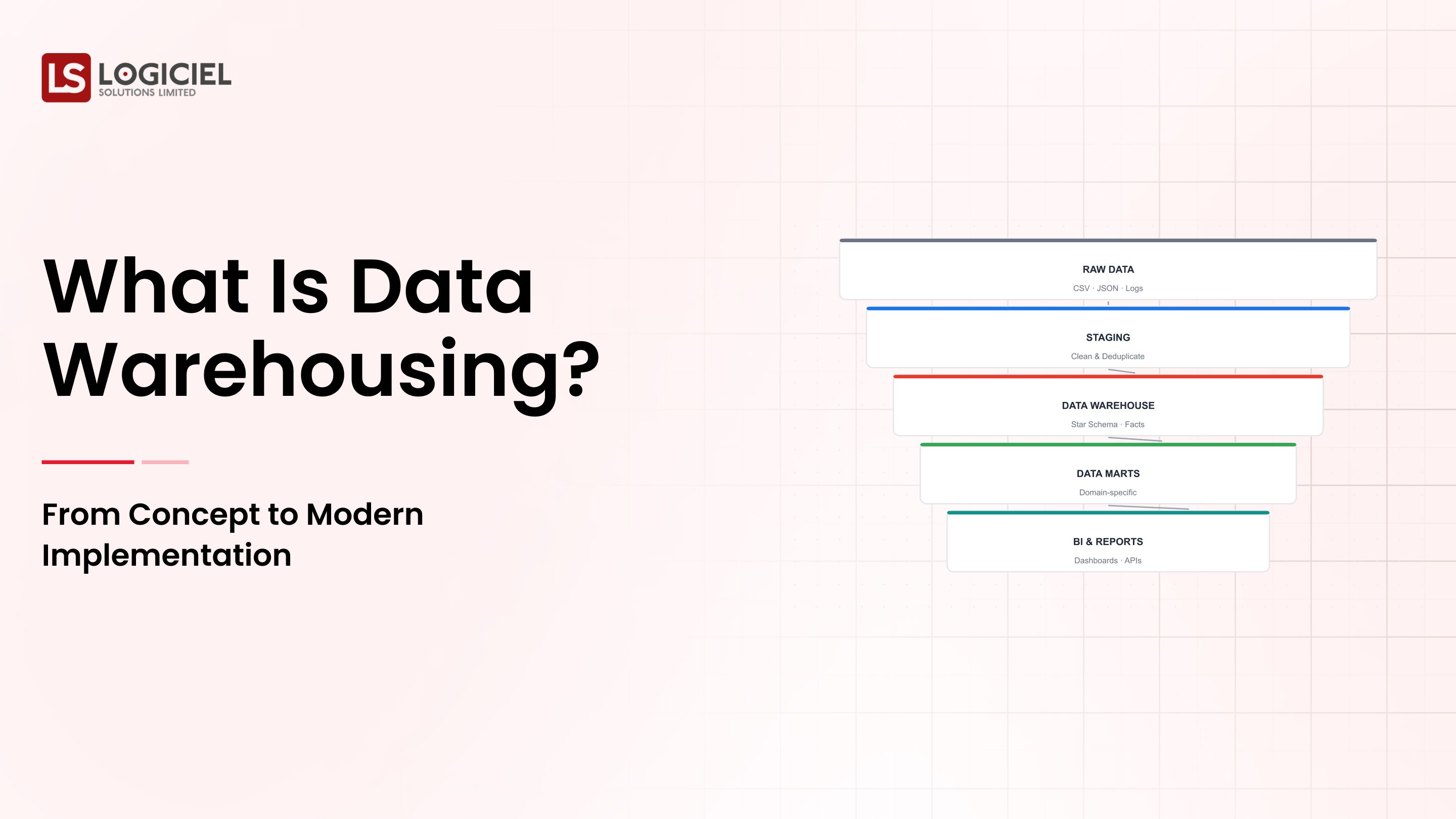

At its core, data orchestration is the coordination, scheduling, and management of data flows between applications and systems.

Basic Definition

Data orchestration is responsible for making sure:

- Tasks run in the correct sequence

- All dependencies are honored

- Failures are handled appropriately

Essential Elements of Data Orchestration

Most current data orchestration solutions consist of:

- Data workflow definitions (DAGs or graphs)

- Job scheduling and job triggering

- Retries and graceful error handling

- Visibility into job executions via logs

- Dependency management

Importance of Data Orchestration

Without orchestration:

- Pipelines break silently, with no indication of why

- Task dependencies are unclear

- Debugging is difficult or impossible

Key Insight

Data orchestration is NOT just about the scheduling of jobs.

Rather, it is focused on managing complexity at scale.

How Data Orchestration Functions

Step-by-Step Data Orchestration Workflow:

- Define your workflows as a DAG

- Establish dependencies between all your tasks

- Schedule or trigger the running of your tasks

- Monitor the state of the execution

- Handle failures and retry

Example:

A simple pipeline:

- Extract Data

- Transform Data

- Load Data to a Warehouse

The orchestrator ensures that the first task (extract) runs before the second (transform), and that the third task (load) executes only after the second has completed successfully.
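The ordering guarantee described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not how Airflow, Prefect, or Dagster are implemented: `run_pipeline`, the task names, and the lambda stand-ins for real extract, transform, and load steps are all hypothetical.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies):
    """Run tasks in dependency order; skip anything downstream of a failure.

    tasks: dict mapping task name -> zero-argument callable
    dependencies: dict mapping task name -> set of upstream task names
    """
    status = {}
    # static_order() yields each task only after all of its upstream tasks.
    for name in TopologicalSorter(dependencies).static_order():
        upstream = dependencies.get(name, set())
        if any(status[u] != "success" for u in upstream):
            status[name] = "skipped"
            continue
        try:
            tasks[name]()
            status[name] = "success"
        except Exception:
            status[name] = "failed"
    return status


# Illustrative stand-ins for real extract/transform/load steps.
executed = []
tasks = {
    "extract": lambda: executed.append("extract"),
    "transform": lambda: executed.append("transform"),
    "load": lambda: executed.append("load"),
}
dependencies = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}

status = run_pipeline(tasks, dependencies)
```

If `transform` raised an exception here, `load` would be marked as skipped rather than run against incomplete data, which is the behavior a cron-based setup typically lacks.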

Key Insight

The orchestrator is the control layer in your data system.

Benefits of Data Orchestration

1. Reliability

Ensures that pipelines run consistently

2. Scalability

Scales as your workloads grow

3. Observability

Shows you when a pipeline run has failed

4. Automation

Reduces required human intervention

5. Interoperability

Integrates with:

- Data Warehouses

- APIs

- Streaming Systems

Key Insight

Without orchestration, scaling and managing data systems becomes impractical.

Overview of Market Leaders

When evaluating data orchestration tools, three stand above the others:

- Apache Airflow

- Prefect

- Dagster

Apache Airflow

Apache Airflow is an open-source tool and the most widely adopted data orchestrator on the market today.

Strengths

- Massive ecosystem

- Industry-ready

- Community-driven support

Weaknesses

- Complex installation

- Limited logging and tracking without additional software

- Workflows are tied to a DAG-based architecture

Best Used For

- Legacy technology stacks

- Large enterprises that already run Airflow

Key Takeaway

Airflow is robust but can be complicated to operate.

Prefect

Prefect is a modern orchestration tool designed to make workflow management easier.

Strengths

- Pure Python interface

- Workflows can be created dynamically

- Strong cloud-based offering

Weaknesses

- Smaller ecosystem than Airflow

- Less "enterprise-ready" for some use cases

Best Used For

- Teams that prioritize developer experience

- Cloud-based environments

Key Takeaway

Prefect emphasizes simplicity and agility.

Dagster

Dagster is a newer orchestration solution that emphasizes data assets and the ability to monitor and manage them.

Strengths

- Works with assets, not just processes

- Excellent visibility

- Insight into how data is used is built into pipeline execution

Weaknesses

- Takes time to learn

- Small ecosystem

Best Used For

- Teams that manage large data projects

- Teams building sophisticated asset-based pipelines

Key Takeaway

Dagster changes the way you think about orchestration by putting the focus on data.

Airflow vs. Prefect vs. Dagster: Comparison Overview

1. Architecture

- Airflow: DAG-based

- Prefect: Flow-based

- Dagster: Asset-based
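To make the asset-based idea more concrete, here is a small standard-library sketch in which each function declares the data it produces and names its upstream data as parameters, so the dependency graph is derived from the data rather than wired up by hand. The `asset` decorator, `materialize` function, and sample rows are hypothetical illustrations and are not Dagster's actual API.

```python
import inspect
from graphlib import TopologicalSorter

ASSETS = {}  # registry: asset name -> function that produces it


def asset(fn):
    """Register a function as an asset; its parameter names are its upstream assets."""
    ASSETS[fn.__name__] = fn
    return fn


@asset
def raw_orders():
    # Illustrative sample data standing in for an extract step.
    return [{"id": 1, "amount": 40}, {"id": 2, "amount": 60}]


@asset
def order_total(raw_orders):
    # Depends on raw_orders simply by naming it as a parameter.
    return sum(row["amount"] for row in raw_orders)


def materialize():
    """Build the graph from function signatures and compute every asset in order."""
    graph = {name: set(inspect.signature(fn).parameters) for name, fn in ASSETS.items()}
    values = {}
    for name in TopologicalSorter(graph).static_order():
        kwargs = {dep: values[dep] for dep in graph[name]}
        values[name] = ASSETS[name](**kwargs)
    return values


values = materialize()
```

In a task-based design you would instead declare that one step runs after another; here the ordering falls out of which data each function consumes.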

2. Developer Experience

- Airflow: Medium

- Prefect: High

- Dagster: High (with learning curve)

3. Observability

- Airflow: Average

- Prefect: Improved

- Dagster: Excellent

4. Scalability

All three scale comparably, though each takes a different approach.

5. Flexibility

Prefect and Dagster are more flexible than Airflow.

Key Takeaway

Your choice should be based on architectural philosophy, not feature lists.

Which Tool is Best for Enterprise Data Orchestration?

Decision Criteria

Base your selection on:

- Team competencies

- Existing infrastructure

- Scalability requirements

- Required level of observability

In general:

- Airflow = stability + ecosystem

- Prefect = simplicity + flexibility

- Dagster = modern data representation/architecture

Key Insight

The best tool is the one that best fits your design principles.

Comparison of Popular Data Orchestration Tools

- Airflow: good for existing ecosystems, but complex to operate

- Prefect: good for rapidly evolving teams, but has a smaller ecosystem

- Dagster: good for data-centric architectural design, but has a steep learning curve

Core Elements of a Modern Data Orchestration System

A modern data orchestration system has the following core components:

- Workflow Engine (executes tasks, manages dependencies)

- Scheduler (triggers workflows)

- State Management (tracks execution)

- Observability Layer (logs, metrics, alerts)

- Integration Layer (connects to external sources)
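As a rough sketch of how these components relate, the following standard-library example gives each one a minimal counterpart: `is_due` plays the scheduler, `tick` the workflow engine, the `history` list the state store, and the `logging` calls the observability layer. The `run` callable stands in for the integration layer. All names are hypothetical and the design is deliberately simplified.

```python
import logging
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Callable, List, Optional, Tuple

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("orchestrator")  # observability layer


@dataclass
class Workflow:
    name: str
    interval: timedelta
    run: Callable[[], None]  # integration layer: calls out to external systems
    last_run: Optional[datetime] = None
    history: List[Tuple[datetime, str]] = field(default_factory=list)  # state


def is_due(wf: Workflow, now: datetime) -> bool:
    """Scheduler: due if the workflow never ran or its interval has elapsed."""
    return wf.last_run is None or now - wf.last_run >= wf.interval


def tick(workflows: List[Workflow], now: datetime) -> None:
    """Workflow engine: run every due workflow once and record the outcome."""
    for wf in workflows:
        if not is_due(wf, now):
            continue
        try:
            wf.run()
            state = "success"
        except Exception:
            log.exception("workflow %s failed", wf.name)
            state = "failed"
        wf.last_run = now
        wf.history.append((now, state))
        log.info("workflow %s finished with state %s", wf.name, state)
```

Passing `now` in explicitly, rather than reading the clock inside `tick`, keeps the scheduling logic easy to test.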

Key Insight

Orchestration is more than just a tool; it is a system.

Automating Data Workflows Across Multiple Platforms

Modern pipelines often span multiple platforms. Key challenges include:

- Diverse APIs

- Different data types

- Latency

Solutions include:

- A single orchestration layer for management

- Standardized workflows

- Retry capability
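Retry capability is often implemented with exponential backoff, so that a temporarily unavailable API is not hammered with immediate repeat calls. A minimal sketch follows, assuming a hypothetical `retry` helper; the default attempt count and delay are illustrative, not recommendations.

```python
import time


def retry(fn, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fn until it succeeds or attempts run out, doubling the wait each time."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: surface the original error
            sleep(base_delay * 2 ** (attempt - 1))
```

The `sleep` parameter is injectable so the backoff schedule can be verified in tests without actually waiting.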

Key Insight

For automation to succeed, it must be applied uniformly across all platforms.

Best Data Orchestration Tools for Cloud Environments

Cloud-native orchestration is becoming the standard.

Key features you should look for include:

- Managed offerings

- Automatic scaling

- Integration with cloud storage and compute

Popular Choices include:

- Prefect Cloud

- Managed Airflow

- Dagster Cloud

Key Insight

Orchestration in the cloud can reduce your operational costs.

The advantages of Automated Data Pipelines:

- Decreased Time to Deliver Data

- Fewer Errors

- More Reliability

- Better Resource Utilization

Takeaway

Automation is essential for building scalable data platforms.

Typical Pricing Models for Data Orchestration Services:

Cost is a key consideration when an organization evaluates an enterprise data orchestration solution. The common pricing models are:

- Open-source (e.g., Airflow)

- Subscription (e.g., Prefect Cloud, Dagster Cloud)

- Usage-based

Cost Components

- Infrastructure

- Compute usage

- Team overhead

Important Consideration

The most significant cost of a data orchestration solution is operational complexity, not the tooling itself.

Common Data Orchestration Mistakes:

- Treating Orchestration as Mere Scheduling

- Not Considering Observability

- Overengineering Pipelines

- Selecting Tools Based on Trends

- Lacking Standards

The Role of Data Orchestration in the Future of Data

Emerging trends in data orchestration:

- Asset-based orchestration

- Optimizing pipelines using AI

- Event-driven architectures

What this Means

In the future, orchestration will evolve from executing workflows to being aware of the data those workflows produce.

Strategic Insight

The future belongs to data-aware orchestration systems.

Conclusion: How To Choose The Right Tool in 2026

Airflow, Prefect and Dagster represent three different approaches:

- Airflow --> A tried-and-true solution

- Prefect --> A flexible and developer-friendly solution

- Dagster --> A modern, data-aware orchestration solution

For a Principal Engineer, the decision is not only about tool features but also about:

- Your team's approach to building systems

- The flow of your data

- The evolution of your platform

Point of View From Logiciel

Logiciel Solutions assists organizations with the design and implementation of modern, scalable data orchestration systems. Our focus is on:

- A platform-first architecture

- Observability-driven workflows

- AI-enabled optimization

When pipelines become difficult to manage, you need to rethink orchestration, moving it from just tooling to the foundational layer of your data platform.

Frequently Asked Questions

What is a data orchestration tool?

A data orchestration tool is a software platform that manages the workflows, dependencies, and execution of data pipelines.

How are Data Orchestration and ETL different?

Data orchestration manages the workflow, while ETL focuses on extracting, transforming, and loading the data itself.

Is Kafka a data orchestration tool?

No, it isn't. Kafka is a streaming platform, not an orchestration tool.

What is a data orchestration platform?

A data orchestration platform is a system that coordinates workflows across multiple systems.

Which tool is best to use?

The best tool depends on your architecture, your team, and your scale.