Your data pipelines are operating as expected, and dashboards are producing live data, yet something is not functioning properly.

Stakeholders often distrust the data behind real-time dashboards, engineers are constantly putting out fires, and every new feature introduces new instability.

If your organisation shows these symptoms, it is probably missing best practices for intelligent data infrastructure.

As a Head or VP of Data, you will learn from this article how to:

- Differentiate between high-performing data teams and reactive data teams

- Identify key, non-negotiable best practices for modern intelligent data infrastructure

- Recognise the silent anti-patterns that are degrading your system's reliability

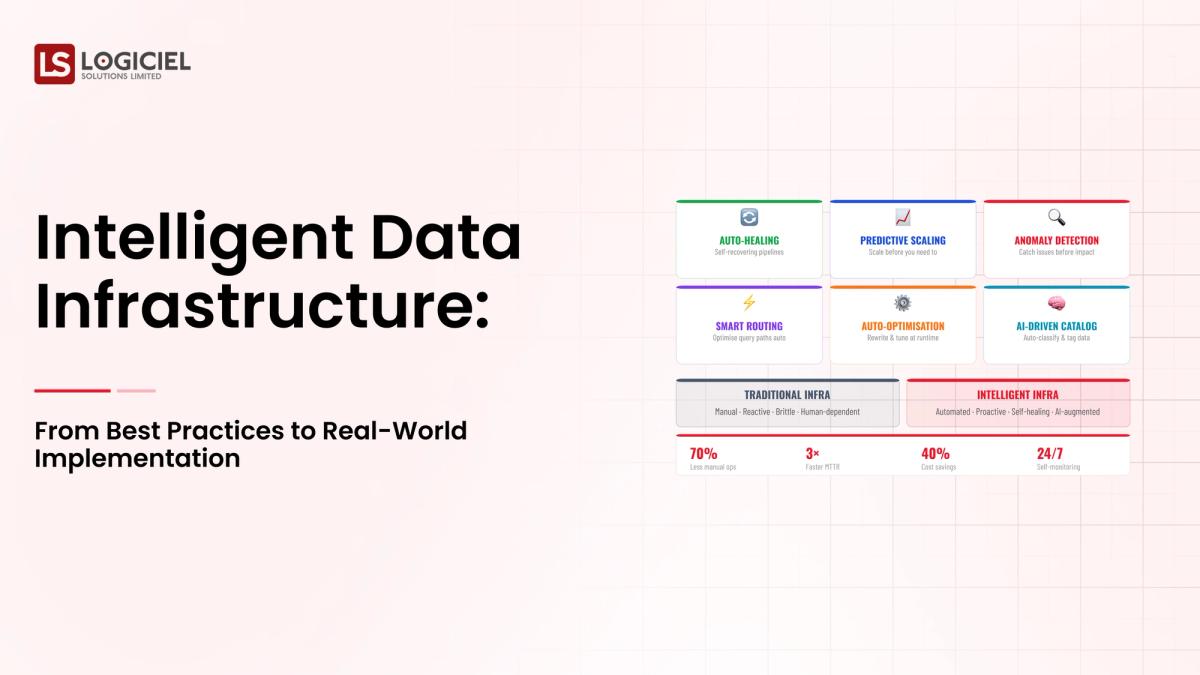

As we move into 2026, data infrastructure is no longer only about scale; it must also deliver intelligence, resilience, and adaptability.

The State of Intelligent Data Infrastructure in 2026 and Why Best Practices Matter Now

The data infrastructure landscape looks very different from what it was before.

What Has Changed

Modern data systems are:

- Distributed across many platforms

- Supporting real-time workloads (e.g. AI)

- Handling huge amounts of data

The long-term effects of these changes have been:

- Greater dependence on system performance

- More points of failure

- Higher expectations from users and systems

The Gap Between Data Teams

How High-Performing Teams Work

- Build for reliability from the first day

- Prioritise observability

- Automate their critical processes

How Reactive Teams Work

- React to incidents

- Rely on manual checks

- Accumulate technical debt

Important Data Point

Industry research indicates that organisations that invest in observability and automation reduce the time to resolve issues by 60% or more.

The Importance of Best Practices

Without best practices and an organised, intelligent data model, the result is:

- Fragile systems

- Slow debugging

- Reduced trust

With best practices and an organised, intelligent data model, the result is:

- Reliable and scalable systems

- Faster team movement

- An opportunity for data to be leveraged as a competitive advantage

Best Practices are Non-Negotiable: All Teams Must Get These Fundamentals Down First

Before any advanced strategy can be implemented, teams must establish core fundamentals.

1. Ownership

All datasets and pipelines must have a defined owner as well as documented responsibilities related to that ownership to help ensure accountability.

2. Observability From Day 1

Observability must include pipeline health, freshness of data, and detection of error conditions. Adding these capabilities after the fact will add cost and create complexity.

3. Infrastructure as Code

Treat infrastructure as software. Keep configuration under version control and make environments reproducible to ensure consistency and reliability.

4. Defined Service Level Agreements

Before anything goes to production, set uptime expectations, define acceptable latency, and establish accuracy thresholds.
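
One way to make such an agreement checkable is to write the thresholds down as data. The sketch below is illustrative only: the `Sla` class, the `sla_breaches` function, and every threshold value are assumptions made for this example, not part of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sla:
    """Hypothetical SLA for one dataset; all thresholds are illustrative."""
    min_uptime_pct: float     # minimum availability, e.g. 99.5
    max_latency_s: float      # acceptable end-to-end latency in seconds
    min_accuracy_pct: float   # share of records that must pass validation


def sla_breaches(sla: Sla, uptime_pct: float, latency_s: float,
                 accuracy_pct: float) -> list[str]:
    """Return a readable list of breached SLA terms (empty if none)."""
    breaches = []
    if uptime_pct < sla.min_uptime_pct:
        breaches.append(f"uptime {uptime_pct}% < {sla.min_uptime_pct}%")
    if latency_s > sla.max_latency_s:
        breaches.append(f"latency {latency_s}s > {sla.max_latency_s}s")
    if accuracy_pct < sla.min_accuracy_pct:
        breaches.append(f"accuracy {accuracy_pct}% < {sla.min_accuracy_pct}%")
    return breaches


# Assumed example values: a 5-minute latency target that is currently missed.
orders_sla = Sla(min_uptime_pct=99.5, max_latency_s=300, min_accuracy_pct=99.0)
print(sla_breaches(orders_sla, uptime_pct=99.9, latency_s=420, accuracy_pct=99.4))
```

Keeping the thresholds in a versioned object like this means the same definition can drive both pre-production review and later alerting.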

KEY INSIGHT:

These fundamentals form the foundation of any data platform; without them, no advanced data platform can succeed.

Practice 1: Design for Failure at the Beginning

Failures in distributed systems are inevitable, so rather than trying to prevent every failure, design systems to fail gracefully.

In practice, this means assuming that components will fail, planning for recovery, and minimising impact.

Key Techniques:

Retry and Fallback

Retry automatically on failure, and use fallback mechanisms when necessary.
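
A minimal sketch of this pattern, assuming the primary and fallback are plain callables. The name `retry_with_fallback` and its parameters are hypothetical, and production code would normally catch only errors known to be transient rather than every `Exception`.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_fallback(primary: Callable[[], T], fallback: Callable[[], T],
                        attempts: int = 3, base_delay_s: float = 0.5) -> T:
    """Call `primary` up to `attempts` times, then use `fallback`."""
    for attempt in range(attempts):
        try:
            return primary()
        except Exception:
            # Exponential backoff: base, 2*base, 4*base, ...
            # No sleep after the final failed attempt.
            if attempt < attempts - 1:
                time.sleep(base_delay_s * 2 ** attempt)
    return fallback()


def fetch_live_rates():
    # Hypothetical primary source that is currently unavailable.
    raise ConnectionError("rates service unreachable")


def fetch_cached_rates():
    # Hypothetical fallback: the last known good values.
    return {"EUR": 1.0, "source": "cache"}


print(retry_with_fallback(fetch_live_rates, fetch_cached_rates, base_delay_s=0))
```

The fallback returns slightly stale data instead of failing the whole run, which is usually the better trade for dashboards as long as the staleness is visible to consumers.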

Dead-Letter Queues

Capture bad records without letting them stop the pipeline.
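
As an illustration, the sketch below routes failing records to an in-memory list standing in for a dead-letter queue. The function names and the record shape are assumptions for this example; a real pipeline would typically write dead letters to a durable queue or table.

```python
from typing import Any, Callable, Iterable


def process_with_dlq(records: Iterable[dict[str, Any]],
                     transform: Callable[[dict[str, Any]], dict[str, Any]]):
    """Transform each record; route failures to a dead-letter list."""
    processed, dead_letters = [], []
    for record in records:
        try:
            processed.append(transform(record))
        except (KeyError, TypeError, ValueError) as exc:
            # Keep the original record and the reason so it can be replayed.
            dead_letters.append({"record": record, "error": repr(exc)})
    return processed, dead_letters


def to_cents(record):
    # Hypothetical transform: raises on a missing or non-numeric amount.
    return {"id": record["id"], "cents": round(float(record["amount"]) * 100)}


rows = [{"id": 1, "amount": "12.50"}, {"id": 2, "amount": "n/a"}, {"id": 3}]
ok, dlq = process_with_dlq(rows, to_cents)
print(len(ok), "processed;", len(dlq), "dead-lettered")
```

The good record still flows through, while the two bad ones are kept with enough context to be inspected and replayed later.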

Circuit Breakers

Stop failures from cascading and protect downstream systems.
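
The following is a simplified sketch of a circuit breaker, not a production implementation. The class name, the thresholds, and the injectable `clock` parameter are assumptions chosen to keep the example small and testable.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_s=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout_s:
                # Fail fast instead of hitting a struggling dependency.
                raise CircuitOpenError("downstream call skipped")
            # Timeout elapsed: half-open, let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if (self._opened_at is not None
                    or self._failures >= self.failure_threshold):
                self._opened_at = self._clock()  # open or re-open
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller wraps each downstream request, for example `breaker.call(load_batch, batch)` where `load_batch` is whatever function talks to the dependency, and treats `CircuitOpenError` as a signal to queue or skip work rather than retry immediately.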

Testing for Failure

Simulate events such as:

- Pipeline interruptions

- Data corruption

- Service outages

Create a response plan for each of these events.

Alerting Strategy

Alert on SLA breaches and data anomalies, not only on system failures.
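
A data anomaly can occur even when every job reports success. The sketch below shows one simple, assumed approach: comparing today's row count with recent history using a z-score. The function name, the threshold of 3, and the sample numbers are all illustrative.

```python
from statistics import mean, stdev


def row_count_alerts(history: list[int], today: int,
                     z_threshold: float = 3.0) -> list[str]:
    """Flag today's row count if it deviates strongly from recent history."""
    if len(history) < 2:
        return []  # not enough data to estimate the spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # History is constant, so any change at all is worth a look.
        return [] if today == mu else [f"row count {today} differs from {mu}"]
    z = (today - mu) / sigma
    if abs(z) > z_threshold:
        return [f"row count anomaly: {today} rows, z-score {z:.1f}"]
    return []


# Assumed sample: the load "succeeded" but delivered far fewer rows than usual.
recent = [1000, 1020, 980, 1010, 990]
print(row_count_alerts(recent, today=400))
```

A z-score is only one option; seasonal data usually needs a comparison against the same weekday or hour rather than a flat recent average.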

Insight #1

Designing for failure improves data reliability and reduces downtime.

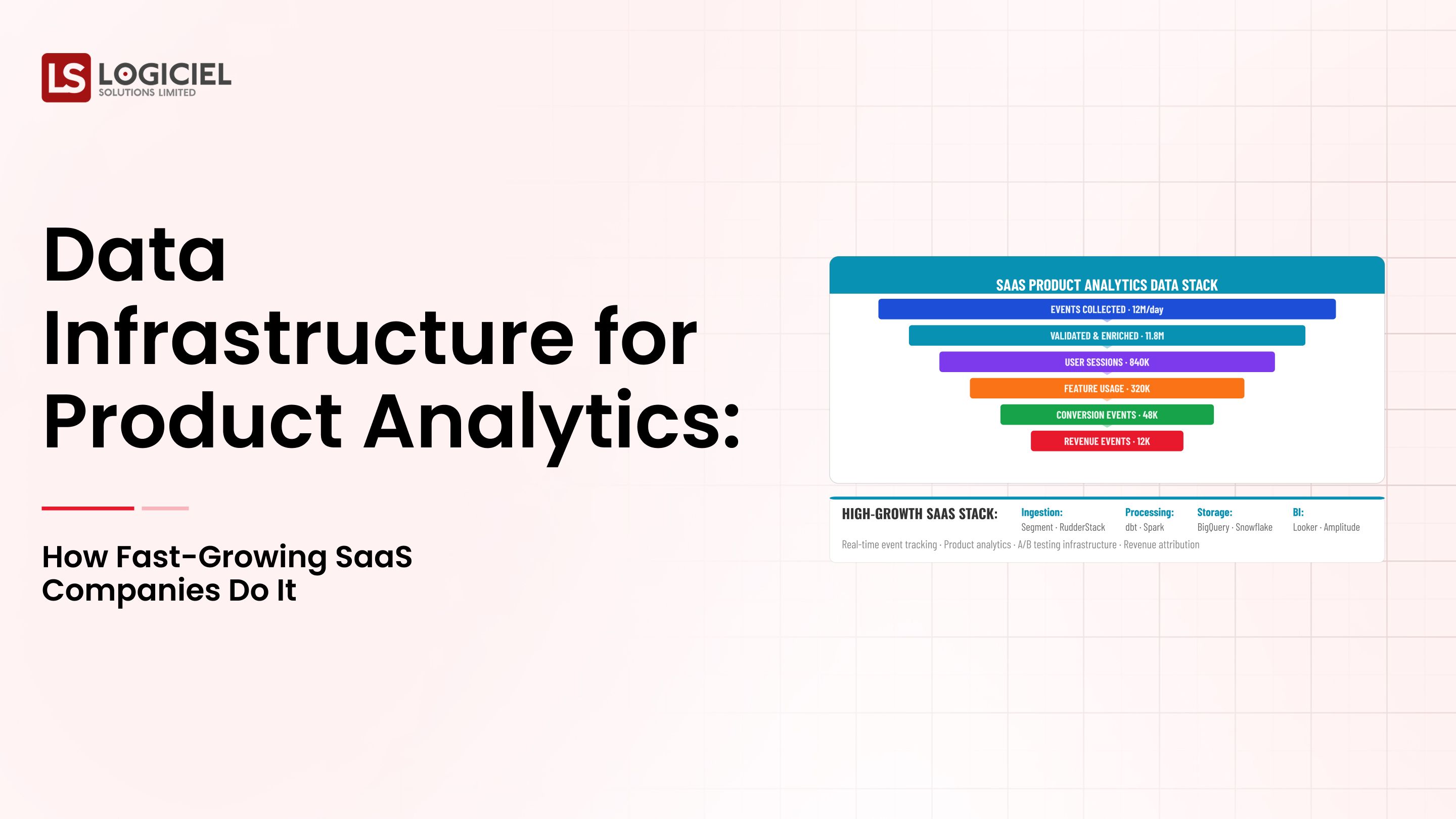

Practice 2: Quality Assurance via Automation

Automating manual checks is critical to managing data quality at scale.

Shift data quality left: catch issues at ingestion and before transformation so that they do not turn into downstream failures.

Automated Quality Assurance Checks:

- Schema

- Nulls

- Ranges
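
The three checks above can be sketched in plain Python as follows. The `check_rows` function, the column names, and the limits are assumptions for illustration; dedicated data quality tools offer the same kinds of rules with far more features.

```python
def check_rows(rows, schema, required, ranges):
    """Return (row_index, problem) tuples; an empty list means all rows pass.

    schema:   column -> expected Python type
    required: columns that must not be None
    ranges:   column -> (min, max), inclusive
    """
    problems = []
    for i, row in enumerate(rows):
        # Schema check: column present and of the expected type.
        for col, expected in schema.items():
            if col not in row:
                problems.append((i, f"missing column {col}"))
            elif row[col] is not None and not isinstance(row[col], expected):
                problems.append((i, f"{col} is not {expected.__name__}"))
        # Null check on required columns.
        for col in required:
            if row.get(col) is None:
                problems.append((i, f"{col} is null"))
        # Range check on numeric columns.
        for col, (lo, hi) in ranges.items():
            value = row.get(col)
            if isinstance(value, (int, float)) and not lo <= value <= hi:
                problems.append((i, f"{col}={value} outside [{lo}, {hi}]"))
    return problems


rows = [
    {"id": 1, "amount": 250.0, "quantity": 3},
    {"id": 2, "amount": None, "quantity": 40},
    {"id": "3", "amount": 100.0, "quantity": 2},
]
for problem in check_rows(rows, schema={"id": int, "amount": float, "quantity": int},
                          required=["id", "amount"], ranges={"quantity": (0, 20)}):
    print(problem)
```

Running checks like these at ingestion means a bad batch is rejected or quarantined before any transformation depends on it.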

Data Contracts

Data contracts define the following:

- Expected Schema

- Data Format

This keeps data consistent between producers and consumers.

Example, before:

A schema change anywhere upstream results in a silent failure downstream.

Example, after:

- Validation detects the schema change

- Alerts are sent immediately
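
The "after" scenario can be sketched as a comparison between a contract and the schema actually observed. The `CONTRACT` dictionary, the type labels, and the `alert` callback are hypothetical; in practice the alert would go to a paging or chat system rather than `print`.

```python
# Hypothetical contract: column name -> agreed type label.
CONTRACT = {"order_id": "int", "amount": "float", "created_at": "timestamp"}


def schema_drift(contract: dict[str, str], observed: dict[str, str]) -> list[str]:
    """Describe every difference between the contract and the observed schema."""
    drift = []
    for col in sorted(contract.keys() - observed.keys()):
        drift.append(f"missing column: {col}")
    for col in sorted(observed.keys() - contract.keys()):
        drift.append(f"unexpected column: {col}")
    for col in sorted(contract.keys() & observed.keys()):
        if contract[col] != observed[col]:
            drift.append(f"type changed: {col} {contract[col]} -> {observed[col]}")
    return drift


def validate_or_alert(observed: dict[str, str], alert=print) -> bool:
    """Return True if the schema matches; otherwise send one alert."""
    drift = schema_drift(CONTRACT, observed)
    if drift:
        alert("schema contract violated: " + "; ".join(drift))
    return not drift


# An upstream team renamed a column and changed a type without notice.
validate_or_alert({"order_id": "int", "amount": "string", "created": "timestamp"})
```

The value is in where the check runs: placed at the producer's output or the consumer's ingestion step, the same change that used to fail silently now stops the load and names the exact columns involved.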

Logiciel supports this with:

- Automated Quality Assurance Rules

- Continuous Validation

- Real-time Alerts

Insight #2

Automation turns data quality from reactive to proactive.

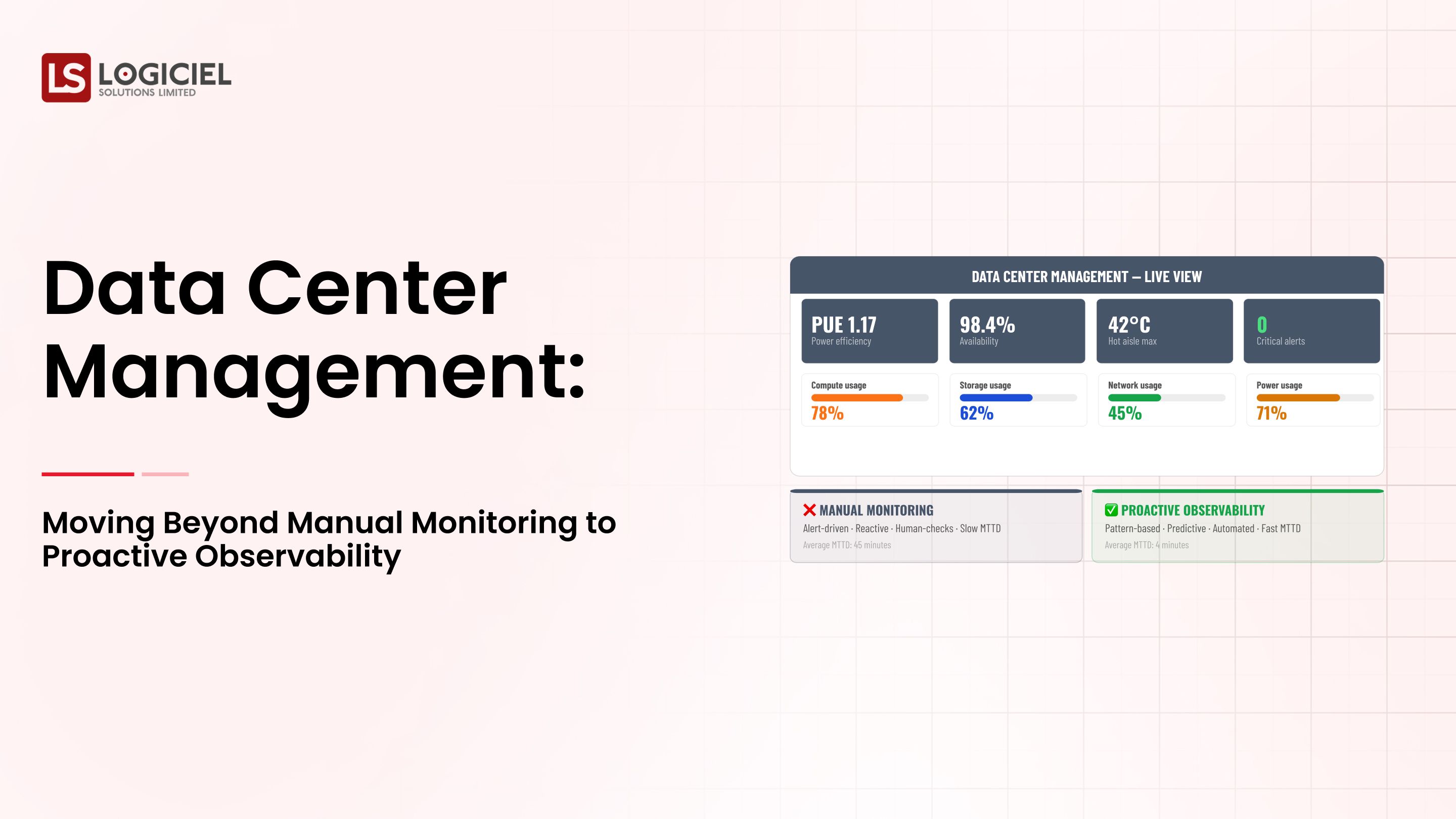

Practice 3: Observability and Explainability of Your Stack

Observability is the bedrock of intelligent systems.

What Observability Includes:

- Pipeline Monitoring

- Freshness of Data

- Error Detection
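
Of these, data freshness is the easiest to sketch. The example below assumes each dataset records the time of its last successful load; the dataset names, the thresholds, and the fixed `now` value are made up for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def stale_datasets(last_loaded: dict[str, datetime],
                   max_age: dict[str, timedelta],
                   now: Optional[datetime] = None) -> list[str]:
    """Return the names of datasets whose last load is older than allowed."""
    now = now or datetime.now(timezone.utc)
    return sorted(name for name, loaded_at in last_loaded.items()
                  if now - loaded_at > max_age[name])


# Hypothetical datasets and thresholds, with a fixed "now" for repeatability.
now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
last_loaded = {
    "orders": now - timedelta(minutes=20),
    "fx_rates": now - timedelta(hours=30),
}
max_age = {"orders": timedelta(hours=1), "fx_rates": timedelta(hours=24)}
print(stale_datasets(last_loaded, max_age, now=now))
```

Each dataset gets its own threshold because "fresh" means different things for a streaming table and a daily reference feed.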

The Role of Lineage

Lineage traces data from source to destination. That traceability makes data dependencies visible and enables faster root cause analysis (RCA).
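
To show how lineage shortens an investigation, the sketch below stores lineage as a small graph and walks it from a broken report back to its sources. The dataset names and the `UPSTREAM` mapping are invented for this example; real lineage is usually collected automatically by the orchestration or catalogue layer.

```python
from collections import deque

# Hypothetical lineage: each dataset maps to the datasets it reads from.
UPSTREAM = {
    "revenue_dashboard": ["daily_revenue"],
    "daily_revenue": ["orders_clean", "fx_rates"],
    "orders_clean": ["orders_raw"],
}


def upstream_of(dataset, upstream=UPSTREAM):
    """Breadth-first walk from a dataset back to all of its ancestors."""
    seen, queue = [], deque([dataset])
    while queue:
        for parent in upstream.get(queue.popleft(), []):
            if parent not in seen:  # also guards against cycles
                seen.append(parent)
                queue.append(parent)
    return seen


# Nearest dependencies come first, which is a sensible order to inspect them.
print(upstream_of("revenue_dashboard"))
```

When a dashboard number looks wrong, this list is the set of places where the cause can be, which replaces guesswork with a bounded checklist.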

Benefits of Strong Observability within Your Team:

- 60%+ reduction in incident resolution time

- Improved system reliability

- Increased trust in the data

Unify Observability in One Layer:

- Avoid: many individual monitoring tools

- Use: one integrated layer that gives a complete view of pipeline health

Business Impact Through Improved Observability:

- Faster Decision Making

- Decreased Downtime

- Higher Confidence in Data

Insight #3

Observability is a core infrastructure capability, not just a feature.

Anti-Patterns to Avoid: Factors That Erode Reliability

Even if you’ve implemented all best practices for your system, there are still some patterns that will cause you trouble.

1. Large, Complex Pipelines

Large, complex pipelines are difficult to debug and can lead to more extensive failures than smaller, simpler pipelines.

2. Manual Data Validation

The biggest problem with manual data validation is that it does not scale. It is also more error-prone than automated validation.

3. Too Many Tools

Too many tools make solutions more complicated and reduce transparency across the ecosystem.

4. Reactive Investment

Waiting until an incident occurs before investing in your system raises the total cost of the infrastructure and slows your project down.

Key Takeaway

Avoiding anti-patterns is just as important as implementing best practices.

Final Thought

Building intelligent systems takes more than a set of techniques; it takes discipline and design.

Key Points of Best Practices:

- Best practices are the foundation; an intelligent system cannot be built without them.

- Best practices keep systems manageable instead of letting them drift into chaos.

- Automation and observability are what make a system proactive rather than merely reactive.

- Anti-patterns compound quickly and become expensive over time.

This is not something you can do once and forget about; it is an ongoing process.

Implemented properly, intelligent infrastructure delivers:

- Increased reliability

- Accelerated innovation

- Increased trust in data

To do this, call Logiciel Solutions for a consultation on how to rebuild your infrastructure.

Logiciel Solutions helps organisations build AI-first intelligent data systems that scale effectively.

We work with you through:

- Technical implementation

- Intelligent automation

- Proven methodology

The goal is to move you from reactive infrastructure to predictable, high-performing infrastructure.

Frequently Asked Questions

What is intelligent data infrastructure, and how does it relate to my system?

Intelligent data infrastructure is a system built on automation, observability, and redundancy, enabling efficient, reliable, and scalable data operations.

Why are best practices critical to the success of my data infrastructure?

Best practices help ensure that your data infrastructure keeps performing correctly and gives you a reliable foundation to grow from as your data systems evolve.

What is the major mistake people make?

Under-investing in observability and automation, which leaves teams solving problems reactively instead of building processes that catch issues before they become problems.

How does having a defined data lineage help improve my infrastructure?

Data lineage gives visibility into how your data flows, which speeds up debugging and supports compliance.

Where should teams begin to implement best practices?

Begin by establishing ownership, adding automated validation, and improving observability before expanding further across your teams' operations.