Three years ago, your team made a sensible decision: it built batch pipelines.

These pipelines performed admirably: they processed each dataset within a day and refreshed the dashboards at the same time every day. Nobody asked for improvements. They just worked.

Today, that same decision is costing you business.

Your AI models run on stale data, product analytics cannot keep up with user activity, and your development teams spend 30-40% of their sprints fixing batch pipelines that were never designed or built for real-time processing.


In 2023, this has become an everyday reality in data infrastructure and analytics.

If you are a Staff or Principal Engineer, this guide will give you:

- An understanding of how batch processing works and why it falls short

- A clear sense of when and where real-time infrastructure is necessary

- A pragmatic architecture for scalable, dependable data infrastructure

In AI-based systems, latency is not merely a performance issue; it is a business risk.

The Enterprise Data Challenge: Why Modern Data Infrastructure and Analytics Are Fundamentally Different

Today's enterprise data infrastructure and analytics challenges differ fundamentally from those faced by traditional enterprise data systems.

1. Scale as a Complexity Factor

Enterprise data systems must manage:

- Billions of daily transactions

- Many data sources

- Continuous streams of data ingestion

This creates:

- Extremely high throughput requirements

- A growing number of possible points of failure

- Extremely complex dependencies between datasets

2. Real Time as an Expected Norm

Users expect:

- Instant recommendations

- Real-time fraud detection

- Live dashboards

Batch processing introduces latency by definition: new data waits until the next scheduled run before anyone can use it.
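To make that latency concrete, here is a minimal sketch that computes how long an event waits before its results are published under a fixed batch schedule. It assumes runs start at a fixed interval after a first run, include every event that arrived before they start, and take a fixed time to publish; the function name and parameters are illustrative, not taken from any particular scheduler.

```python
from datetime import datetime, timedelta


def batch_staleness(event_time: datetime, first_run: datetime,
                    interval: timedelta, processing: timedelta) -> timedelta:
    """Delay between an event arriving and its results being published.

    Assumes runs start at first_run + k * interval (k = 0, 1, 2, ...), each
    run includes every event that arrived strictly before it started, and
    each run needs `processing` time to publish.
    """
    if event_time < first_run:
        next_run = first_run
    else:
        # timedelta // timedelta gives the number of whole intervals elapsed.
        k = (event_time - first_run) // interval + 1
        next_run = first_run + k * interval
    return (next_run - event_time) + processing


if __name__ == "__main__":
    first_run = datetime(2024, 1, 1, 2, 0)   # hypothetical 02:00 nightly job
    daily, one_hour = timedelta(days=1), timedelta(hours=1)
    # An event just after a run starts waits almost a full day.
    print(batch_staleness(datetime(2024, 1, 1, 2, 5), first_run, daily, one_hour))
    # prints: 1 day, 0:55:00
    # An event just before the next run waits only minutes plus processing.
    print(batch_staleness(datetime(2024, 1, 2, 1, 55), first_run, daily, one_hour))
    # prints: 1:05:00
```

Under these assumptions, worst-case staleness approaches one interval plus processing time, and with evenly spread arrivals the average is about half an interval plus processing. Shortening the interval reduces staleness but never removes it.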

3. The Sensitivity and Criticality of Data

Data has become the engine that drives:

- Financial decision-making

- Customer experiences

- AI-driven predictions

Mistakes and failures are no longer acceptable; there is simply too much at stake.

Where Traditional Systems Fail

The "typical" enterprise stack consists of:

- Batch ingestion of data

- Scheduled data transformations

- Daily data refreshes on dashboards

The problems include:

- Data arrives too late to inform decisions

- Pipelines break silently

- Debugging issues takes too long
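Silent breakage is usually caught by watching outputs rather than job status. A minimal freshness check, assuming you can look up when each table was last successfully loaded (for example from pipeline metadata), might look like this; the table names and SLA values are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


@dataclass
class FreshnessResult:
    table: str
    age: timedelta
    stale: bool


def check_freshness(last_loaded: Dict[str, datetime],
                    max_age: Dict[str, timedelta],
                    now: Optional[datetime] = None) -> List[FreshnessResult]:
    """Compare each table's last successful load against its freshness SLA.

    Tables that have an SLA but no recorded load are reported as stale,
    so a pipeline that never ran cannot pass silently.
    """
    now = now or datetime.now(timezone.utc)
    results = []
    for table, sla in max_age.items():
        loaded = last_loaded.get(table)
        if loaded is None:
            results.append(FreshnessResult(table, timedelta.max, True))
            continue
        age = now - loaded
        results.append(FreshnessResult(table, age, age > sla))
    return results


if __name__ == "__main__":
    now = datetime(2024, 1, 2, 9, 0, tzinfo=timezone.utc)
    loads = {  # hypothetical last-load timestamps
        "orders_daily": datetime(2024, 1, 2, 3, 0, tzinfo=timezone.utc),
        "clickstream_agg": datetime(2024, 1, 1, 3, 0, tzinfo=timezone.utc),
    }
    slas = {  # hypothetical freshness SLAs
        "orders_daily": timedelta(hours=26),
        "clickstream_agg": timedelta(hours=26),
        "billing_events": timedelta(hours=1),
    }
    for r in check_freshness(loads, slas, now):
        if r.stale:
            print(f"ALERT: {r.table} is stale")
```

Running a check like this on a schedule, and alerting on the result, turns a silent failure into a visible one.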

The Stakes

In today's systems:

- A five-minute delay can affect revenue

- Incorrect or bad data damages machine learning models

- Latency erodes user trust

Key Takeaway

Design your data infrastructure and analytics for immediacy, not for eventual consistency.

Regulations & Compliance Issues

Real-time solutions also create new compliance challenges.

Key frameworks:

- SOC 2 or similar assurance frameworks

- General Data Protection Regulation (GDPR) in the EU

- Industry-specific regulatory and compliance requirements

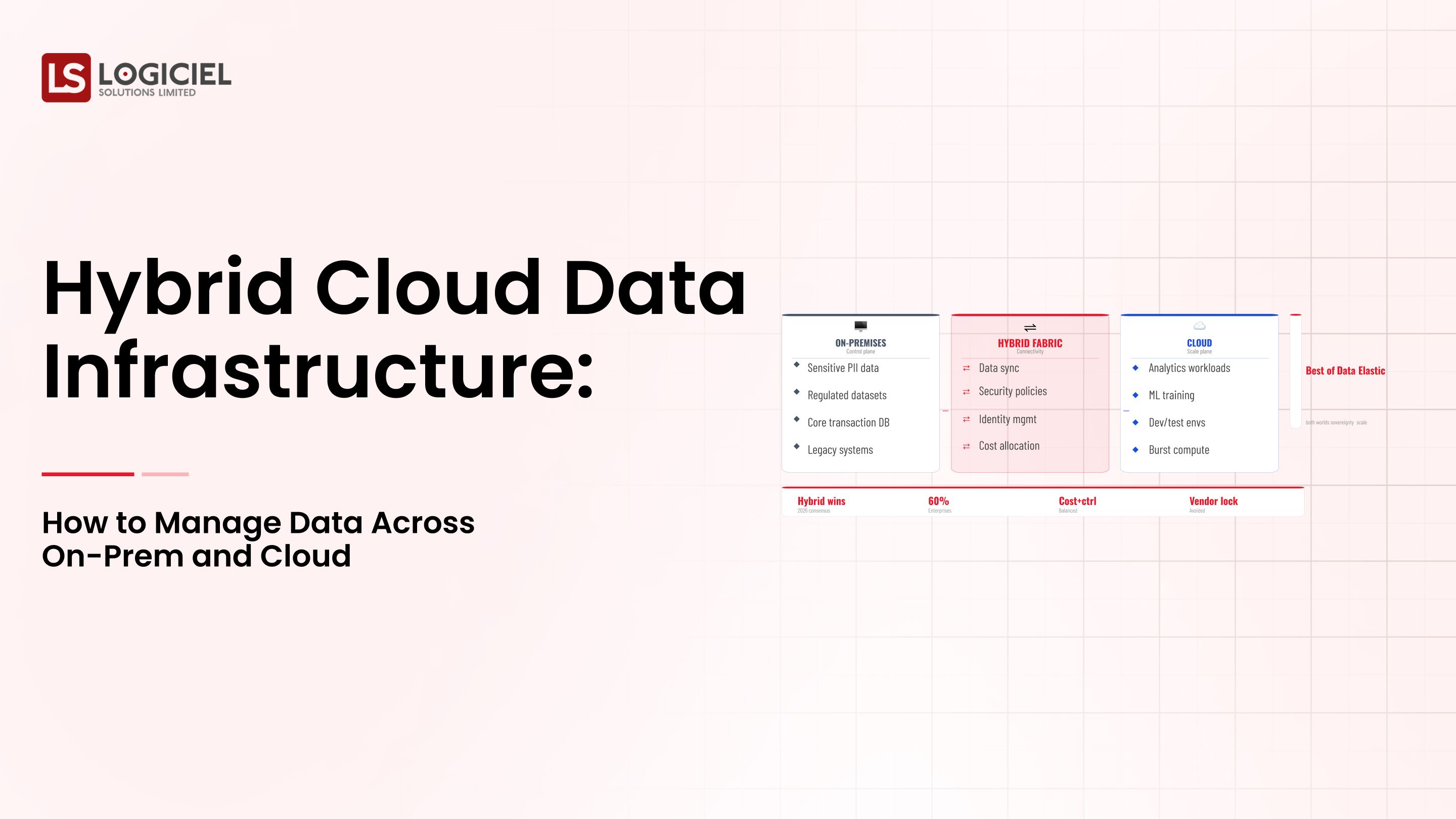

1. Data Residency

Real-time data pipelines must be designed with the following in mind:

- Where the data is stored geographically

- Whether and how data may move across national borders
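Residency rules are easiest to enforce as a check in the write path. The sketch below assumes a hypothetical policy table that maps each record's origin region to the regions where it may be stored, and denies anything the table does not cover; the region codes and rules are placeholders, not legal guidance.

```python
from typing import AbstractSet, Mapping


class ResidencyViolation(Exception):
    """Raised when a record would be stored outside its permitted regions."""


# Hypothetical policy for illustration only: origin region -> regions where
# records from that origin may be stored. Real rules come from legal review.
RESIDENCY_POLICY: Mapping[str, AbstractSet[str]] = {
    "eu": {"eu"},
    "us": {"us", "eu"},
}


def check_transfer(origin: str, destination: str,
                   policy: Mapping[str, AbstractSet[str]] = RESIDENCY_POLICY) -> None:
    """Raise unless `policy` explicitly allows origin -> destination."""
    allowed = policy.get(origin)
    if allowed is None:
        # Deny by default: an origin with no rule is never moved.
        raise ResidencyViolation(f"no residency rule for origin {origin!r}")
    if destination not in allowed:
        raise ResidencyViolation(
            f"data from {origin!r} may not be stored in {destination!r}")


if __name__ == "__main__":
    record = {"customer_id": "c-42", "region": "eu"}  # hypothetical record shape
    check_transfer(record["region"], "eu")             # allowed, returns None
    try:
        check_transfer(record["region"], "us")
    except ResidencyViolation as err:
        print(f"blocked: {err}")
```

Denying by default means a new region cannot leak data before someone has written a rule for it.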

2. Data Retention Policies

Real-time data solutions must be able to:

- Store historical data

- Delete historical data when required
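Both requirements reduce to a filtering rule over stored records. A minimal sketch, assuming hypothetical `event_time` and `subject_id` fields and a set of subjects who have requested deletion, is shown below; a real system must also apply the same rule to replicas, backups, and derived tables.

```python
from datetime import datetime, timedelta, timezone
from typing import AbstractSet, Dict, Iterable, List


def apply_retention(records: Iterable[Dict], retention: timedelta,
                    now: datetime,
                    erased_subjects: AbstractSet[str] = frozenset()) -> List[Dict]:
    """Keep records inside the retention window whose subject has not
    requested deletion. Field names are hypothetical."""
    cutoff = now - retention
    return [
        r for r in records
        if r["event_time"] >= cutoff and r["subject_id"] not in erased_subjects
    ]


if __name__ == "__main__":
    now = datetime(2024, 6, 1, tzinfo=timezone.utc)
    records = [
        {"event_time": datetime(2024, 5, 30, tzinfo=timezone.utc), "subject_id": "u1"},
        {"event_time": datetime(2023, 1, 1, tzinfo=timezone.utc), "subject_id": "u2"},
        {"event_time": datetime(2024, 5, 31, tzinfo=timezone.utc), "subject_id": "u3"},
    ]
    # u2 is past a one-year retention window; u3 has asked to be erased.
    kept = apply_retention(records, timedelta(days=365), now, {"u3"})
    print([r["subject_id"] for r in kept])
```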

3. Audit Trails

Every event in a system must be traceable to:

- Where it originated

- What transformations were applied to it

- How it was used

Traceability & data lineage are paramount.
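One way to make those three questions answerable is to carry lineage with the event itself. The sketch below is an illustration under stated assumptions, not a specific lineage standard: each event keeps its origin and an ordered list of applied steps, and every consumption is appended to an audit log. The `Event`, `apply_step`, and `record_use` names are hypothetical, and the in-memory list stands in for an append-only audit store.

```python
import uuid
from dataclasses import dataclass, field, replace
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Event:
    payload: Dict[str, Any]
    source: str                                   # where the event originated
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    lineage: Tuple[str, ...] = ()                 # transformations applied, in order


def apply_step(event: Event, step_name: str,
               fn: Callable[[Dict[str, Any]], Dict[str, Any]]) -> Event:
    """Run one transformation and record it in the event's lineage.

    The original event is left untouched; the new event keeps its ID.
    """
    return replace(event, payload=fn(dict(event.payload)),
                   lineage=event.lineage + (step_name,))


AUDIT_LOG: List[Dict[str, Any]] = []   # stand-in for an append-only audit store


def record_use(event: Event, consumer: str) -> None:
    """Record which downstream consumer used the event."""
    AUDIT_LOG.append({"event_id": event.event_id, "source": event.source,
                      "lineage": list(event.lineage), "consumer": consumer})


if __name__ == "__main__":
    raw = Event(payload={"amount": "12.50"}, source="billing-api")
    parsed = apply_step(raw, "parse_amount",
                        lambda p: {**p, "amount": float(p["amount"])})
    record_use(parsed, "fraud-model")
    print(AUDIT_LOG[-1])
```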

4. Impact on Architecture

The compliance implications for real-time solutions include, but are not limited to:

- Designing data flows around how data is used and when it is processed

- Demonstrating how data is stored

- Controlling how that data is accessed

Building In vs. Retrofitting

Designing compliance in from the start reduces compliance costs and avoids expensive rework.

Retrofitting compliance later slows development and adds complexity.

Key Takeaway

Real-time systems must be compliant by design, not retrofitted for compliance later.

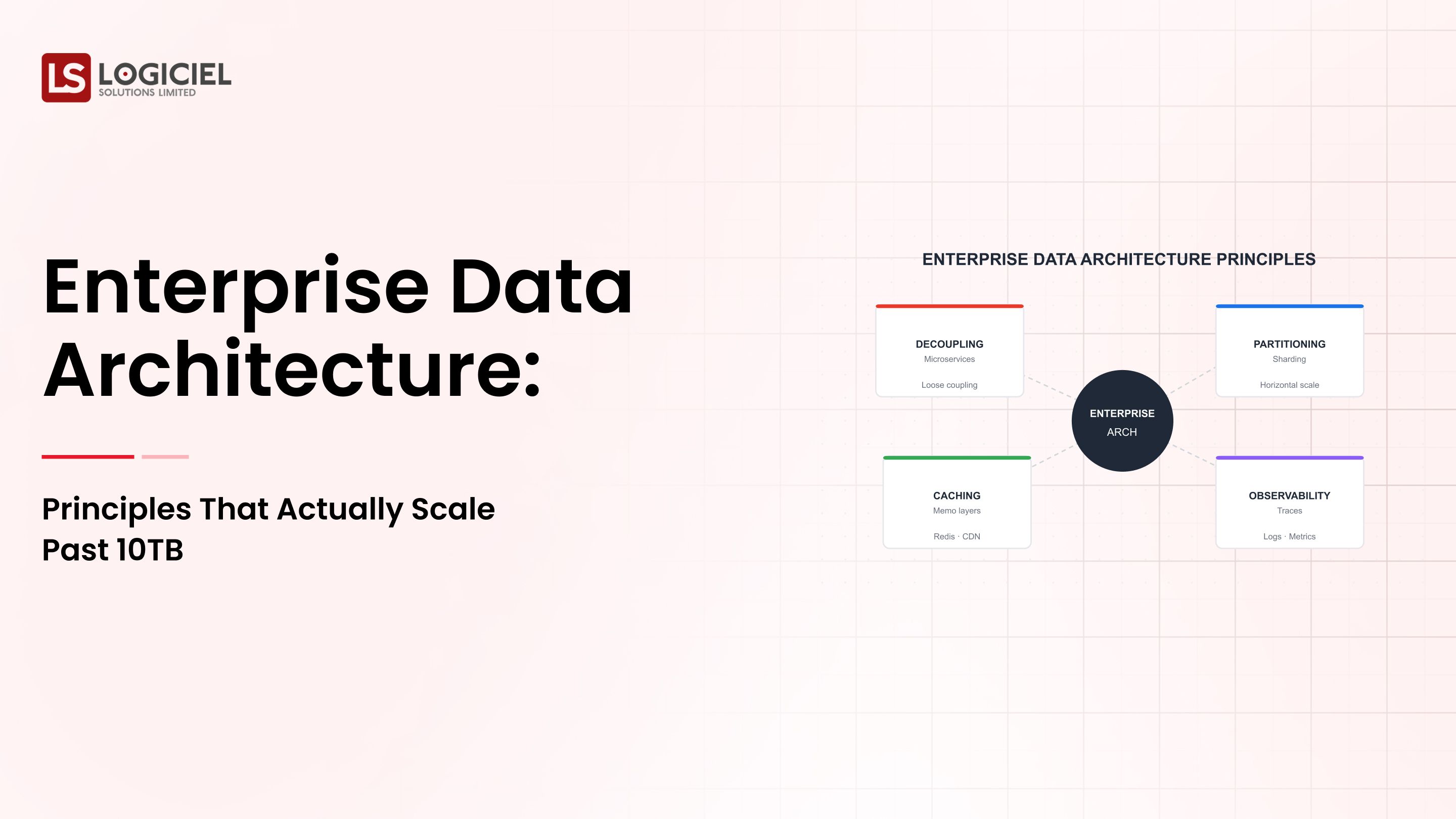

The Scalable Enterprise Data Architecture

Scalable data infrastructure and analytics require a deliberate combination of batch and real-time processing.

Core Layers

Ingestion Layer

- Streaming and batch ingestion

- Event-driven architecture

Storage Layer

- Data lakes and real-time stores

Processing Layer

- Stream processing for real-time workloads

- Batch processing for historical workloads

Orchestration Layer

- Workflow management

- Dependency management

Observability Layer

- Monitoring

- Data quality

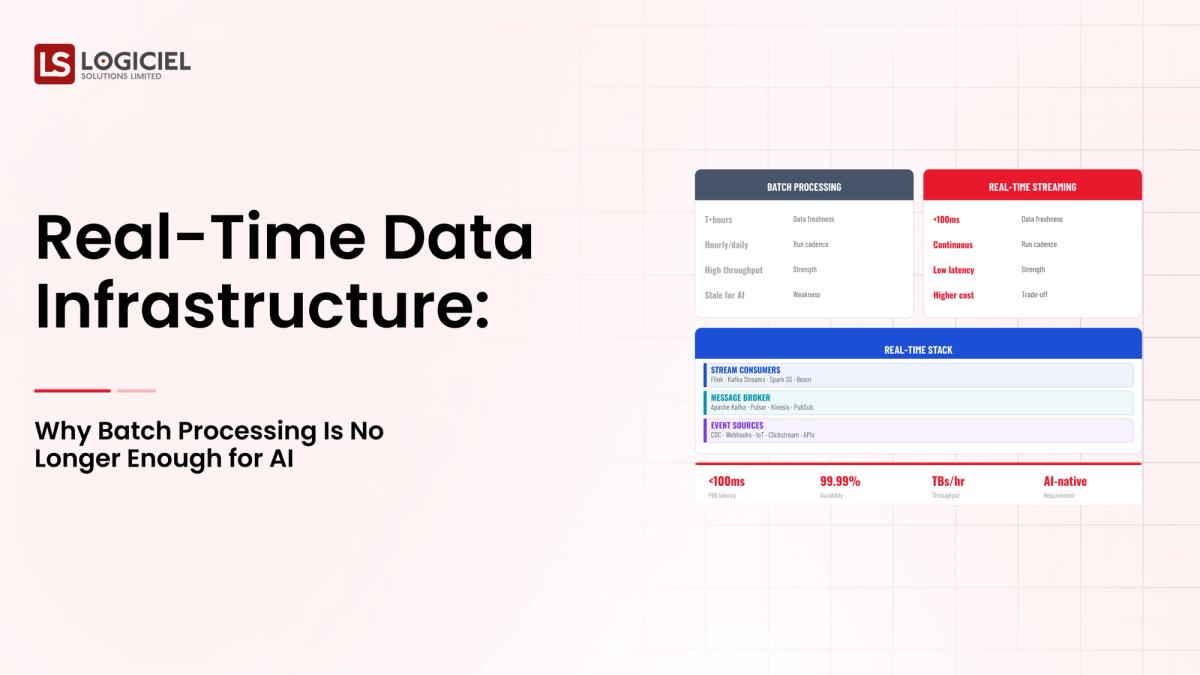
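To illustrate what the stream processing layer does, here is a minimal single-process sketch of event-time tumbling windows with a simple watermark and allowed lateness. Engines such as Apache Flink provide this with state management, checkpointing, and parallelism; the function and its parameters here are hypothetical and only show the core idea.

```python
from collections import defaultdict
from typing import DefaultDict, Iterable, Iterator, Optional, Tuple


def tumbling_window_counts(
    events: Iterable[Tuple[int, str]],
    window_s: int,
    allowed_lateness_s: int = 0,
) -> Iterator[Tuple[int, str, int]]:
    """Count events per key in fixed, non-overlapping event-time windows.

    events: (epoch_seconds, key) pairs, roughly in time order.
    A window [start, start + window_s) is emitted once the highest event time
    seen (a simple watermark) reaches start + window_s + allowed_lateness_s.
    Events whose window the watermark has already passed are dropped.
    """
    open_windows: DefaultDict[int, DefaultDict[str, int]] = defaultdict(
        lambda: defaultdict(int))
    watermark: Optional[int] = None
    for ts, key in events:
        start = ts - ts % window_s
        close_at = start + window_s + allowed_lateness_s
        if watermark is not None and close_at <= watermark:
            continue  # too late: the watermark has already passed this window
        open_windows[start][key] += 1
        watermark = ts if watermark is None else max(watermark, ts)
        # Emit closed windows in time order; sorted() copies keys, so popping is safe.
        for s in sorted(open_windows):
            if s + window_s + allowed_lateness_s > watermark:
                break
            for k, count in sorted(open_windows.pop(s).items()):
                yield s, k, count
    # End of input: flush whatever is still open.
    for s in sorted(open_windows):
        for k, count in sorted(open_windows[s].items()):
            yield s, k, count


if __name__ == "__main__":
    # (timestamp, key); the event at t=20 arrives after t=65 (out of order).
    events = [(0, "a"), (10, "b"), (30, "a"), (65, "a"), (20, "a"), (130, "b")]
    print(list(tumbling_window_counts(events, window_s=60)))
    print(list(tumbling_window_counts(events, window_s=60, allowed_lateness_s=10)))
```

The allowed-lateness setting is the trade-off in miniature: a longer grace period accepts more out-of-order events but delays every result by that amount.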

Real-time versus batch analytics

Workloads that should run in real time

- Fraud detection

- Recommendations

- Operational analytics

Workloads that should run in batch

- Reporting

- Historical analysis

Multiple systems of record

Enterprise systems include:

- CRM

- Billing system

- Product data stores

Your architecture must:

- Integrate them reliably

- Maintain consistency
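A common way to check consistency across systems of record is periodic reconciliation. The sketch below assumes two hypothetical exports (for example from a CRM and a billing system) keyed by a shared customer ID, and reports IDs missing from either side plus fields that disagree on shared IDs.

```python
from typing import Any, Dict, Iterable, List


def reconcile(left: Dict[str, Dict[str, Any]], right: Dict[str, Dict[str, Any]],
              fields: Iterable[str]) -> Dict[str, Any]:
    """Compare records from two systems of record keyed by a shared ID.

    Returns IDs present on only one side and, for shared IDs, the list of
    fields whose values disagree.
    """
    fields = list(fields)
    mismatched: Dict[str, List[str]] = {}
    for key in sorted(left.keys() & right.keys()):
        diffs = [f for f in fields if left[key].get(f) != right[key].get(f)]
        if diffs:
            mismatched[key] = diffs
    return {
        "only_left": sorted(left.keys() - right.keys()),
        "only_right": sorted(right.keys() - left.keys()),
        "mismatched": mismatched,
    }


if __name__ == "__main__":
    crm = {"c1": {"email": "a@example.com", "plan": "pro"},
           "c2": {"email": "b@example.com", "plan": "free"}}
    billing = {"c1": {"email": "a@example.com", "plan": "free"},
               "c3": {"email": "c@example.com", "plan": "pro"}}
    print(reconcile(crm, billing, ["email", "plan"]))
```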

Key point

The best systems are not fully real time; they're hybrid systems that are optimized for specific use cases.

Common Enterprise Use Cases for Data Infrastructure and Analytics

1) Real-Time Operational Analytics

Examples

- Fraud detection

- Patient monitoring

- Inventory tracking

Requirements

- Low latency

- High reliability

2) Regulatory Reporting

Requirements

- Data accuracy

- Full audit trails

3) Customer 360

Combines

- Behavioral data

- Transactional data

- Interactions with customers

4) AI and ML

Requirements

- Fresh data

- Consistent feature sets

- Reliable data pipelines
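"Consistent feature sets" usually means training and serving see the same feature value for the same moment in time. A minimal sketch, assuming features arrive as timestamped updates per entity, is an as-of lookup: training reads each value as of the label's timestamp, serving reads it as of now, and no future data leaks into training. The class and method names are hypothetical.

```python
import bisect
from typing import Dict, List, Optional


class FeatureHistory:
    """Timestamped values of one feature per entity, for as-of lookups."""

    def __init__(self) -> None:
        self._times: Dict[str, List[int]] = {}
        self._values: Dict[str, List[float]] = {}

    def append(self, entity: str, ts: int, value: float) -> None:
        times = self._times.setdefault(entity, [])
        if times and ts < times[-1]:
            raise ValueError("updates must arrive in timestamp order")
        times.append(ts)
        self._values.setdefault(entity, []).append(value)

    def as_of(self, entity: str, ts: int) -> Optional[float]:
        """Latest value recorded at or before ts, or None if none existed yet."""
        times = self._times.get(entity, [])
        i = bisect.bisect_right(times, ts)
        return self._values[entity][i - 1] if i else None


if __name__ == "__main__":
    history = FeatureHistory()
    history.append("user-1", 100, 1.0)   # hypothetical feature updates
    history.append("user-1", 200, 2.0)
    # Training example labelled at t=150 sees only what was known at t=150.
    print(history.as_of("user-1", 150))
    # Online serving at t=250 sees the latest value.
    print(history.as_of("user-1", 250))
```

Using one lookup function for both paths is the design point: if training and serving compute features differently, the model is evaluated on data it will never see in production.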

Key point

Each of these use cases sets its own latency and reliability requirements for your system.

What Enterprise Leaders Do Right That Others Don't

What high-performing teams do:

1) Treat data as a product

Data is curated and reliable

2) Invest early in observability

Detect issues proactively

3) Establish cross-functional ownership

Engineering, compliance, and product teams share ownership
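Investing early in observability often starts with automated data quality checks that run before data is published. A minimal sketch, assuming batches arrive as lists of dictionaries, is shown below; the thresholds are hypothetical defaults to tune per dataset.

```python
from typing import Any, Dict, List, Sequence


def run_quality_checks(rows: Sequence[Dict[str, Any]],
                       required_columns: Sequence[str],
                       max_null_rate: float = 0.01,
                       min_rows: int = 1) -> List[str]:
    """Return human-readable failures; an empty list means the batch passed."""
    failures: List[str] = []
    if len(rows) < min_rows:
        failures.append(f"row count {len(rows)} is below minimum {min_rows}")
    for col in required_columns:
        # A missing key counts as null.
        nulls = sum(1 for r in rows if r.get(col) is None)
        rate = nulls / len(rows) if rows else 0.0
        if rate > max_null_rate:
            failures.append(f"{col}: null rate {rate:.1%} exceeds {max_null_rate:.1%}")
    return failures


if __name__ == "__main__":
    batch = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None},
             {"id": 3}, {"id": 4, "amount": 5.0}]
    problems = run_quality_checks(batch, ["id", "amount"])
    if problems:
        # In a pipeline, this is where you would block publishing and alert.
        print("quality gate failed:", problems)
```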

What other companies get wrong:

1) Reactive approach

Fix problems after they happen

2) Governance avoidance

Avoiding governance increases organizational risk by letting compliance risk grow in unexpected and undesired ways.

Before and After

Before

- High latency: the way legacy applications were built created excessive latency and inefficient operation

- Intermittent failures: poor communication between legacy systems caused sporadic failures and slow recovery

- Low trust: users avoided the legacy applications because of their sporadic downtime and used them only when they had no alternative

After

- Real-time

- Accurate

- Reliable

Note

The key insight is that success comes from proactive system design, not from reactive fixes.

Implementation Considerations and How To Get Started

1. Start With High-Risk Flows (Critical Pipelines, Real-Time Use Cases)

2. Develop a Business Case (Cost Of Latency, Impact Of Failures, etc.)

3. Migration Strategy (Parallel Pipelines, Validation Layers, Gradual Cutover)

4. Scale Ramp (Start Small, Validate, & Expand)

5. Logiciel Objectives

- Real-time observability

- Automated reliability checks

- Unified infrastructure management

- Reduced engineering overhead

- Fewer incidents
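For the business case in step 2, even a rough model helps. The sketch below totals two costs of staying on batch: engineering time spent patching pipelines and the impact of data incidents. Every input is a hypothetical placeholder to replace with your own figures; the 0.35 maintenance share is simply chosen from within the 30-40% of sprint time mentioned earlier.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class StatusQuoInputs:
    """Hypothetical inputs; replace every value with your own figures."""
    engineers: int
    annual_cost_per_engineer: float
    maintenance_share: float      # share of sprint time spent fixing batch pipelines
    incidents_per_year: int
    hours_per_incident: float     # time until stale or broken data is fixed
    impact_cost_per_hour: float   # revenue or productivity lost per hour of impact


def annual_cost_of_status_quo(x: StatusQuoInputs) -> Dict[str, float]:
    """Engineering overhead plus incident impact, per year."""
    engineering = x.engineers * x.annual_cost_per_engineer * x.maintenance_share
    incidents = x.incidents_per_year * x.hours_per_incident * x.impact_cost_per_hour
    return {"engineering": engineering, "incidents": incidents,
            "total": engineering + incidents}


if __name__ == "__main__":
    example = StatusQuoInputs(engineers=10, annual_cost_per_engineer=150_000,
                              maintenance_share=0.35, incidents_per_year=12,
                              hours_per_incident=6, impact_cost_per_hour=5_000)
    print(annual_cost_of_status_quo(example))
```

Comparing this figure with the estimated cost of the migration gives a first-order view of whether real-time infrastructure pays for itself.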

Moving to real time is a gradual journey.
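Steps 3 and 4 above (parallel pipelines, validation layers, gradual cutover, scale ramp) can be sketched in two small functions, assuming both pipelines produce comparable keyed aggregates. The first compares batch and streaming outputs within a relative tolerance; the second routes a stable, growing percentage of entities to the new path. Both functions and the tolerance value are hypothetical.

```python
import hashlib
from typing import Dict, List


def compare_outputs(batch: Dict[str, float], streaming: Dict[str, float],
                    rel_tol: float = 0.001) -> List[str]:
    """Validation layer: list keys where the two pipelines disagree."""
    problems = []
    for key in sorted(batch.keys() | streaming.keys()):
        b, s = batch.get(key), streaming.get(key)
        if b is None or s is None:
            problems.append(f"{key}: present in only one pipeline")
        elif abs(b - s) > rel_tol * max(abs(b), abs(s), 1e-12):
            problems.append(f"{key}: batch={b} streaming={s}")
    return problems


def serve_from_streaming(entity_id: str, rollout_percent: int) -> bool:
    """Gradual cutover: deterministically route a stable slice of entities.

    Hashing the ID (instead of random sampling) keeps each entity on the same
    path between requests, and raising rollout_percent only adds entities.
    """
    bucket = int(hashlib.sha256(entity_id.encode()).hexdigest(), 16) % 100
    return bucket < rollout_percent


if __name__ == "__main__":
    print(compare_outputs({"revenue": 100.0, "orders": 50.0},
                          {"revenue": 100.05, "orders": 60.0}))
    share = sum(serve_from_streaming(f"cust-{i}", 10) for i in range(1000)) / 1000
    print(f"share on streaming at 10% rollout: {share:.1%}")
```

A typical ramp keeps both pipelines running, raises `rollout_percent` only while `compare_outputs` stays clean, and retires the batch path once the streaming path has served all traffic for a while.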

Conclusion: Batch Systems Built Yesterday Will Limit AI Applications Today

Timely Batch Processing

Three Things To Remember

- Real time is required for all critical systems

- Scaling requires flexible, hybrid architectures

- Observability and reliability are the two key foundations

Building modern data infrastructure and analytics systems is complex, but it unlocks faster decisions, better AI performance, and improved customer and user experiences.


Call to Action

If you are evaluating your infrastructure:

- Read and review these related guides:

  - Causes and fixes for data infrastructure that breaks

  - Gaps between data infrastructure and decision-making

- Take action:

  - Request a real-time infrastructure audit or ROI assessment

Connect with Logiciel Solutions to build real-time, AI-driven data systems with low-latency insights, high reliability, and confidence at scale.

Frequently Asked Questions

What Is Real-Time Data Infrastructure?

Real-time data infrastructure is a system that processes and delivers data with minimal delay, so users receive insights immediately and can act on them.

Why Is Batch Processing Not Sufficient For Supporting AI Applications?

AI needs fresh data to produce accurate results. Batch processing delays both the data and the results derived from it, which makes it less effective than real-time processing for these applications.

When Should Organizations Move To Real-Time Infrastructure?

When latency is hurting the business or adding cost. Examples include fraud detection, recommendation systems, and operational analytics.

Is Batch Processing Obsolete?

No. Batch processing remains useful for reporting and historical analysis. Most organizations will run hybrid systems.

What Steps Should an Organization Take When Moving From Batch Processing to Real-Time Infrastructure?

Start with critical pipelines, introduce streaming alongside batch processing, and expand gradually using a scale ramp.