Artificial intelligence agents are increasingly discussed as the next layer of software systems, yet the term itself is often used loosely. For technical leaders, ambiguity is dangerous. Before evaluating deployment strategy, governance, or ROI, it is necessary to establish clarity around definitions, execution models, and architectural impact.

An AI agent is not simply a chatbot. It is not merely a language model. It is not automation wrapped in natural language.

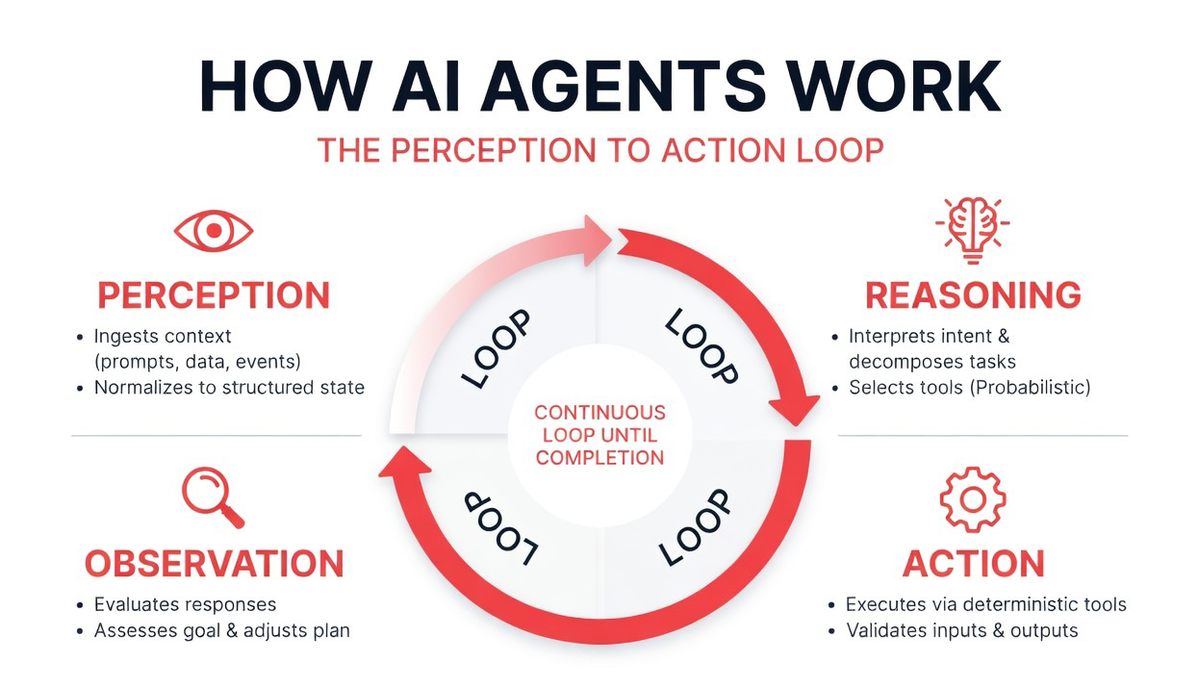

At its core, an AI agent is a goal-oriented software system capable of perceiving context, reasoning about objectives, selecting actions, executing through tools, observing outcomes, and iterating toward completion. That closed-loop behavior is what differentiates agents from static software components.

Understanding this loop and the structural implications it introduces is foundational for CTOs navigating agent adoption.

What Is an AI Agent? A Precise Definition

An AI agent is a software system that operates autonomously or semi-autonomously in pursuit of a defined objective. It interacts with its environment, processes information probabilistically, and executes actions through structured interfaces.

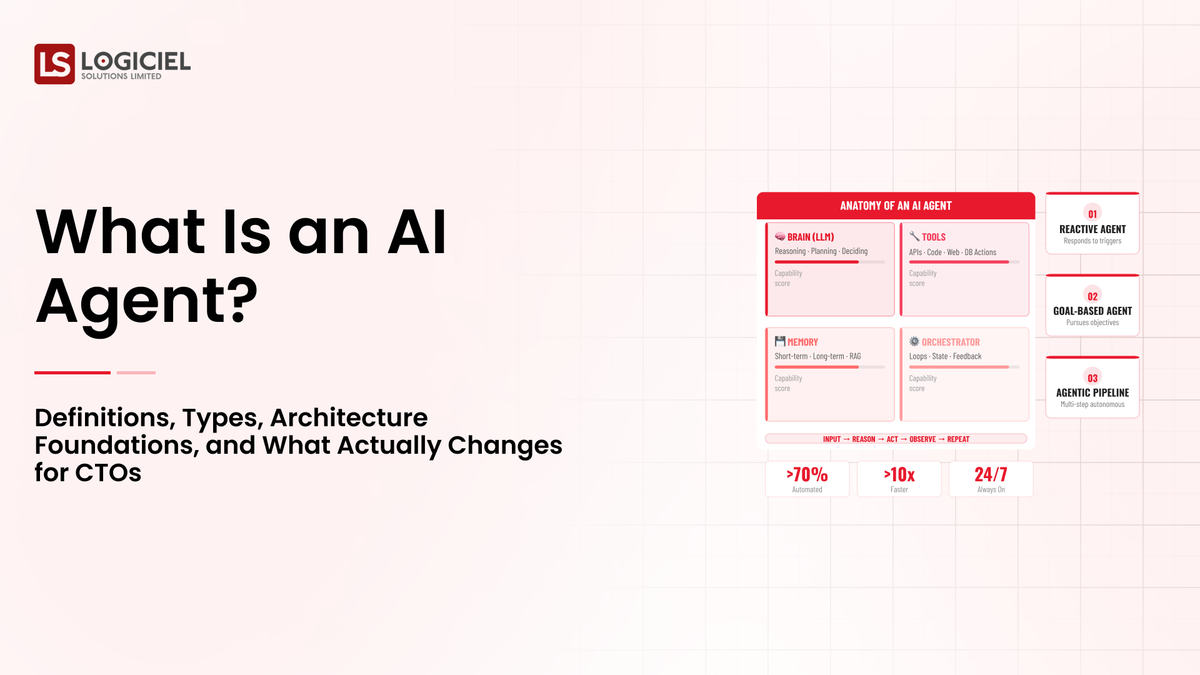

The defining characteristics of an AI agent include contextual perception, dynamic reasoning, planning capability, tool-mediated execution, and feedback-based adjustment. Unlike traditional automation pipelines that follow pre-programmed logic, agents generate execution paths at runtime based on interpreted intent.

In deterministic automation, the flow is encoded directly in code. Input A leads to action B through explicit rules. In agentic systems, input is interpreted through probabilistic reasoning, and a plan is synthesized dynamically. That plan may vary slightly depending on contextual nuance.

This does not imply unpredictability in an uncontrolled sense. Rather, it implies adaptability within bounded constraints.

From an engineering standpoint, the agent operates through a perception-reason-action-observation loop. This loop transforms static systems into adaptive ones.

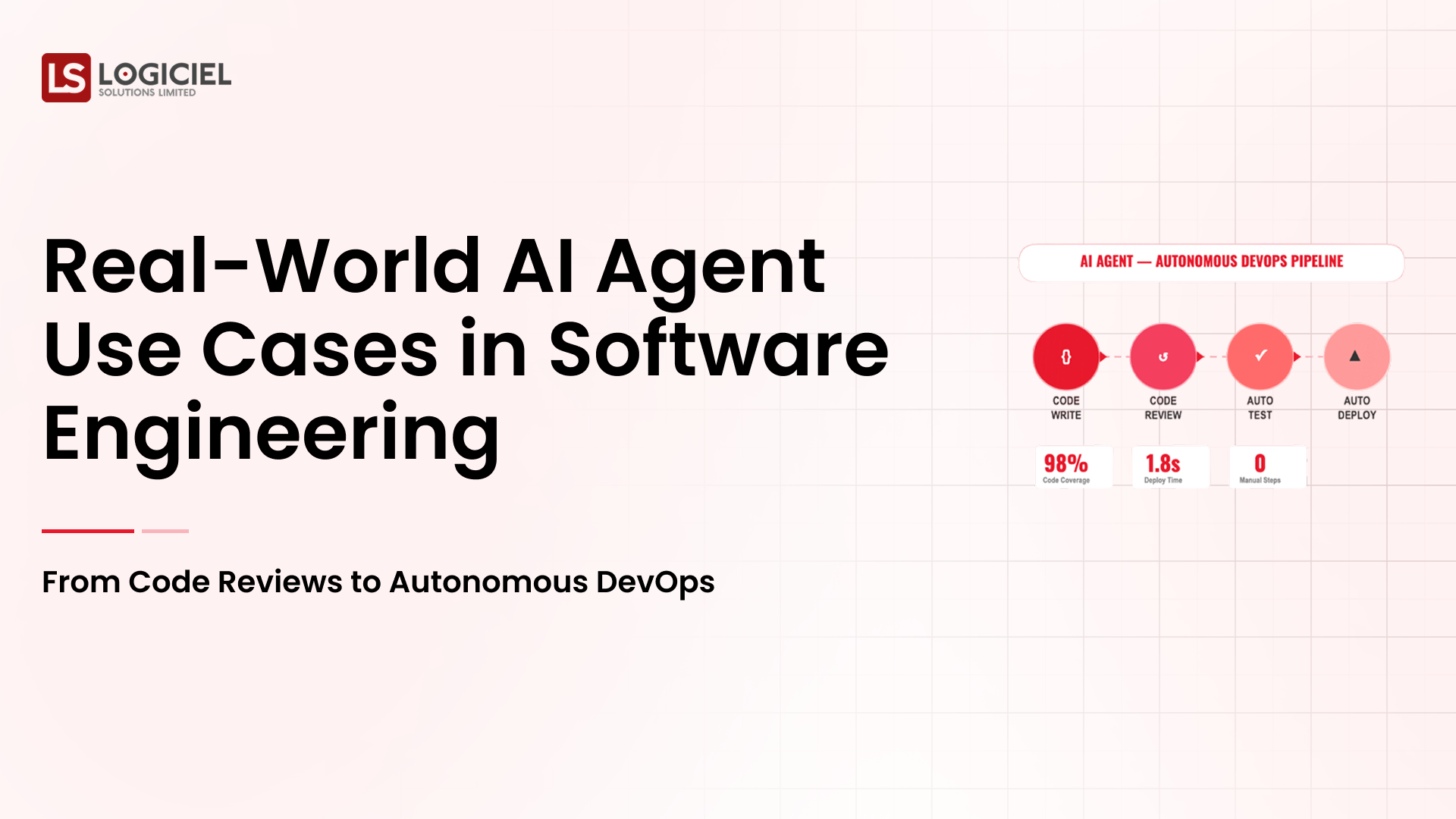

Real Examples of AI Agents in Production

Understanding AI agents requires examining how they function in real systems.

A code review agent integrated into a version control workflow may analyze a pull request, reason about potential issues, query documentation repositories, suggest refactors, and propose test coverage improvements. It is not simply generating text. It is interacting with repositories, APIs, and structured state.

An incident response agent in an operations environment may monitor logs, detect anomaly clusters, query monitoring systems, hypothesize root causes, and draft remediation steps. It observes system state, reasons about patterns, and invokes information-retrieval tools.

A customer support agent may retrieve CRM records, summarize prior interactions, draft contextual responses, and escalate based on sentiment analysis thresholds.

In each case, the agent is interacting with external systems through defined tools and adapting its behavior based on feedback. That closed-loop dynamic is what defines agency.

AI Agents Versus Traditional Software: What Actually Changes

The most significant architectural difference between AI agents and traditional software lies in control flow.

Traditional software systems operate through deterministic logic. Execution paths are predefined by developers. The system processes input according to encoded rules. When unexpected input occurs, failure surfaces as an unhandled exception or edge case.

AI agents introduce probabilistic reasoning into that flow. Instead of following rigid branches, the system interprets context and constructs execution sequences dynamically. The control flow is partially generated at runtime.

This shifts reliability engineering from code correctness to constraint design. In deterministic systems, developers enumerate possible states. In agentic systems, developers define boundaries within which reasoning can operate safely.

Debugging also changes. Traditional debugging involves inspecting stack traces and logic branches. Agent debugging requires examining reasoning traces, tool calls, contextual memory, and output validation.

Security posture changes as well. Deterministic systems enforce permissions around known functions. Agentic systems must enforce boundaries around tool invocation and instruction interpretation. Prompt injection becomes a risk vector. Tool escalation becomes a design concern.
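One way to picture a boundary around tool invocation is a deny-by-default gateway that every tool call must pass through. The sketch below is illustrative only: `ToolGateway`, `ToolNotPermitted`, and the tool names `read_logs` and `restart_service` are hypothetical and do not come from any particular agent framework.

```python
class ToolNotPermitted(Exception):
    """Raised when a requested tool is outside the agent's allowlist."""


class ToolGateway:
    """Hypothetical gateway that every agent tool call must pass through."""

    def __init__(self, allowed):
        self._allowed = set(allowed)  # tool names this agent may invoke
        self._tools = {}              # tool name -> callable

    def register(self, name, fn):
        self._tools[name] = fn

    def invoke(self, name, **kwargs):
        # Deny by default: text produced by the model cannot widen
        # the set of tools it is permitted to call.
        if name not in self._allowed:
            raise ToolNotPermitted(name)
        return self._tools[name](**kwargs)


# Both tools exist, but this agent is only allowed to read.
gateway = ToolGateway(allowed={"read_logs"})
gateway.register("read_logs", lambda service: f"logs for {service}")
gateway.register("restart_service", lambda service: f"restarted {service}")
```

Because the allowlist lives outside the reasoning layer, an injected instruction that asks for `restart_service` is rejected by the gateway regardless of how the model interpreted the prompt.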

Observability evolves from monitoring service uptime to tracking reasoning loops and behavioral patterns.

The core shift is this: traditional software is rule-driven. AI agents are goal-driven.

That difference reshapes system design.

Agentic AI Versus ChatGPT: Strategic Differences for Tech Leaders

The term agentic AI is often conflated with conversational models such as ChatGPT. While large language models form the reasoning core of many agents, an agent is structurally distinct from a standalone chat interface.

ChatGPT, in its basic form, generates text responses to prompts. It does not inherently execute actions, persist structured state, or autonomously pursue multi-step objectives across systems. It is stateless by default unless memory layers are added.

An AI agent, by contrast, integrates a reasoning engine with tool interfaces and orchestration logic. It can execute multi-step plans, persist workflow state, modify external systems, and iterate until objectives are met.

The strategic difference for CTOs lies in risk exposure and architectural impact.

Deploying a conversational assistant primarily affects user experience and knowledge retrieval. Deploying an agent affects system state, infrastructure behavior, and operational workflows.

Chat interfaces inform. Agents act.

That difference carries governance implications.

Types of AI Agents: Reflex, Goal-Based, Utility-Based, and Multi-Agent Systems

AI agents can be categorized according to reasoning complexity and decision criteria.

Reflex agents operate on condition-action rules. They respond directly to environmental stimuli without long-term planning. For example, a monitoring agent that triggers an alert whenever a metric exceeds its threshold operates as a reflex agent.

Goal-based agents reason about objectives. They evaluate possible actions in pursuit of a defined goal. A deployment agent that evaluates rollback conditions based on health checks operates in this mode.

Utility-based agents evaluate outcomes based on value optimization. They select actions that maximize expected benefit according to defined criteria. An agent optimizing cloud resource allocation for cost-performance tradeoffs operates as a utility-based system.
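As a rough illustration of utility-based selection, the sketch below scores each option with a weighted cost-performance utility and picks the maximum. The scoring formula, the `cost_weight` parameter, and the sample instance data are assumptions made for the example, not a recommended pricing model.

```python
def choose_option(options, cost_weight=0.5):
    """Return the option with the highest utility.

    Each option is a dict with normalized 'performance' and 'cost'
    values in [0, 1]. The linear utility below is an assumed formula.
    """
    def utility(option):
        return (1 - cost_weight) * option["performance"] - cost_weight * option["cost"]

    return max(options, key=utility)


# Hypothetical candidate instance sizes.
instances = [
    {"name": "small", "performance": 0.4, "cost": 0.1},
    {"name": "medium", "performance": 0.7, "cost": 0.3},
    {"name": "large", "performance": 0.9, "cost": 0.9},
]

balanced = choose_option(instances, cost_weight=0.5)
performance_only = choose_option(instances, cost_weight=0.0)
```

With equal weighting the medium instance scores highest, while ignoring cost entirely selects the large one. Changing the criteria changes the action, which is the defining property of this agent type.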

Multi-agent systems involve multiple specialized agents interacting through shared state or orchestration layers. A planner agent may generate strategy, an execution agent may perform tasks, and a validation agent may verify results.
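The planner, execution, and validation roles can be sketched as three functions coordinating through shared state. Everything here is a stand-in: in a real system each role would wrap its own reasoning engine and tools, and the function names and state layout are hypothetical.

```python
def planner(goal):
    # Stand-in for a planning agent: splits a goal into ordered tasks.
    return [f"{goal}: draft", f"{goal}: review"]


def executor(task):
    # Stand-in for an execution agent: performs one task.
    return {"task": task, "output": f"done({task})"}


def validator(result):
    # Stand-in for a validation agent: checks the executor's output.
    return result["output"] == f"done({result['task']})"


def run_pipeline(goal):
    # Shared state is the coordination point between the three roles.
    state = {"goal": goal, "results": [], "validated": True}
    for task in planner(goal):
        result = executor(task)
        state["results"].append(result)
        if not validator(result):
            state["validated"] = False
            break  # stop at the first result that fails validation
    return state


state = run_pipeline("release notes")
```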

For CTOs, the relevance of these categories lies in architectural complexity. Reflex agents introduce minimal probabilistic risk. Utility-based and multi-agent systems introduce greater coordination overhead and require more sophisticated observability and governance.

Selecting the appropriate agent type depends on workflow risk tolerance and system maturity.

How AI Agents Work: The Perception to Action Loop

The operational core of an AI agent can be understood as a perception-reason-action-observation loop.

Perception involves ingesting context. This may include user prompts, structured data, logs, or event streams. The input must be normalized into structured state for interpretation.

Reasoning involves interpreting intent and decomposing objectives into tasks. The reasoning engine selects tools and sequences actions. This layer is probabilistic.

Action occurs through deterministic tool interfaces. APIs, databases, file systems, and infrastructure services form the execution boundary. Tools must validate inputs and return structured outputs.
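A tool that validates its inputs and returns structured output might look like the minimal sketch below. The `ToolResult` type, the `get_ticket` function, the `TICKET-` identifier format, and the in-memory store are all hypothetical and exist only to show the shape of the contract.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolResult:
    """Structured envelope returned to the reasoning layer."""
    ok: bool
    data: Optional[dict] = None
    error: Optional[str] = None


# Hypothetical in-memory store standing in for a real backend.
_TICKETS = {"TICKET-1": {"status": "open"}}


def get_ticket(ticket_id) -> ToolResult:
    # Validate before touching the backend; the caller is a
    # probabilistic component and may pass malformed arguments.
    if not isinstance(ticket_id, str) or not ticket_id.startswith("TICKET-"):
        return ToolResult(ok=False, error="ticket_id must look like 'TICKET-123'")
    record = _TICKETS.get(ticket_id)
    if record is None:
        return ToolResult(ok=False, error="not found")
    return ToolResult(ok=True, data=record)
```

Returning errors as data rather than raising gives the observation step something explicit to reason about when a call fails.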

Observation involves evaluating tool responses and assessing whether the goal has been met. If not, the reasoning engine adjusts the plan.

This loop continues until the goal is met or a constraint, such as a retry limit or an escalation trigger, stops it.

The stability of this loop depends on memory integrity, tool determinism, and orchestration constraints.
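The four stages can be sketched as a bounded loop. This is a minimal illustration, not a production orchestrator: the `reason` callable is a deterministic stub standing in for a language model, and the decision format (`action`, `args`, `result`) and the `max_steps` limit are assumptions made for the example.

```python
def run_agent(goal, reason, tools, max_steps=5):
    # Perception: normalize the incoming goal into structured state.
    state = {"goal": goal, "observations": []}
    for step in range(max_steps):
        # Reasoning: decide the next action (probabilistic in a real agent).
        decision = reason(state)
        if decision["action"] == "finish":
            return {"status": "completed", "steps": step, "result": decision["result"]}
        # Action: execute through a deterministic tool interface.
        observation = tools[decision["action"]](**decision["args"])
        # Observation: record the outcome so the next pass can adjust.
        state["observations"].append(observation)
    # Constraint reached before the goal was met.
    return {"status": "halted", "steps": max_steps, "result": None}


def stub_reason(state):
    # Stub policy: look the goal up once, then finish with what was found.
    if not state["observations"]:
        return {"action": "lookup", "args": {"key": state["goal"]}}
    return {"action": "finish", "result": state["observations"][-1]}


tools = {"lookup": lambda key: f"{key}=green"}
outcome = run_agent("status", stub_reason, tools)
```

The `max_steps` bound is the simplest form of orchestration constraint: a reasoner that never decides to finish is halted rather than left to loop indefinitely.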

Measuring AI Agent Autonomy in Production

Autonomy is not binary. It exists along a spectrum.

At the lowest level, agents operate in assistive mode, generating suggestions that require human approval before execution.

At intermediate levels, agents execute bounded workflows automatically but remain constrained by retry limits and escalation triggers.

At higher levels, agents operate with conditional autonomy within defined thresholds.
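The three levels can be expressed as a gating function that decides what happens to a proposed action. This is a sketch under stated assumptions: the level names, the `risk_score` input, and the default limits are hypothetical, and a real deployment would define its own thresholds.

```python
from enum import Enum


class AutonomyLevel(Enum):
    ASSISTIVE = "assistive"      # suggestions only, human approves
    BOUNDED = "bounded"          # automatic within retry limits
    CONDITIONAL = "conditional"  # automatic within defined thresholds


def next_step(level, *, human_approved=False, retries=0, max_retries=3,
              risk_score=0.0, risk_threshold=0.5):
    """Return 'execute', 'await_approval', or 'escalate' for a proposed action."""
    if level is AutonomyLevel.ASSISTIVE:
        return "execute" if human_approved else "await_approval"
    if level is AutonomyLevel.BOUNDED:
        # Retry limit acts as the escalation trigger.
        return "execute" if retries <= max_retries else "escalate"
    # CONDITIONAL: proceed only while the (assumed) risk score stays in bounds.
    return "execute" if risk_score <= risk_threshold else "escalate"
```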

Measuring autonomy requires tracking specific metrics. Autonomous completion rate measures how often workflows finish without intervention. Intervention ratio quantifies human oversight frequency. Loop retries indicate reasoning stability. Tool misuse frequency reveals schema weaknesses.
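These metrics can be computed from per-run records, as in the sketch below. The record fields and the exact formulas are assumptions for illustration; for instance, intervention ratio is taken here as the share of runs with at least one human intervention, and teams may reasonably define it differently.

```python
def autonomy_metrics(runs):
    """Summarize agent runs.

    Each run is a dict with assumed fields: 'completed' (bool),
    'interventions', 'retries', 'tool_calls', 'invalid_tool_calls' (ints).
    """
    total = len(runs)
    if total == 0:
        raise ValueError("no runs to summarize")
    unaided = sum(1 for r in runs if r["completed"] and r["interventions"] == 0)
    intervened = sum(1 for r in runs if r["interventions"] > 0)
    tool_calls = sum(r["tool_calls"] for r in runs)
    invalid = sum(r["invalid_tool_calls"] for r in runs)
    return {
        "autonomous_completion_rate": unaided / total,
        "intervention_ratio": intervened / total,
        "mean_loop_retries": sum(r["retries"] for r in runs) / total,
        "tool_misuse_rate": invalid / tool_calls if tool_calls else 0.0,
    }


# Hypothetical sample data.
sample_runs = [
    {"completed": True, "interventions": 0, "retries": 0, "tool_calls": 4, "invalid_tool_calls": 0},
    {"completed": True, "interventions": 0, "retries": 2, "tool_calls": 6, "invalid_tool_calls": 1},
    {"completed": True, "interventions": 1, "retries": 1, "tool_calls": 5, "invalid_tool_calls": 0},
    {"completed": False, "interventions": 0, "retries": 1, "tool_calls": 5, "invalid_tool_calls": 1},
]
metrics = autonomy_metrics(sample_runs)
```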

Autonomy must be earned through measurable reliability. Deploying full autonomy without observability maturity introduces systemic risk.

For CTOs, autonomy scaling should follow architectural readiness, not enthusiasm.

Foundational Implications for Technical Leaders

Understanding AI agents at a definitional level reveals that they are not simply software enhancements. They introduce a structural shift in how systems execute.

They transform static control flow into dynamic plan synthesis. They move reliability from code correctness to boundary enforcement. They expand security from network perimeters to reasoning constraints. They require observability at the behavioral level.

For technical leaders, the question is not whether agents can increase productivity. The question is whether the underlying architecture can absorb probabilistic reasoning safely.

Definitions matter because they shape design decisions.

AI agents are not chat interfaces. They are reasoning systems connected to action layers.

They are not automation scripts. They are dynamic planners within bounded execution environments.

They are not deterministic components. They are probabilistic layers embedded inside deterministic systems.

Recognizing that structural distinction is the foundation for responsible deployment.
