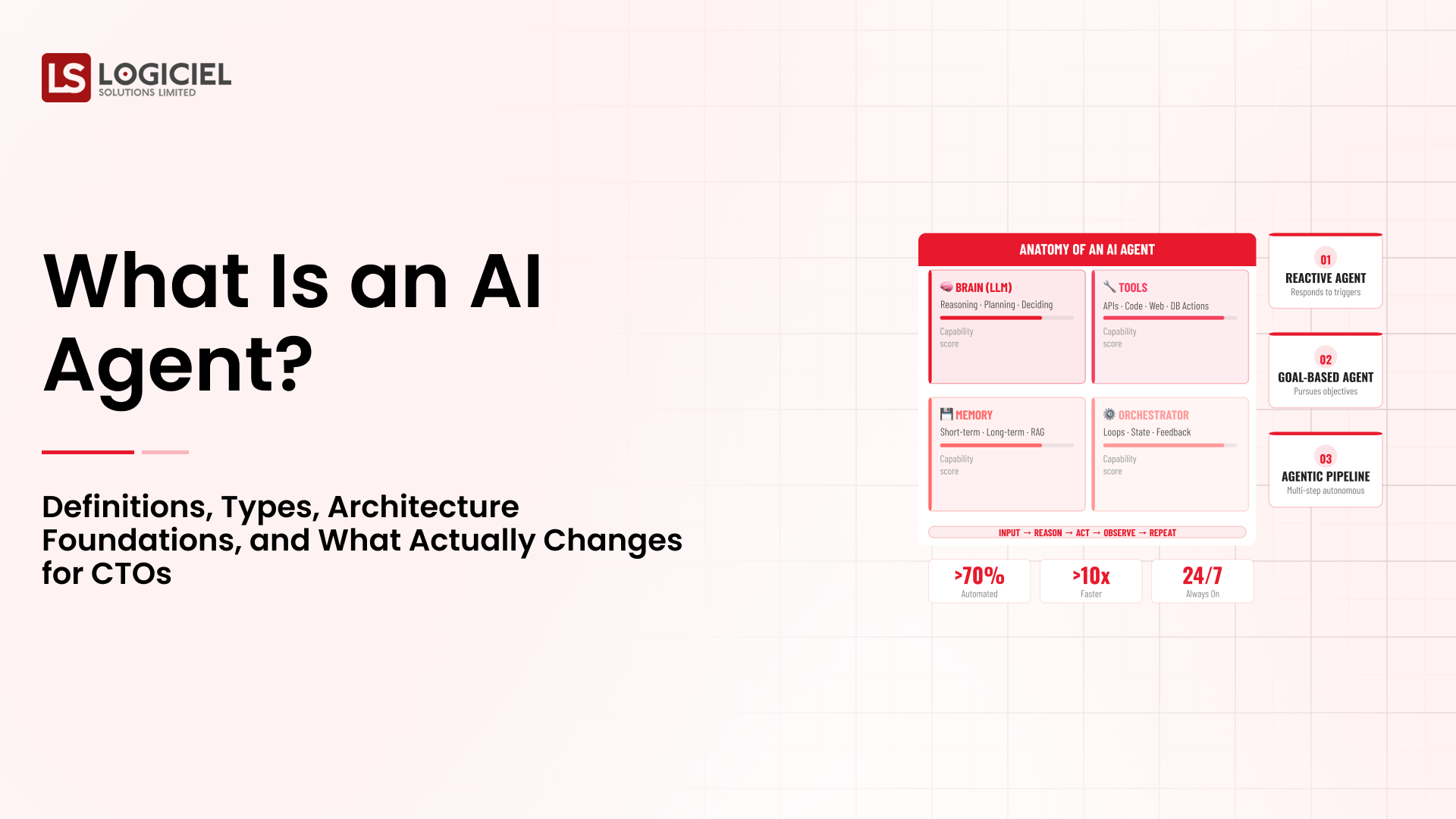

Artificial intelligence agents are rapidly becoming part of modern engineering workflows. While early discussions about AI in software development focused on code generation tools, the current wave of innovation is centered around agents capable of reasoning, interacting with tools, and executing tasks across development environments.

These systems are not limited to writing code. They can analyze repositories, monitor infrastructure, assist with debugging, and automate operational workflows. As organizations experiment with agentic systems, a growing number of real-world use cases are emerging across the software development lifecycle.

For CTOs and engineering leaders, understanding these practical applications is essential. AI agents are most valuable when they are embedded into existing engineering processes rather than used as isolated tools.

This article explores the most impactful real-world use cases for AI agents in software engineering and how organizations are deploying them in production environments.

AI Agents for Code Review and Technical Debt Detection

One of the earliest and most widely adopted applications of AI agents in software engineering is automated code review.

In large engineering organizations, code review can consume a significant amount of developer time. Engineers must analyze pull requests, check for potential bugs, ensure adherence to coding standards, and evaluate the architectural implications of code changes.

AI agents can assist by performing preliminary analysis before human review.

For example, an agent can analyze a pull request and identify potential issues such as missing error handling, inefficient algorithms, or violations of coding standards. The agent can then generate a structured report highlighting areas that require attention.
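As a rough illustration of such a preliminary pass, the sketch below uses Python's standard `ast` module to flag two routine issues and return them as a structured report. The `Finding` structure, the rule names, and the 50-line threshold are assumptions made for this example, not a description of any particular product; a real agent would likely combine checks like these with a language model and project-specific standards.

```python
import ast
from dataclasses import dataclass


@dataclass
class Finding:
    line: int
    rule: str
    message: str


def review_source(source: str, max_function_lines: int = 50) -> list[Finding]:
    """Return routine findings for one Python file (illustrative rules only)."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # A bare `except:` catches every exception, which can hide real errors.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(Finding(node.lineno, "bare-except",
                                    "Catch a specific exception type."))
        # Very long functions are a common maintainability smell.
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > max_function_lines:
                findings.append(Finding(node.lineno, "long-function",
                                        f"{node.name} spans {length} lines."))
    return sorted(findings, key=lambda f: f.line)
```

The sorted list of findings could then be posted as a pull request comment before a human reviewer is assigned.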

This approach allows human reviewers to focus on higher-level design decisions rather than routine checks.

Some organizations also deploy agents that continuously analyze repositories for technical debt. These agents identify outdated dependencies, duplicated logic, and performance bottlenecks across large codebases.

Over time, these insights help engineering teams maintain healthier systems and reduce long-term maintenance costs.

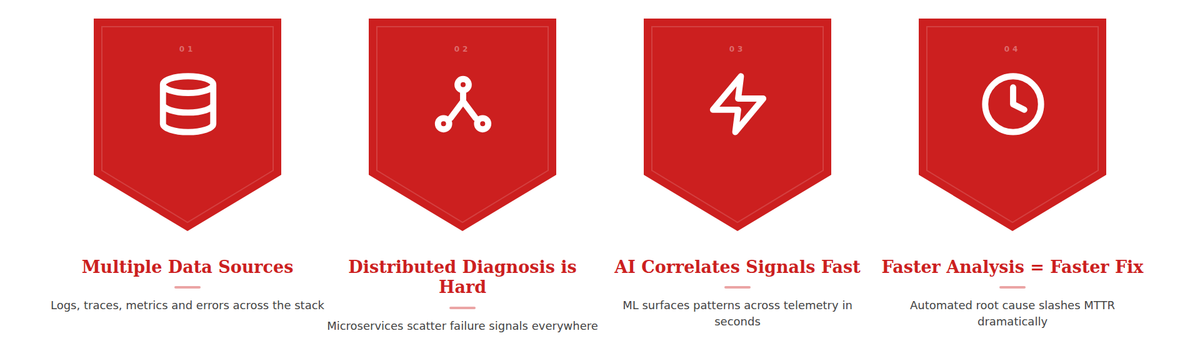

AI Agents for Debugging and Root Cause Analysis

Debugging complex software systems often requires analyzing large volumes of logs, metrics, and traces.

When a system failure occurs, engineers must investigate multiple data sources to identify the root cause. This process can be time-consuming, particularly in distributed systems where failures may propagate across services.

AI agents can accelerate this process by correlating signals across monitoring tools.

For example, when an error spike occurs, an agent may analyze recent deployment logs, infrastructure metrics, and application traces to identify potential correlations.

If the agent detects that a deployment occurred shortly before the error spike, it can highlight the associated code changes and suggest potential rollback actions.
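The time-based correlation described above can be sketched in a few lines. The `Deployment` record and the 30-minute window are assumptions chosen for illustration; in practice the window would be tuned per system and the deployment list would come from whatever deployment tooling the team already uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Deployment:
    service: str
    commit: str
    deployed_at: datetime


def suspect_deployments(deployments, spike_start, window=timedelta(minutes=30)):
    """Return deployments that happened within `window` before the spike,
    most recent first."""
    candidates = [
        d for d in deployments
        if timedelta(0) <= spike_start - d.deployed_at <= window
    ]
    return sorted(candidates, key=lambda d: d.deployed_at, reverse=True)
```

Deployments that happened after the spike started, or long before it, are excluded, so the engineer sees only the changes most likely to be relevant.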

These capabilities help engineering teams diagnose incidents more quickly and reduce mean time to resolution.

AI Agents for Automated Testing

Testing is another area where AI agents are beginning to play a significant role.

Traditional automated testing relies on predefined test cases written by developers. While effective, this approach may fail to capture edge cases or unusual system behaviors.

AI agents can generate additional test scenarios by analyzing application logic and identifying potential failure points.

For example, an agent might examine a function that processes user input and generate test cases involving unusual data formats or extreme values.
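A minimal sketch of this idea follows. The `parse_age` function and the `EDGE_CASES` list are hypothetical stand-ins: here the inputs are hard-coded, whereas an agent would propose them after reading the function under test.

```python
def probe_edge_cases(func, cases):
    """Call `func` with each case and collect the inputs that raise."""
    failures = []
    for case in cases:
        try:
            func(case)
        except Exception as exc:  # broad on purpose: we are cataloguing failures
            failures.append((case, type(exc).__name__))
    return failures


def parse_age(raw):
    """Example function under test (an assumption for this sketch)."""
    age = int(raw)
    if not 0 <= age <= 150:
        raise ValueError("age out of range")
    return age


# Hypothetical inputs an agent might propose for a text field.
EDGE_CASES = ["", "   ", "-1", "999", "12abc", "42", None]

failures = probe_edge_cases(parse_age, EDGE_CASES)
```

Each recorded failure is a candidate test case: the team decides whether the exception is the intended behavior or a bug worth fixing.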

Agents can also analyze test failures to identify likely causes. Instead of simply reporting that a test failed, the agent may examine stack traces and highlight the code paths most likely responsible for the error.

These capabilities improve test coverage and accelerate debugging workflows.

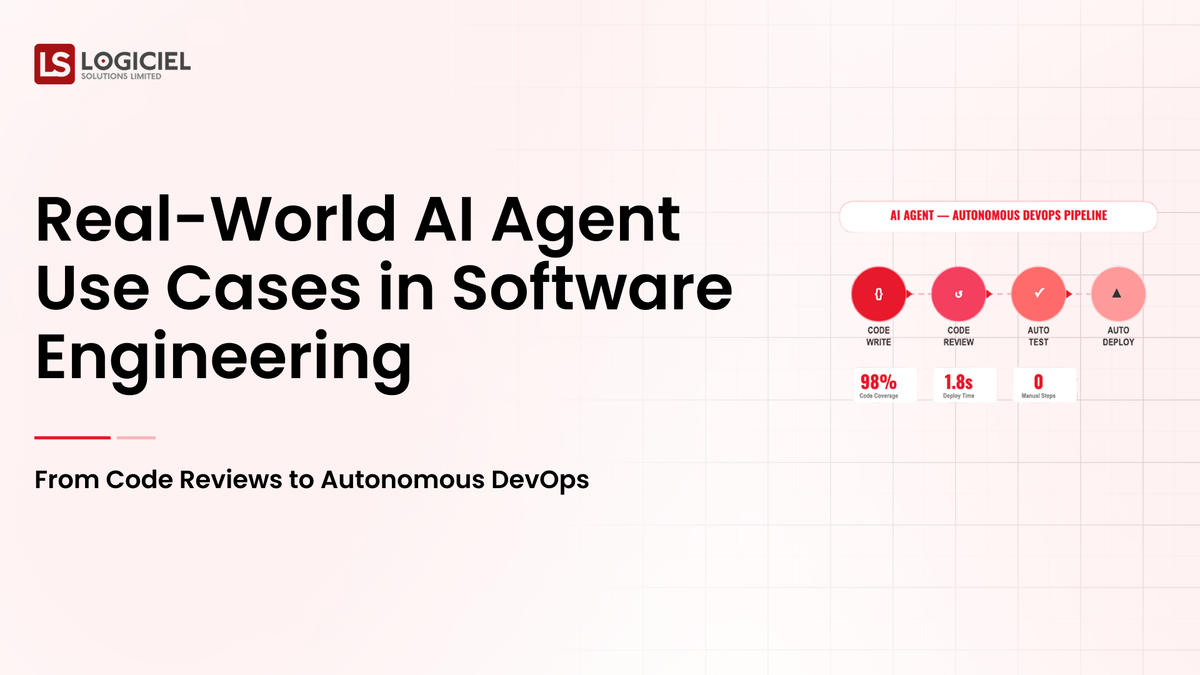

AI Agents in DevOps and CI/CD Pipelines

DevOps pipelines generate large volumes of operational data during builds, tests, and deployments.

When pipelines fail, engineers must interpret logs and identify the root cause. This process can be particularly challenging when multiple services and dependencies are involved.

AI agents can analyze CI/CD pipeline logs to identify failure patterns.

For example, if a build fails due to dependency conflicts, the agent may analyze version compatibility across services and propose configuration changes.
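One simple form of this compatibility check is finding packages that different services pin to different versions. The sketch below assumes the pins have already been parsed into a dictionary per service; the service names and data layout are illustrative only.

```python
from collections import defaultdict


def find_version_conflicts(service_pins):
    """Map each package pinned to more than one version to the services
    using each version."""
    versions = defaultdict(lambda: defaultdict(list))
    for service, pins in service_pins.items():
        for package, version in pins.items():
            versions[package][version].append(service)
    return {
        package: {v: sorted(services) for v, services in by_version.items()}
        for package, by_version in versions.items()
        if len(by_version) > 1
    }
```

The result only shows where versions diverge. Deciding which version to converge on still requires knowledge of each service's constraints.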

Agents can also assist with deployment validation. After a deployment, an agent may monitor system metrics to detect anomalies such as increased latency or error rates.

If unusual patterns emerge, the agent can notify engineers and recommend investigative steps.
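The post-deployment check above can be approximated by comparing metric averages before and after a release. The metric names and the 20% tolerance in this sketch are assumptions; a production system would usually use more robust statistics than a plain mean.

```python
from statistics import mean


def detect_regressions(baseline, current, tolerance=0.2):
    """Return metrics whose post-deploy mean exceeds the baseline mean
    by more than `tolerance` (expressed as a fraction)."""
    regressions = {}
    for name, before in baseline.items():
        after = current.get(name)
        if not before or not after:
            continue  # skip metrics with no samples on either side
        base, now = mean(before), mean(after)
        if base > 0 and (now - base) / base > tolerance:
            regressions[name] = round((now - base) / base, 3)
    return regressions
```

A non-empty result is the trigger for notifying engineers; the relative increase gives them a starting point for the investigation.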

These capabilities transform CI/CD pipelines from static automation systems into adaptive operational tools.

AI Agents for Infrastructure Monitoring

Modern infrastructure environments generate vast amounts of telemetry data.

Monitoring systems track metrics such as CPU usage, memory consumption, request latency, and error rates. While these metrics provide valuable insights, interpreting them requires significant manual effort.

AI agents can analyze telemetry streams to detect patterns and anomalies.

Instead of triggering alerts based solely on predefined thresholds, agents evaluate multiple signals simultaneously.

For example, an agent might detect that a gradual increase in latency coincides with rising memory usage in a specific service. It may then recommend investigating potential memory leaks.
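The latency and memory example can be sketched as a check that both series are trending upward at the same time. This uses an ordinary least-squares slope normalized by the series mean; the function names and the 1% per-sample growth threshold are assumptions for illustration, not a recommended setting.

```python
def relative_slope(values):
    """Least-squares slope per sample, divided by the series mean."""
    n = len(values)
    mean_y = sum(values) / n if n else 0.0
    if n < 2 or mean_y == 0:
        return 0.0
    mean_x = (n - 1) / 2
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return (num / den) / mean_y


def possible_memory_leak(latency_ms, memory_mb, min_growth=0.01):
    """True when latency and memory both grow faster than `min_growth`
    per sample, relative to their means."""
    return (relative_slope(latency_ms) > min_growth
            and relative_slope(memory_mb) > min_growth)
```

Neither signal alone would necessarily cross a fixed alert threshold, which is why evaluating them together is useful.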

This context-aware monitoring approach reduces alert noise and helps engineering teams focus on meaningful issues.

AI Agents in Developer Knowledge Retrieval

Large engineering organizations often maintain extensive internal documentation.

However, developers frequently struggle to locate relevant information when navigating unfamiliar systems.

AI agents can assist by acting as intelligent knowledge retrieval systems.

When a developer asks a question about a particular service or architecture component, the agent can search internal documentation, code repositories, and design documents to provide a concise answer.
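The retrieval step can be illustrated with a deliberately simple keyword-overlap ranking. This is a stand-in technique chosen for brevity: production systems more commonly use embedding-based search, and the document names here are hypothetical.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def rank_documents(query, documents, top_k=3):
    """Rank documents by how many query terms they contain."""
    query_terms = tokenize(query)
    scored = [
        (name, len(query_terms & tokenize(body)))
        for name, body in documents.items()
    ]
    scored = [item for item in scored if item[1] > 0]
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scored, key=lambda item: (-item[1], item[0]))[:top_k]
```

The top-ranked documents would then be passed to a language model as context for composing the concise answer.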

Some agents also provide contextual explanations within development environments. For example, when a developer opens a file, the agent may summarize its purpose and highlight related modules.

These capabilities reduce onboarding time for new engineers and improve knowledge accessibility across teams.

AI Agents for Customer Support Engineering

Customer support teams often handle technical inquiries that require engineering expertise.

Support engineers must analyze error messages, reproduce issues, and identify potential solutions.

AI agents can assist by analyzing incoming support tickets and identifying likely causes.

For example, if a customer reports an error message, the agent may search internal logs and documentation to identify similar incidents.

The agent can then generate a summary explaining the issue and recommend troubleshooting steps.
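One way to find similar past incidents is to reduce each error message to a signature that ignores variable parts such as numbers and addresses. The sketch below assumes incidents are available as `(id, message)` pairs; the normalization rules are illustrative and would need tuning for real log formats.

```python
import re


def error_signature(message):
    """Replace variable parts of an error message with placeholders."""
    sig = message.lower()
    sig = re.sub(r"0x[0-9a-f]+", "<hex>", sig)  # memory addresses
    sig = re.sub(r"\d+", "<n>", sig)            # counts, ids, durations
    return re.sub(r"\s+", " ", sig).strip()


def similar_incidents(ticket_message, past_incidents):
    """Return ids of past incidents whose signature matches the ticket's."""
    target = error_signature(ticket_message)
    return [
        incident_id for incident_id, message in past_incidents
        if error_signature(message) == target
    ]
```

Matching on signatures lets the agent link a new ticket to earlier incidents even when timestamps, node numbers, or durations differ.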

This approach reduces the workload on support engineers and can shorten response times for customers.

AI Agents in Security Monitoring

Security operations represent another area where AI agents are gaining traction.

Security teams must monitor systems for unusual behavior patterns that may indicate vulnerabilities or attacks.

AI agents can analyze system logs and access patterns to detect anomalies.

For example, if an agent observes repeated login attempts from unusual locations or unexpected access to sensitive resources, it may trigger alerts for security teams.
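The login example can be sketched as a count of failed attempts from countries a user does not normally sign in from. The event format, the per-user `usual_countries` mapping, and the threshold of three are assumptions for this illustration.

```python
from collections import Counter


def flag_suspicious_users(events, usual_countries, threshold=3):
    """Flag users with at least `threshold` failed logins from unfamiliar
    countries. `events` is an iterable of (user, country, success) tuples."""
    counts = Counter(
        user
        for user, country, success in events
        if not success and country not in usual_countries.get(user, set())
    )
    return sorted(user for user, n in counts.items() if n >= threshold)
```

Failed attempts from a user's usual location are ignored here, which keeps ordinary mistyped passwords from generating alerts.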

Agents can also assist with vulnerability analysis by scanning codebases for potential security issues and recommending mitigation strategies.

These capabilities help organizations maintain stronger security postures while reducing manual monitoring effort.

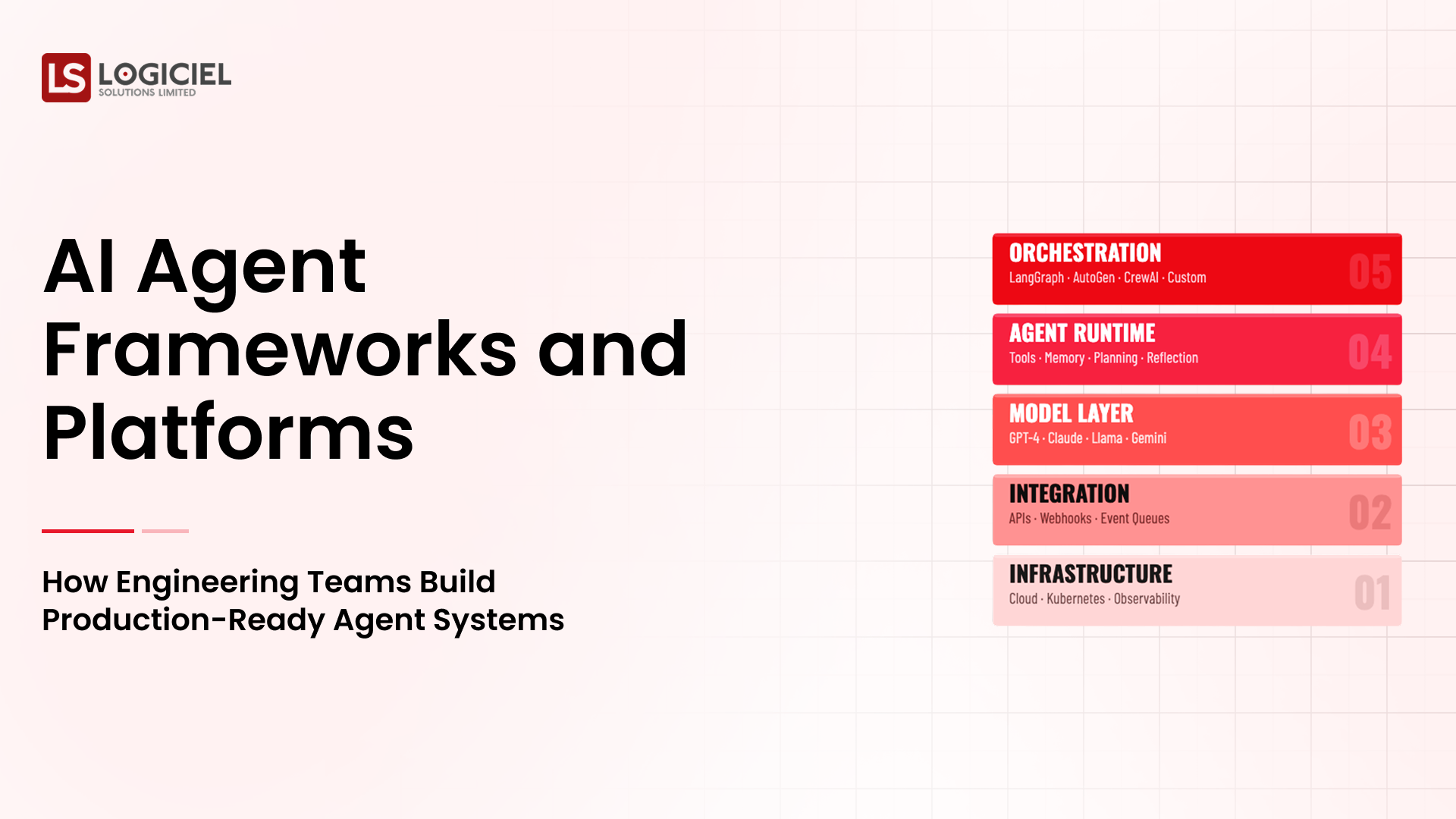

Lessons from Early Enterprise Deployments

Organizations that have successfully deployed AI agents in engineering environments share several common practices.

First, they integrate agents into existing workflows rather than introducing entirely new systems. This ensures that agents complement human expertise rather than disrupting established processes.

Second, they implement strong governance frameworks. Agents operate within defined boundaries, and critical actions require human approval.
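A governance boundary of this kind can be expressed as a small policy check that runs before any agent action. The action names and the three-way decision below are hypothetical; the point of the sketch is that unknown actions are denied by default and critical ones wait for a named human approver.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


# Hypothetical action names, chosen for illustration.
AUTO_ALLOWED = {"read_logs", "comment_on_pr", "open_ticket"}
APPROVAL_REQUIRED = {"rollback_deployment", "merge_pr", "restart_service"}


def authorize(action, approved_by=None):
    """Decide whether the agent may perform `action`."""
    if action in AUTO_ALLOWED:
        return Decision.ALLOW
    if action in APPROVAL_REQUIRED:
        # Critical actions proceed only once a human has signed off.
        return Decision.ALLOW if approved_by else Decision.NEEDS_APPROVAL
    return Decision.DENY  # anything not listed is outside the agent's boundary
```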

Third, they invest in observability. Engineering teams monitor agent behavior and analyze reasoning traces to ensure reliability.

These practices help organizations scale agent adoption responsibly.

The Expanding Role of AI Agents in Engineering

As AI technology continues to evolve, the range of applications for agents in software engineering will expand.

Future agents may assist with architectural design decisions, coordinate development tasks across teams, and optimize system performance automatically.

However, the most successful implementations will remain collaborative.

Human engineers will continue to provide strategic oversight, architectural expertise, and creative problem solving. AI agents will augment these capabilities by handling repetitive analysis and operational tasks.

This partnership between engineers and agents can significantly enhance engineering productivity and system resilience.

Closing Perspective

AI agents are beginning to reshape how software systems are built, operated, and maintained.

From automated code review to infrastructure monitoring, these systems provide powerful capabilities that complement human expertise.

However, successful adoption requires thoughtful integration into engineering workflows, strong governance frameworks, and robust observability systems.

Organizations that approach AI agents strategically will gain significant advantages in engineering productivity and operational efficiency.

Those that treat them as experimental tools may struggle to unlock their full potential.

As agentic systems continue to mature, they will play an increasingly important role in the future of software engineering.
