Artificial intelligence agents are rapidly moving from experimental tools to foundational components of modern software systems. While early adoption focused on developer productivity tools and conversational assistants, enterprise organizations are now exploring how agentic systems can operate across infrastructure, data platforms, DevOps pipelines, and product workflows.

However, deploying AI agents at enterprise scale introduces architectural challenges that differ fundamentally from traditional software design. Agents reason probabilistically, execute dynamically across multiple tools, and operate within evolving contexts. These characteristics require new approaches to system architecture, observability, reliability, and governance.

For CTOs and enterprise architects, the critical challenge is not whether AI agents are powerful. It is whether the organization can design systems capable of integrating reasoning-based automation without compromising reliability, security, or operational clarity.

This article examines how enterprise-grade AI agent architectures are designed, how multi-agent systems operate, how orchestration layers govern reasoning systems, and how organizations build reliable and observable AI infrastructures.

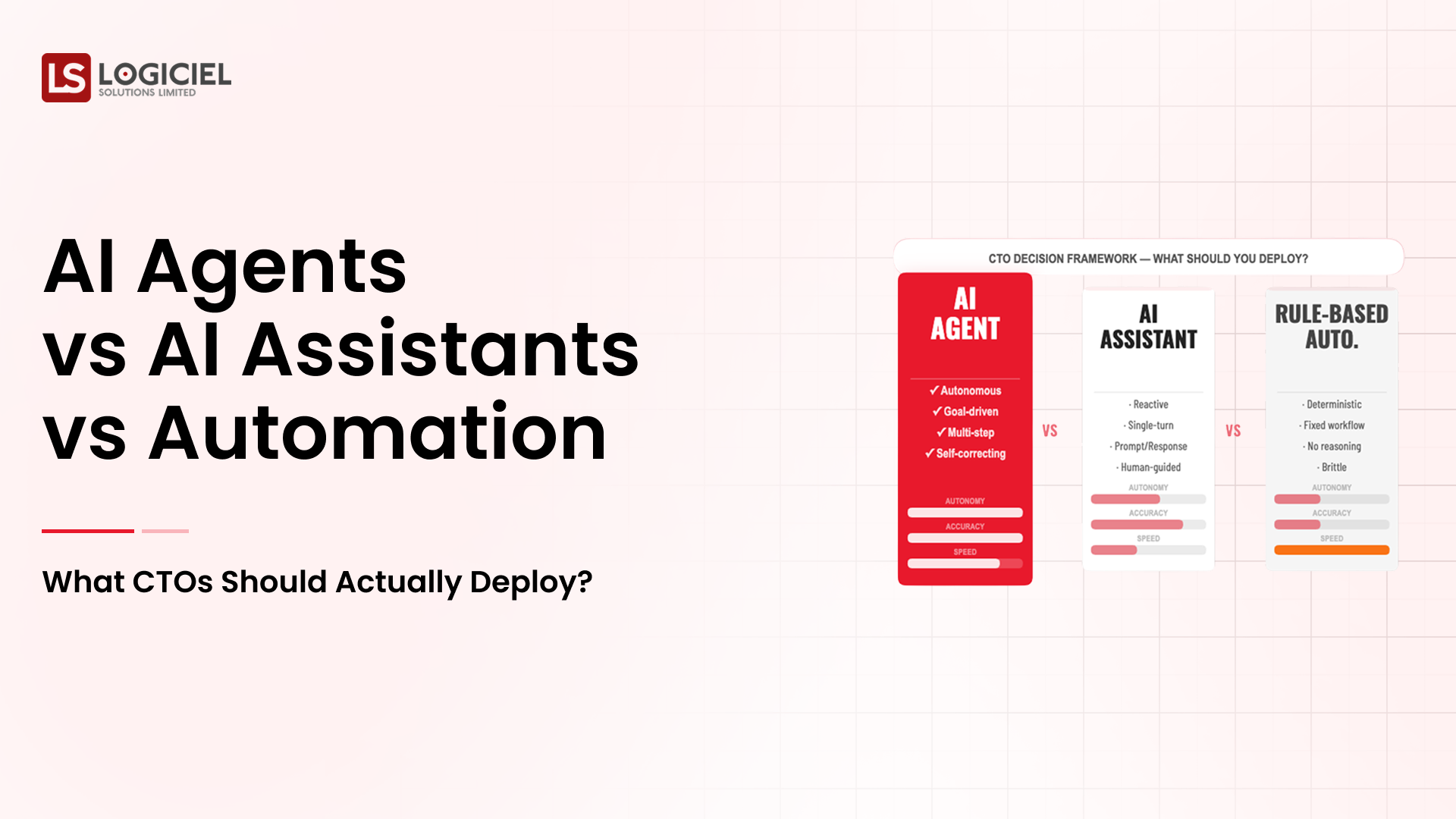

The Shift from Automation to Agentic Architecture

Traditional enterprise automation systems rely on deterministic pipelines. Workflows are defined explicitly, and execution follows predefined rules.

AI agents introduce a different paradigm.

Instead of encoding workflows directly into scripts, organizations can deploy reasoning systems that interpret goals, generate plans, and execute actions dynamically. These agents can analyze context, call tools, retrieve information, and adapt their strategies based on feedback.

This flexibility creates powerful opportunities for automation across complex environments. However, it also introduces variability that must be contained through architecture.

Enterprise agent systems must therefore combine probabilistic reasoning with deterministic control layers.

The reasoning layer provides intelligence and adaptability. The control layer ensures stability, security, and governance.

This dual-layer architecture is the foundation of enterprise agent systems.
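
As a minimal sketch of this split, the example below pairs a stubbed reasoning layer with a deterministic control layer. The names `ProposedAction`, `ALLOWED_TOOLS`, `reasoning_layer`, and `control_layer` are illustrative assumptions, and the reasoning function is a hard-coded stand-in for a model call rather than a real one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    tool: str
    argument: str


# Hypothetical allowlist; a real deployment would load this from policy config.
ALLOWED_TOOLS = {"read_logs", "restart_service"}


def reasoning_layer(goal: str) -> ProposedAction:
    """Stand-in for a model call: maps a goal to a proposed action."""
    if "disk" in goal:
        return ProposedAction("delete_volume", "vol-1")
    return ProposedAction("read_logs", "api-gateway")


def control_layer(action: ProposedAction) -> bool:
    """Deterministic gate: only allowlisted tools may execute."""
    return action.tool in ALLOWED_TOOLS


def run(goal: str) -> str:
    action = reasoning_layer(goal)
    if not control_layer(action):
        return f"blocked: {action.tool}"
    return f"executed: {action.tool}({action.argument})"
```

The point of the design is that the gate's verdict never depends on model output beyond the proposed action itself, so the same proposal always receives the same decision.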

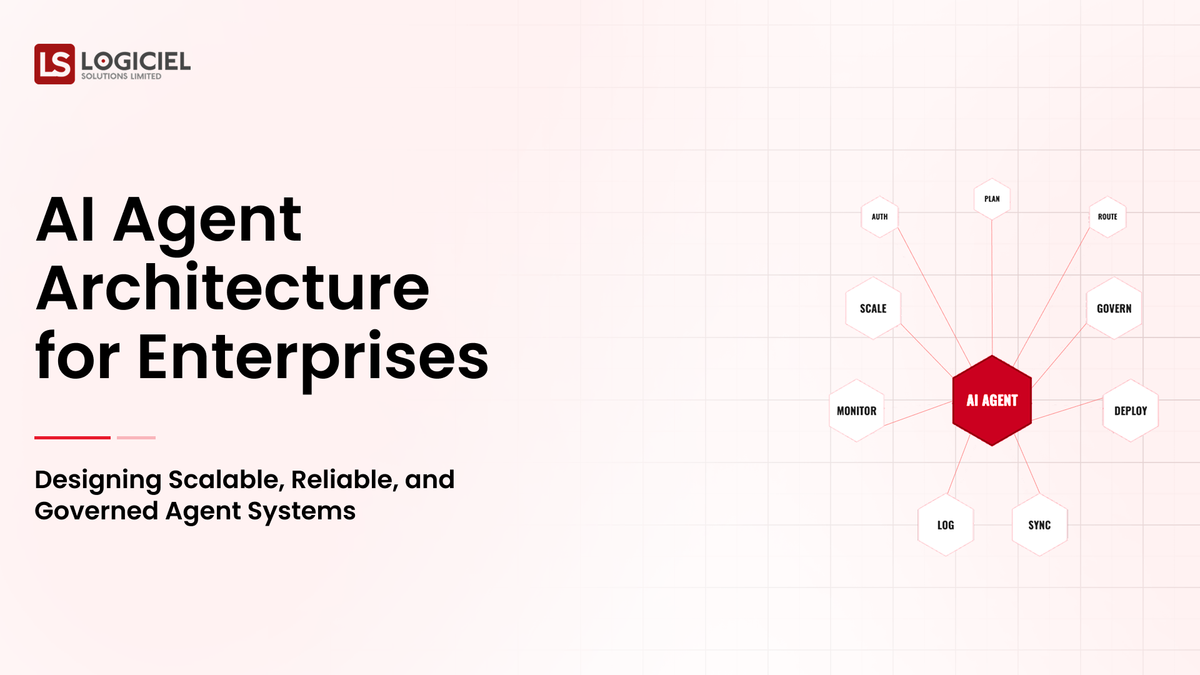

Core Components of Enterprise AI Agent Architecture

A production-grade agent architecture typically includes several distinct components working together.

The first component is the reasoning engine. This layer interprets goals and generates execution strategies. Large language models or similar reasoning systems typically power this component.

The second component is context management. Context includes the data and signals that inform the agent’s reasoning process. This may include user instructions, historical system state, logs, documentation, and memory stores.

The third component is tool integration. Tools provide the agent with the ability to interact with external systems. These tools may include APIs, databases, infrastructure management platforms, and version control systems.

The fourth component is orchestration. The orchestration layer coordinates interactions between reasoning engines, memory systems, and tools. It governs execution order, retries, and fallback mechanisms.

The fifth component is observability infrastructure. Observability systems record reasoning traces, tool interactions, and system outcomes. This visibility is essential for debugging and governance.

Together, these components form the backbone of enterprise AI agent architecture.

Multi-Agent Systems in Enterprise Environments

As agent deployments mature, organizations often transition from single-agent systems to multi-agent architectures.

In a multi-agent system, specialized agents collaborate to complete complex tasks.

One agent may focus on planning tasks. Another may execute tool interactions. A third may validate results or enforce policy constraints.

For example, in a software development environment, a planning agent might analyze a bug report and propose a fix strategy. A coding agent could generate code changes. A validation agent could run tests and verify that the proposed fix resolves the issue.

This separation of responsibilities narrows what each agent has to reason about and makes the system more modular, since each agent can be tested or replaced on its own.
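
The planner, coder, and validator roles described above can be sketched as three functions that pass a shared task record along a fixed sequence. Everything here is hypothetical: the `Task` fields, the agent functions, and the canned plan and patch stand in for model calls and a real test run.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    bug_report: str
    plan: str = ""
    patch: str = ""
    approved: bool = False
    history: list[str] = field(default_factory=list)


def planning_agent(task: Task) -> Task:
    # Stand-in for a model that analyzes the bug report.
    task.plan = f"Add a guard for: {task.bug_report}"
    task.history.append("planner")
    return task


def coding_agent(task: Task) -> Task:
    # Stand-in for a model that generates a code change from the plan.
    task.patch = "if value is None:\n    return default"
    task.history.append("coder")
    return task


def validation_agent(task: Task) -> Task:
    # Stand-in for running a test suite against the patch.
    task.approved = "None" in task.patch
    task.history.append("validator")
    return task


PIPELINE = [planning_agent, coding_agent, validation_agent]


def run_pipeline(task: Task) -> Task:
    """Run each specialized agent in order over the shared task record."""
    for agent in PIPELINE:
        task = agent(task)
    return task
```

Keeping the shared context in one record and the sequence in one list is a simple way to address the coordination concerns that follow: the hand-off format and the ordering are both explicit.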

However, multi-agent systems introduce coordination challenges. Communication protocols must be defined. Shared context must remain synchronized. Orchestration logic must ensure agents operate in coherent sequences.

Enterprise architectures must therefore balance the flexibility of multi-agent systems with the simplicity of single-agent deployments.

The Role of Orchestration in Agent Systems

Orchestration is the structural backbone of enterprise AI agents.

While reasoning engines generate ideas, orchestration determines how those ideas translate into action.

The orchestration layer manages task execution across tools and agents. It ensures that workflows progress logically and safely. It enforces limits on retries, prevents runaway loops, and escalates uncertain outcomes.

In enterprise systems, orchestration often incorporates rule-based constraints alongside probabilistic reasoning.

For example, a DevOps agent may analyze logs and propose remediation actions. However, the orchestration layer may require human approval before infrastructure changes occur.

Orchestration frameworks therefore serve as the governance layer of agentic systems.

They convert reasoning into controlled execution.
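
A minimal sketch of two of these controls, a step budget that stops runaway loops and a human approval gate for risky actions, might look like the following. `MAX_STEPS`, `RISKY_ACTIONS`, and the `approve` callback are assumptions made for illustration, not part of any particular orchestration framework.

```python
from typing import Callable, Iterable

MAX_STEPS = 5  # assumed default budget per task

# Hypothetical set of actions that change infrastructure.
RISKY_ACTIONS = {"restart_service", "apply_config"}


def orchestrate(
    proposals: Iterable[str],
    approve: Callable[[str], bool],
    max_steps: int = MAX_STEPS,
) -> list[str]:
    """Execute proposed actions under a step budget and an approval gate."""
    log: list[str] = []
    for step, action in enumerate(proposals):
        if step >= max_steps:
            log.append("halted: step budget exhausted")
            break
        if action in RISKY_ACTIONS and not approve(action):
            # Risky and unapproved: hand off to a human instead of acting.
            log.append(f"escalated: {action}")
            continue
        log.append(f"executed: {action}")
    return log
```

Because the budget is checked before each action, even an agent that proposes actions indefinitely is stopped after `max_steps` executions.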

Memory Systems in Enterprise Agent Architectures

Memory plays a central role in agent performance.

Without memory, agents operate statelessly, reacting only to immediate inputs. With structured memory systems, agents can maintain continuity across tasks and interactions.

Enterprise architectures typically include multiple forms of memory.

Short-term memory stores contextual information relevant to a specific interaction or task. This allows agents to maintain conversational continuity or track ongoing workflows.

Long-term retrieval memory stores knowledge repositories such as documentation, past incidents, or architectural guidelines. Vector databases often power this retrieval layer.

Structured state memory tracks the progress of workflows. This deterministic memory ensures agents understand which steps have been completed and which remain pending.

Combining these memory layers allows agents to reason more effectively while maintaining operational clarity.
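
The three layers can be sketched in one small class. This is an illustrative assumption rather than a reference design: `AgentMemory` and its methods are hypothetical, and the word-overlap ranking in `retrieve` is a deliberately naive stand-in for the vector similarity search a production retrieval layer would use.

```python
from collections import deque
from typing import Optional


class AgentMemory:
    def __init__(self, steps: list[str], short_term_size: int = 3):
        # Short-term: bounded window of recent messages for the current task.
        self.short_term: deque = deque(maxlen=short_term_size)
        # Long-term: documents available for retrieval.
        self.long_term: list[str] = []
        # Structured state: deterministic record of workflow progress.
        self.state = {step: "pending" for step in steps}

    def remember(self, message: str) -> None:
        self.short_term.append(message)

    def index(self, document: str) -> None:
        self.long_term.append(document)

    def retrieve(self, query: str) -> Optional[str]:
        """Naive word-overlap ranking; a stand-in for vector search."""
        words = set(query.lower().split())
        scored = [
            (len(words & set(doc.lower().split())), doc)
            for doc in self.long_term
        ]
        best = max(scored, key=lambda pair: pair[0], default=(0, None))
        return best[1] if best[0] > 0 else None

    def complete(self, step: str) -> None:
        if step not in self.state:
            raise KeyError(step)
        self.state[step] = "done"

    def pending(self) -> list[str]:
        return [s for s, status in self.state.items() if status == "pending"]
```

Only the retrieval layer is fuzzy here. The workflow state is a plain dictionary, which is what lets the orchestrator answer "what is left to do" without asking the model.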

Observability and Debugging in Agent Systems

One of the most challenging aspects of deploying AI agents in enterprise environments is debugging.

Traditional software debugging relies on deterministic execution paths. Engineers can trace errors through stack traces and logs.

Agent systems operate differently. Execution paths may vary depending on reasoning outcomes.

Observability systems must therefore capture detailed reasoning traces.

These traces include prompts, tool calls, memory retrievals, and execution results. By examining these traces, engineers can reconstruct how an agent arrived at a particular decision.

Observability platforms also track performance metrics such as task completion rates, retry frequency, and tool usage patterns.

This visibility allows engineering teams to identify inefficiencies and refine system behavior over time.

Without observability, agent systems become opaque and difficult to govern.
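
As a rough sketch of what a reasoning trace could contain, the example below records typed events for one task and derives a tool usage count from them. The `Trace` and `TraceEvent` names and the event kinds are assumptions chosen to mirror the list above; real observability platforms define their own schemas.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class TraceEvent:
    kind: str  # e.g. "prompt", "tool_call", "memory_retrieval", "result"
    payload: dict
    timestamp: float = field(default_factory=time.time)


@dataclass
class Trace:
    task_id: str
    events: list[TraceEvent] = field(default_factory=list)

    def record(self, kind: str, **payload) -> None:
        """Append one event to the trace in the order it happened."""
        self.events.append(TraceEvent(kind, payload))

    def tool_usage(self) -> dict[str, int]:
        """Count tool calls by tool name."""
        counts: dict[str, int] = {}
        for event in self.events:
            if event.kind == "tool_call":
                name = event.payload["tool"]
                counts[name] = counts.get(name, 0) + 1
        return counts

    def to_json(self) -> str:
        """Serialize the whole trace for storage or later inspection."""
        return json.dumps(asdict(self))
```

Because events are appended in order, replaying a stored trace shows the sequence of prompts, tool calls, and results that led to a given outcome.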

Reliability Engineering for AI Agents

Reliability in traditional systems focuses on uptime and error rates.

In agent systems, reliability also includes behavioral stability.

An agent that produces inconsistent reasoning or unpredictable execution patterns can undermine system trust.

Reliability engineering for agents includes several strategies.

Testing frameworks should simulate real-world workflows to validate agent behavior. Regression tests help detect unexpected behavior changes introduced by model updates.

Fallback mechanisms provide deterministic alternatives when reasoning fails. For example, if an agent cannot confidently resolve a support ticket, the system may escalate the issue to a human operator.
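
A minimal sketch of such a fallback, assuming the agent returns a confidence score alongside its answer, could look like this. The `AgentAnswer` type, the `CONFIDENCE_THRESHOLD` value, and the return strings are all hypothetical, and how a meaningful confidence score is obtained from a model is left open.

```python
from dataclasses import dataclass
from typing import Callable

CONFIDENCE_THRESHOLD = 0.8  # assumed cutoff; tune per workflow


@dataclass
class AgentAnswer:
    text: str
    confidence: float


def resolve_ticket(ticket: str, agent: Callable[[str], AgentAnswer]) -> str:
    """Use the agent's answer only when it is confident; otherwise escalate."""
    try:
        answer = agent(ticket)
    except Exception:
        # Any failure in the reasoning step takes the deterministic path.
        return "escalated_to_human"
    if answer.confidence < CONFIDENCE_THRESHOLD:
        return "escalated_to_human"
    return f"auto_resolved: {answer.text}"
```

The escalation path contains no model call, so it behaves the same way regardless of what the agent produced.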

Reliability engineering ensures that agents enhance system stability rather than compromise it.

Governance and Policy Enforcement

Enterprise agent deployments must operate within strict governance frameworks.

Agents must comply with organizational policies regarding security, data access, and operational boundaries.

Policy enforcement layers monitor agent actions and prevent unauthorized behavior.

For example, an agent interacting with infrastructure systems should not be able to modify production configurations without explicit authorization.

Governance frameworks often integrate with existing identity and access management systems. Role-based permissions ensure that agents operate within defined scopes.
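
Role-based scoping can be sketched as a lookup that runs before any action. The roles, action names, and `PermissionDenied` exception below are invented for illustration; in practice the mapping would come from the organization's identity and access management system rather than a hard-coded dictionary.

```python
# Hypothetical role-to-action mapping; unknown roles get no permissions.
ROLE_PERMISSIONS = {
    "observer": {"read_logs", "read_metrics"},
    "operator": {"read_logs", "read_metrics", "restart_staging"},
}


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its role."""


def authorize(role: str, action: str) -> None:
    allowed = ROLE_PERMISSIONS.get(role, set())
    if action not in allowed:
        raise PermissionDenied(f"{role!r} may not perform {action!r}")


def perform(role: str, action: str) -> str:
    # The check runs first, so a denied action never reaches execution.
    authorize(role, action)
    return f"{action} performed as {role}"
```

Neither role in this sketch includes a production configuration change, so that action is denied by default until someone grants it explicitly.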

Compliance monitoring ensures that agent activity aligns with regulatory requirements.

Strong governance frameworks transform AI agents from experimental tools into trustworthy operational systems.

Scaling Agent Infrastructure

As organizations expand their use of AI agents, infrastructure demands increase.

Reasoning engines require compute resources. Memory systems require storage and retrieval optimization. Observability systems must process large volumes of trace data.

Scaling agent infrastructure requires careful planning.

Organizations must monitor token consumption, latency, and throughput. Resource allocation strategies must balance performance and cost.

Infrastructure teams may introduce caching layers, distributed memory systems, and load balancing mechanisms to support large-scale agent operations.
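
To make token monitoring and caching concrete, here is a small sketch of a client wrapper that tracks usage against a budget and serves repeated prompts from a cache. `BudgetedClient` is hypothetical, the `model` argument is any callable standing in for a model API, and counting whitespace-separated words is only a crude proxy for real tokenization.

```python
from typing import Callable


class BudgetedClient:
    def __init__(self, model: Callable[[str], str], token_budget: int):
        self.model = model
        self.token_budget = token_budget
        self.tokens_used = 0
        self.cache: dict[str, str] = {}
        self.cache_hits = 0

    def complete(self, prompt: str) -> str:
        # Identical prompts are answered from the cache at no token cost.
        if prompt in self.cache:
            self.cache_hits += 1
            return self.cache[prompt]
        cost = len(prompt.split())  # crude estimate, not a real tokenizer
        if self.tokens_used + cost > self.token_budget:
            raise RuntimeError("token budget exceeded")
        self.tokens_used += cost
        response = self.model(prompt)
        self.cache[prompt] = response
        return response
```

Exact-match caching only helps when prompts repeat verbatim, so the hit counter is worth watching before investing in anything more elaborate.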

Scalable infrastructure ensures that agent adoption can grow without introducing operational bottlenecks.

Security Considerations for Enterprise Agents

Security remains a critical concern in agentic systems.

Because agents interact with multiple tools and data sources, they can become vectors for unintended access or data leakage.

Enterprise security architectures must implement guardrails that constrain agent behavior.

These guardrails include input validation layers, output filtering mechanisms, and permission boundaries around tool interactions.

Security monitoring systems should track anomalous behavior patterns. If an agent attempts to access restricted systems or retrieve sensitive data outside normal workflows, alerts should trigger immediate investigation.
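
The input validation and output filtering guardrails can be sketched as two checks wrapped around an agent call. The patterns below are illustrative assumptions and far from complete: pattern matching catches only the most obvious cases and would sit alongside permission boundaries, not replace them.

```python
import re
from typing import Callable

# Illustrative patterns only; real deployments need broader detection.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
]
SECRET_PATTERN = re.compile(
    r"\b(?:api[_-]?key|password)\s*[:=]\s*\S+", re.IGNORECASE
)


def validate_input(text: str) -> bool:
    """Return False when the input matches a known injection pattern."""
    return not any(p.search(text) for p in INJECTION_PATTERNS)


def filter_output(text: str) -> str:
    """Redact anything that looks like a credential assignment."""
    return SECRET_PATTERN.sub("[REDACTED]", text)


def guarded_call(agent: Callable[[str], str], user_input: str) -> str:
    if not validate_input(user_input):
        return "rejected: suspicious input"
    return filter_output(agent(user_input))
```

In this arrangement the agent never sees rejected input, and the caller never sees unfiltered output.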

By integrating security controls into the architecture itself, organizations can mitigate risks associated with probabilistic reasoning systems.

Strategic Implications for Enterprise Technology Leaders

AI agents represent more than a new category of developer tooling. They represent a shift in how enterprise software systems operate.

Instead of relying solely on deterministic automation, organizations can deploy reasoning-based systems that interpret goals and execute tasks dynamically.

This capability can increase operational efficiency and system responsiveness.

However, the benefits emerge only when organizations design architectures capable of supporting agentic behavior.

CTOs must therefore view AI agent adoption as an infrastructure initiative rather than a product feature.

Investments in orchestration, observability, reliability engineering, and governance determine whether agent systems become strategic assets.

The Future of Enterprise Agent Systems

Over the next decade, enterprise software ecosystems will increasingly incorporate agentic components.

Engineering workflows will include agents that assist with development and debugging. Operations environments will include agents that monitor systems and propose remediation strategies. Product teams will deploy agents that analyze customer data and recommend improvements.

These systems will operate alongside human teams rather than replacing them.

Organizations that design robust agent architectures early will gain structural advantages. They will be able to scale reasoning-based automation safely across their operations.

Those that treat agents as experimental tools without architectural discipline will struggle to move beyond isolated use cases.

The future of enterprise AI will belong to organizations that combine intelligent agents with disciplined engineering practices.
