Software engineering is entering a new architectural phase. For decades, productivity improvements came from better tooling: version control systems, CI/CD pipelines, containerization, and cloud infrastructure. Each wave improved delivery velocity by removing friction from the development lifecycle.

AI agents represent a different kind of shift. They do not merely accelerate existing tools. They introduce reasoning layers into the engineering workflow itself.

Instead of static automation pipelines, engineering organizations can now deploy systems that analyze codebases, interpret developer intent, generate implementation strategies, and execute multi-step workflows across repositories, documentation, and infrastructure services.

For CTOs and engineering leaders, the opportunity is significant, but so is the complexity. AI agents can increase development velocity, but only when they are deployed inside disciplined engineering environments. Without proper architecture, governance, and workflow integration, the same systems that promise acceleration can introduce instability.

Understanding how AI agents reshape software development, from code generation to DevOps automation, is essential for organizations looking to build sustainable engineering advantage.

How AI Agents Are Transforming Software Engineering

The earliest wave of AI adoption in software engineering focused on code generation tools. Developers used AI to autocomplete functions, draft boilerplate code, or generate documentation. These tools were valuable, but they were fundamentally reactive. They responded to prompts rather than actively participating in engineering workflows.

AI agents expand that role dramatically.

Instead of generating isolated code snippets, agents can now interact with entire development environments. They can analyze repositories, search documentation, inspect logs, run test suites, and orchestrate development tasks across multiple tools.

Consider the difference between a traditional code assistant and an engineering agent.

A code assistant generates a function when prompted. An AI agent can analyze a failing test, inspect the relevant code path, generate a patch, run tests locally, and propose a pull request with an explanation of the fix.

This shift, from reactive assistance to goal-oriented execution, marks the beginning of agentic engineering systems.

When integrated correctly, AI agents compress cognitive overhead for developers. They automate repetitive reasoning tasks, accelerate diagnostics, and reduce the time engineers spend navigating large codebases.

However, the transformation occurs only when agents are embedded within engineering workflows rather than used as optional developer tools.

[...] analyze build failures, identify configuration errors, and propose remediation strategies.

Finally, there are workflow orchestration agents that coordinate multiple engineering tools. These agents may analyze support tickets, map issues to relevant repositories, generate reproduction steps, and initiate fixes across teams.

Together, these agents form an emerging AI-powered development layer embedded across the engineering stack.

The objective is not to automate engineering entirely but to distribute reasoning capabilities across the lifecycle.

From Prompt to Production: Deploying AI Coding Agents Safely

Many organizations experiment with AI coding tools but struggle to transition from experimentation to production deployment.

The primary challenge lies in reliability.

Code generation systems can produce functional code quickly, but integrating them into production pipelines requires governance. Without guardrails, AI-generated code may introduce subtle bugs, security vulnerabilities, or maintainability issues.

Safe deployment of AI coding agents begins with bounded execution.

Agents should operate inside controlled environments where their outputs are validated before merging into production codebases. Pull request workflows provide a natural integration point. AI-generated patches can be submitted for human review rather than automatically deployed.

Testing infrastructure also plays a crucial role. Agents should trigger automated test suites after generating code modifications. Failed tests provide immediate feedback on the reliability of the generated solution.
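The review-and-test gate described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a real agent framework: `Patch`, `gate_patch`, and the `run_tests` callback are hypothetical names, and the test runner is passed in as a plain function so the example stays self-contained.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Patch:
    """A hypothetical agent-generated change awaiting validation."""
    description: str
    diff: str


def gate_patch(patch: Patch, run_tests: Callable[[Patch], bool]) -> str:
    """Return the next workflow state for an agent-generated patch.

    The patch is never merged directly: it is either rejected, or
    queued for human review as a pull request.
    """
    if not patch.diff.strip():
        # Nothing to validate, so do not waste a test run or a reviewer.
        return "rejected: empty diff"
    if not run_tests(patch):
        # Failed tests are the immediate feedback signal for the agent.
        return "rejected: tests failed"
    # Passing tests only earns the patch a human review, not a merge.
    return "pending human review"
```

The design choice worth noting is that even a passing test run ends in "pending human review" rather than a merge, which mirrors the pull request workflow described above.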

Organizations must also establish tool permission boundaries. Coding agents should not have unrestricted access to production systems or sensitive configuration environments.

Instead, they should operate within sandboxed environments designed for experimentation and validation.
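One way to express such a permission boundary is an explicit allowlist that is checked before every tool call. The sketch below is illustrative only: `ToolPolicy`, `PermissionDenied`, and the tool and environment names are assumptions made for this example, not part of any specific agent platform.

```python
from dataclasses import dataclass
from typing import FrozenSet


class PermissionDenied(Exception):
    """Raised when an agent requests a tool or environment outside its boundary."""


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical allowlist of tools and environments for one agent."""
    allowed_tools: FrozenSet[str]
    # By default the agent may only act inside the sandbox.
    allowed_environments: FrozenSet[str] = frozenset({"sandbox"})

    def check(self, tool: str, environment: str) -> None:
        """Raise PermissionDenied unless both the environment and tool are allowed."""
        if environment not in self.allowed_environments:
            raise PermissionDenied(f"environment '{environment}' is off limits")
        if tool not in self.allowed_tools:
            raise PermissionDenied(f"tool '{tool}' is not allowlisted")


# Example policy: the agent may read files and run tests, nothing else.
policy = ToolPolicy(allowed_tools=frozenset({"read_file", "run_tests"}))
policy.check("run_tests", "sandbox")  # allowed, returns None
```

With this policy, a call such as `policy.check("run_tests", "production")` raises `PermissionDenied`, because anything not explicitly allowed is denied.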

Transitioning from prompt-based code generation to production-ready agent systems requires engineering discipline.

The goal is not speed alone. It is safe acceleration.

Optimizing Your Codebase for AI Coding Agents

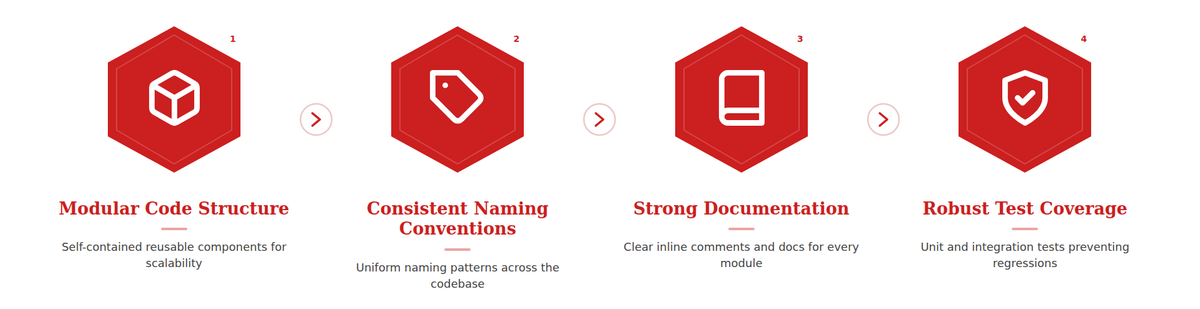

AI agents perform best in structured environments. Codebases that are modular, well-documented, and consistently organized provide stronger context for reasoning systems.

Conversely, poorly structured repositories create ambiguity that agents struggle to interpret.

CTOs looking to adopt AI agents effectively should evaluate their codebase architecture.

Clear module boundaries allow agents to identify the scope of changes more accurately. Consistent naming conventions improve context interpretation. Comprehensive documentation provides the retrieval context agents use to understand system behavior.

Test coverage also becomes more important in agent-assisted environments. Strong test suites act as validation layers for AI-generated changes.

Without robust testing infrastructure, agents may introduce regressions that remain undetected.

Optimizing a codebase for AI agents is therefore closely aligned with general engineering best practices.

Clean architecture improves both human and machine comprehension.

AI Agents and DevOps Automation: Beyond Copilot

DevOps automation has historically relied on deterministic pipelines. Scripts executed predefined tasks such as building containers, running tests, and deploying services.

AI agents introduce reasoning capabilities into these pipelines.

Instead of simply executing scripts, DevOps agents can analyze pipeline failures and identify root causes. They can interpret log outputs, detect misconfigurations, and recommend corrective actions.

For example, if a deployment fails due to dependency conflicts, an agent may analyze the dependency graph and propose compatible version changes.
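As a rough illustration of the dependency example above, the core of "propose a compatible version" can be reduced to picking the newest release that satisfies every constraint placed on a package. This is a simplified sketch: real resolvers handle transitive dependencies and richer version syntax, and the `propose_version` function and tuple-based version format are assumptions made here for clarity.

```python
from typing import List, Optional, Tuple

Version = Tuple[int, int, int]
# A constraint is a half-open range: minimum <= version < maximum.
Constraint = Tuple[Version, Version]


def propose_version(
    available: List[Version], constraints: List[Constraint]
) -> Optional[Version]:
    """Return the newest available version satisfying every constraint.

    Returns None when the constraints cannot all be met, which is the
    case an agent would escalate to a human instead of guessing.
    """
    compatible = [
        version
        for version in available
        if all(low <= version < high for low, high in constraints)
    ]
    return max(compatible, default=None)
```

For instance, with available versions 1.0.0, 1.4.2, and 2.0.0, one service requiring `>=1.2.0,<3.0.0` and another requiring `>=1.0.0,<2.0.0`, the function returns `(1, 4, 2)`. If the two ranges did not overlap, it would return `None`.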

Similarly, monitoring agents can analyze anomaly patterns in infrastructure metrics. Rather than triggering static alerts, they can propose potential causes and remediation strategies.

This evolution moves DevOps from rule-based automation toward adaptive infrastructure management.

However, the same principles of bounded autonomy apply here as well. Infrastructure agents should operate within controlled environments, and critical actions should require human validation.
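Bounded autonomy for infrastructure agents can be sketched as an approval gate in front of critical actions. The action names, `CRITICAL_ACTIONS`, and `execute_action` below are hypothetical; in practice the `approve` callback might be backed by a chat prompt or a ticketing system, which is outside the scope of this example.

```python
from typing import Callable

# Hypothetical set of action names that must never run unattended.
CRITICAL_ACTIONS = {"restart_service", "rollback_deployment", "scale_down"}


def execute_action(
    action: str,
    perform: Callable[[str], str],
    approve: Callable[[str], bool],
) -> str:
    """Run an agent-proposed action, asking a human first if it is critical.

    Non-critical actions run immediately. Critical actions run only
    when the approve callback returns True.
    """
    if action in CRITICAL_ACTIONS and not approve(action):
        return f"blocked: {action} requires human approval"
    return perform(action)
```

Keeping the approval decision in a separate callback means the agent's reasoning code never decides for itself whether a critical action may proceed.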

DevOps agents are most effective when they augment operational teams rather than replace them.

The Engineering Discipline Behind AI Agent Adoption

The success of AI agents in software development ultimately depends on engineering discipline.

Agents do not compensate for weak architecture, poorly documented systems, or inconsistent workflows. In many cases, they expose these weaknesses more clearly.

Organizations with structured codebases, robust testing frameworks, and disciplined CI/CD pipelines benefit most from agent integration.

For these organizations, AI agents compress development cycles, reduce debugging time, and increase engineering throughput.

For organizations with fragmented engineering practices, agents may introduce additional complexity.

Adopting AI agents therefore requires both technical infrastructure and operational maturity.

Building an AI-Augmented Engineering Organization

As agent adoption increases, engineering teams will evolve.

Developers will spend less time navigating large codebases manually and more time defining architectural goals and validating AI-generated implementations.

DevOps engineers will focus less on manual incident investigation and more on designing automated diagnostic workflows.

Engineering leadership will oversee hybrid teams composed of human engineers and AI agents collaborating across development pipelines.

This transition does not eliminate human expertise. Instead, it amplifies it.

The most effective organizations will be those that design workflows where agents handle repetitive reasoning tasks while engineers focus on system design, innovation, and long-term maintainability.

The Strategic Outlook for CTOs

AI agents will not replace software engineers, but they will reshape how engineering teams operate.

The organizations that benefit most will not be those that simply experiment with AI tools. They will be those that embed agentic systems into disciplined development pipelines.

From code generation to DevOps diagnostics, AI agents introduce reasoning layers that compress engineering workflows and increase delivery velocity.

For CTOs, the challenge is not whether to adopt AI agents but how to integrate them safely into production environments.

Architecture, governance, and workflow design will determine whether these systems become strategic assets or short-lived experiments.

When deployed with engineering discipline, AI agents become powerful accelerators of software innovation.
