Artificial intelligence agents are quickly becoming an important component of modern engineering systems. While many developers experiment with AI tools such as code assistants or chat-based programming interfaces, building a production-ready AI agent requires a deeper understanding of architecture, orchestration, and system integration.

Unlike simple prompt-based interactions with language models, AI agents operate as structured software systems. They can retrieve contextual information, interact with external tools, execute multi-step workflows, and adapt their behavior based on feedback.

For engineering teams, learning how to design these systems is an increasingly valuable skill. Organizations that build reliable agent architectures early will be better positioned to apply intelligent automation across development workflows.

This guide explains how to design and deploy a simple but production-ready AI agent for software engineering tasks.

Understanding the Core Architecture of an AI Agent

Before writing code, it is important to understand how AI agents differ from traditional applications.

A conventional application follows deterministic logic. Inputs are processed through predefined rules to produce predictable outputs.

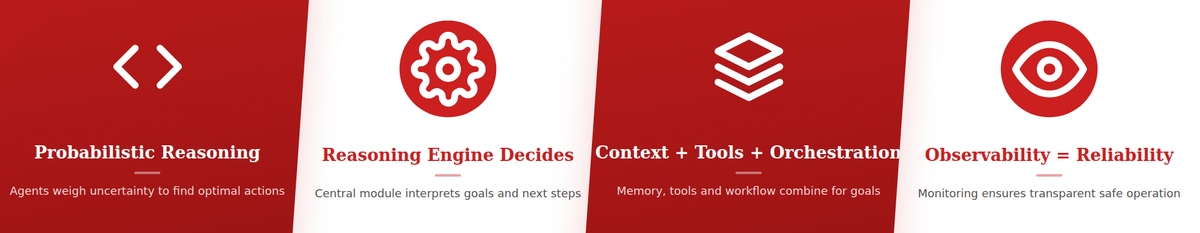

An AI agent introduces probabilistic reasoning into this process. Instead of relying entirely on static rules, it interprets context and generates actions dynamically.

Most agent systems consist of five core components.

The reasoning engine interprets instructions and generates responses. Large language models often power this layer.

The context layer provides the information the agent needs to make decisions. This may include documentation, logs, code repositories, or historical data.

The tool layer enables the agent to interact with external systems such as APIs, databases, or development tools.

The orchestration layer coordinates interactions between reasoning, context retrieval, and tool execution.

Finally, the observability layer records how the agent operates, enabling engineers to monitor and debug its behavior.

Understanding these components helps engineers design agents that are maintainable and reliable.
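To make the five components concrete, here is a minimal sketch of how they might fit together in one object. Everything in it is an assumption for illustration: the `Agent` class, its field names, and the fixed retrieve-then-tools-then-reason sequence are hypothetical, and `reason` is a stand-in for a call to a language model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    # Reasoning engine: stand-in for a language model call (hypothetical).
    reason: Callable[[str], str]
    # Context layer: returns documents relevant to the request.
    retrieve: Callable[[str], List[str]]
    # Tool layer: named callables that reach external systems.
    tools: Dict[str, Callable[[str], str]]
    # Observability layer: an in-memory list of recorded steps.
    trace: List[dict] = field(default_factory=list)

    def run(self, request: str) -> str:
        """Orchestration layer: a fixed sequence of retrieve, tools, reason."""
        context = self.retrieve(request)
        self.trace.append({"step": "retrieve", "output": context})

        tool_output = {name: tool(request) for name, tool in self.tools.items()}
        self.trace.append({"step": "tools", "output": tool_output})

        prompt = f"Request: {request}\nContext: {context}\nTools: {tool_output}"
        answer = self.reason(prompt)
        self.trace.append({"step": "reason", "output": answer})
        return answer
```

A real system would let the reasoning engine choose which tools to call rather than running all of them, but the separation of responsibilities stays the same.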

Step 1: Define the Agent’s Purpose

The first step in building an AI agent is defining a clear purpose.

Agents perform best when they operate within well-defined scopes. Attempting to build a general-purpose agent often leads to complexity and unreliable behavior.

For software engineering teams, useful starting points include tasks such as code review assistance, debugging support, documentation retrieval, or CI/CD pipeline diagnostics.

For example, an agent designed to analyze pull requests might examine code changes, identify potential issues, and generate a review summary.

Defining a narrow use case allows the engineering team to build a focused architecture that can later be expanded.

Step 2: Choose a Reasoning Model

The reasoning engine is the core component of the agent.

Most AI agents today rely on large language models capable of interpreting instructions and generating structured responses.

When selecting a model, engineering teams should consider factors such as accuracy, latency, and cost.

High-capability models provide stronger reasoning abilities but usually cost more per request and respond more slowly. Smaller models are often sufficient for narrow, well-structured tasks.
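One way to make this trade-off explicit is to pick the cheapest model that still meets the task's accuracy and latency requirements. The sketch below assumes the team has measured these numbers on its own evaluation set. The model names and figures are invented placeholders, not benchmarks of any real model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    accuracy: float           # fraction of internal eval tasks passed, 0..1
    p95_latency_s: float      # 95th percentile response time in seconds
    cost_per_1k_tasks: float  # in whatever currency the team budgets in


def pick_model(
    options: List[ModelOption], min_accuracy: float, max_latency_s: float
) -> Optional[ModelOption]:
    """Return the cheapest option that satisfies both constraints, or None."""
    eligible = [
        m for m in options
        if m.accuracy >= min_accuracy and m.p95_latency_s <= max_latency_s
    ]
    return min(eligible, key=lambda m: m.cost_per_1k_tasks, default=None)


# Hypothetical candidates with made-up measurements.
CANDIDATES = [
    ModelOption("large-model", accuracy=0.92, p95_latency_s=6.0, cost_per_1k_tasks=40.0),
    ModelOption("small-model", accuracy=0.81, p95_latency_s=1.5, cost_per_1k_tasks=4.0),
]
```

With these placeholder numbers, a task that tolerates 0.80 accuracy selects `small-model`, while a task that requires 0.90 selects `large-model`.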

In many production systems, the reasoning engine is accessed through APIs, allowing organizations to scale workloads dynamically.

The reasoning model determines how effectively the agent interprets tasks and generates actions.

Step 3: Build the Context Retrieval Layer

AI agents require context to perform meaningful reasoning.

For software engineering agents, relevant context may include:

- Source code repositories

- Technical documentation

- System logs

- Infrastructure metrics

- Historical incident reports

Retrieval systems allow the agent to access this information when needed.

One common approach involves embedding documents into vector databases. When the agent receives a query, it retrieves the most relevant information based on semantic similarity.

This approach helps the reasoning model work from relevant, current knowledge, provided the index is refreshed as the underlying sources change.

Context retrieval dramatically improves the usefulness of AI agents in real engineering environments.
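The embed-then-rank-by-similarity pattern described above can be sketched in a few lines. Note the assumptions: `embed` here is a toy word-count vector, so it matches on shared words rather than on meaning, and `VectorIndex` is a hypothetical in-memory stand-in for a vector database. A real system would call an embedding model and a dedicated store.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (lexical, not semantic)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class VectorIndex:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, doc: str) -> None:
        self._items.append((doc, embed(doc)))

    def search(self, query: str, k: int = 2) -> List[str]:
        """Return the k documents most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]
```

Swapping `embed` for a real embedding model changes the quality of the matches but not the shape of the retrieval step.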

Step 4: Integrate External Tools

Agents become powerful when they interact with external systems.

For example, a debugging agent might query monitoring systems, retrieve logs, and inspect code repositories.

Tool integrations allow agents to move beyond conversation and perform meaningful actions.

Common tools for engineering agents include:

- Git repositories for code analysis

- CI/CD platforms for pipeline diagnostics

- Monitoring systems for infrastructure metrics

- Issue tracking systems for incident reports

Each tool should expose structured interfaces that the agent can call programmatically.
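A structured interface can be as simple as a named handler plus a declared list of required arguments that is checked before the call. The sketch below is illustrative only: `Tool`, `ToolRegistry`, and `get_pipeline_status` are hypothetical names, and the handler returns canned data instead of calling a real CI/CD API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class Tool:
    name: str
    description: str                # shown to the reasoning model
    required_args: Tuple[str, ...]  # argument names the caller must supply
    handler: Callable[..., Any]


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, **kwargs: Any) -> Any:
        """Validate the request against the tool's interface, then run it."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        tool = self._tools[name]
        missing = [arg for arg in tool.required_args if arg not in kwargs]
        if missing:
            raise ValueError(f"{name} is missing arguments: {missing}")
        return tool.handler(**kwargs)


def get_pipeline_status(pipeline_id: str) -> dict:
    # Stub: a real handler would query the CI/CD platform here.
    return {"pipeline_id": pipeline_id, "status": "failed"}


registry = ToolRegistry()
registry.register(
    Tool(
        name="get_pipeline_status",
        description="Return the latest status of a CI/CD pipeline.",
        required_args=("pipeline_id",),
        handler=get_pipeline_status,
    )
)
```

Rejecting unknown tools and malformed arguments at the registry keeps model mistakes from reaching the external system.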

Well-designed tool integrations transform AI agents from assistants into operational systems.

Step 5: Implement the Orchestration Layer

Orchestration coordinates how the agent interacts with its components.

When the agent receives a request, the orchestration layer determines the sequence of actions required to fulfill that request.

For example, if a developer asks an agent to investigate a failing deployment, the orchestration layer may instruct the agent to:

- Retrieve recent CI/CD logs

- Analyze error messages

- Search documentation for related issues

- Generate a diagnostic summary

This step-by-step coordination ensures that the agent performs structured reasoning rather than producing isolated responses.
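The four steps above can be sketched as a sequence of functions that pass a shared state dictionary along. This is a simplified assumption of how an orchestration layer might work: the step functions are hypothetical, and they return canned data where a real agent would call tools, retrieval, and the reasoning model.

```python
from typing import Any, Callable, Dict, List

State = Dict[str, Any]
Step = Callable[[State], State]


def retrieve_logs(state: State) -> State:
    # Stub: a real step would fetch logs from the CI/CD platform.
    state["logs"] = ["step build: ok", "step deploy: ERROR image not found"]
    return state


def analyze_errors(state: State) -> State:
    state["errors"] = [line for line in state["logs"] if "ERROR" in line]
    return state


def search_docs(state: State) -> State:
    # Stub: a real step would query the context retrieval layer.
    found = ["Runbook: verify the image tag exists in the registry"]
    state["docs"] = found if state["errors"] else []
    return state


def summarize(state: State) -> State:
    state["summary"] = (
        f"{len(state['errors'])} error(s) found; "
        f"{len(state['docs'])} related doc(s)"
    )
    return state


def run_workflow(steps: List[Step], request: str) -> State:
    """Run each step in order and record which steps completed."""
    state: State = {"request": request, "completed": []}
    for step in steps:
        state = step(state)
        state["completed"].append(step.__name__)
    return state


DIAGNOSE_DEPLOYMENT = [retrieve_logs, analyze_errors, search_docs, summarize]
```

Calling `run_workflow(DIAGNOSE_DEPLOYMENT, "why did the deploy fail?")` returns the final state, including the summary and the ordered list of completed steps.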

Agent orchestration frameworks can simplify this process.

Step 6: Add Observability and Monitoring

Observability is essential for maintaining production AI agents.

Because agents rely on probabilistic reasoning, their behavior may vary depending on context.

Engineering teams must therefore monitor how agents operate.

Observability systems should capture execution traces including prompts, retrieved context, tool interactions, and outputs.

These traces allow engineers to understand why an agent produced a particular result.

Monitoring metrics such as task success rates, response latency, and error frequency helps teams evaluate system performance.
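As a rough illustration, an execution trace can be a list of typed events per task, with the aggregate metrics computed over many traces. The `Trace` and `TraceEvent` classes and the event kinds are assumptions made for this sketch. A production system would more likely send this data to an existing tracing or logging backend.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List


@dataclass
class TraceEvent:
    kind: str     # e.g. "prompt", "context", "tool_call", "output"
    payload: Any  # must be JSON-serializable for to_json() to work
    timestamp: float = field(default_factory=time.time)


@dataclass
class Trace:
    task_id: str
    events: List[TraceEvent] = field(default_factory=list)
    succeeded: bool = False
    latency_s: float = 0.0

    def record(self, kind: str, payload: Any) -> None:
        self.events.append(TraceEvent(kind, payload))

    def to_json(self) -> str:
        """Serialize the whole trace so it can be stored or shipped."""
        return json.dumps(asdict(self))


def summarize_traces(traces: List[Trace]) -> Dict[str, float]:
    """Compute task count, success rate, and mean latency."""
    n = len(traces)
    if n == 0:
        return {"tasks": 0, "success_rate": 0.0, "mean_latency_s": 0.0}
    return {
        "tasks": n,
        "success_rate": sum(t.succeeded for t in traces) / n,
        "mean_latency_s": sum(t.latency_s for t in traces) / n,
    }
```

Error frequency can be derived the same way by counting traces in which `succeeded` is false.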

Strong observability ensures that AI agents remain transparent and manageable.

Step 7: Implement Guardrails and Safety Controls

AI agents must operate within defined boundaries.

Without guardrails, agents may generate incorrect outputs or attempt actions outside their intended scope.

Guardrails can include permission checks, validation layers, and human approval mechanisms for critical actions.

For example, an agent analyzing infrastructure metrics may generate recommendations but should not modify production systems without authorization.
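A minimal sketch of such a guardrail combines a permission check with a human approval hook for critical actions. The `Guardrail` class, the action names, and the `approver` callback are all hypothetical. In practice the approver might be a chat prompt or a ticket workflow.

```python
from dataclasses import dataclass
from typing import Callable, Set


class ActionBlocked(Exception):
    """Raised when an action is out of scope or not approved."""


@dataclass
class Guardrail:
    allowed_actions: Set[str]        # everything the agent may ever do
    needs_approval: Set[str]         # subset that requires a human sign-off
    approver: Callable[[str], bool]  # returns True if a human approved

    def execute(self, action: str, run: Callable[[], str]) -> str:
        """Run the action only if it passes both checks."""
        if action not in self.allowed_actions:
            raise ActionBlocked(f"{action} is outside the agent's scope")
        if action in self.needs_approval and not self.approver(action):
            raise ActionBlocked(f"{action} was not approved")
        return run()
```

Here read-only actions such as fetching metrics would pass straight through, while anything listed in `needs_approval` waits on the approver before `run` is invoked.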

Safety controls ensure that AI-driven automation remains accountable.

Step 8: Test the Agent in Controlled Environments

Before deploying an AI agent in production environments, teams should conduct extensive testing.

Testing should simulate realistic scenarios that the agent will encounter.

For example, a debugging agent might be tested against historical incident logs to evaluate whether it can identify root causes correctly.

Testing frameworks can also evaluate edge cases, such as incomplete context or ambiguous instructions.
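One simple way to run such a test is to replay labeled historical incidents through the agent and measure how often it names the recorded root cause. In this sketch the incident data is invented, and `keyword_agent` is a trivial stand-in used only so the harness runs. The agent under test would be passed in its place.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class IncidentCase:
    logs: str
    expected_root_cause: str


def evaluate(agent: Callable[[str], str], cases: List[IncidentCase]) -> float:
    """Return the fraction of cases where the agent's answer matches the label."""
    if not cases:
        return 0.0
    hits = sum(1 for case in cases if agent(case.logs) == case.expected_root_cause)
    return hits / len(cases)


def keyword_agent(logs: str) -> str:
    # Stand-in for the real agent: matches on known error strings.
    if "OOMKilled" in logs:
        return "memory_limit"
    if "connection refused" in logs:
        return "dependency_down"
    return "unknown"


# Invented cases, including an edge case with no context at all.
HISTORICAL_CASES = [
    IncidentCase("pod api-7f9 OOMKilled", "memory_limit"),
    IncidentCase("dial tcp: connection refused", "dependency_down"),
    IncidentCase("", "unknown"),
    IncidentCase("no space left on device", "disk_full"),
]
```

Exact string matching on the root cause is a deliberately strict scoring rule. Free-text agent output usually needs a more forgiving comparison.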

These tests help engineering teams identify weaknesses in the agent’s reasoning workflow.

Iterative testing and refinement are essential for building reliable agent systems.

Step 9: Deploy and Monitor in Production

Once testing is complete, the agent can be deployed into production workflows.

However, initial deployments should operate in assistive mode.

In this mode, the agent provides recommendations while human engineers validate outputs.

As confidence in the system grows, organizations may gradually increase automation levels.
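The shift from assistive to more automated operation can be modeled as a single switch that decides whether a recommendation is applied or queued for review. The `Mode` enum, the `handle` function, and the `low_risk` flag are assumptions made for this sketch. How risk is judged is left to the team.

```python
from enum import Enum


class Mode(Enum):
    ASSISTIVE = "assistive"  # humans validate every output
    AUTOMATED = "automated"  # low-risk outputs are applied directly


def handle(recommendation: str, mode: Mode, low_risk: bool) -> dict:
    """Decide whether to apply a recommendation or send it for human review."""
    auto_apply = mode is Mode.AUTOMATED and low_risk
    return {
        "recommendation": recommendation,
        "applied": auto_apply,
        "needs_review": not auto_apply,
    }
```

Starting every deployment in `Mode.ASSISTIVE` means nothing is applied without review until the team deliberately changes the setting.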

Continuous monitoring ensures that the agent performs reliably and that unexpected behavior is detected early.

Common Pitfalls When Building AI Agents

Engineering teams building AI agents often encounter several common challenges.

One issue is overloading agents with too many responsibilities. Narrow use cases lead to more reliable systems.

Another challenge involves insufficient context retrieval. Agents perform poorly when they lack access to relevant information.

A third issue is weak observability. Without execution traces, debugging agent behavior becomes extremely difficult.

Avoiding these pitfalls requires careful architectural planning.

The Future of Engineering Agents

As AI technology evolves, engineering agents will become increasingly capable.

Future systems may collaborate with developers to design architectures, generate test suites automatically, and manage operational workflows across infrastructure environments.

However, the most successful implementations will remain collaborative rather than fully autonomous.

Human engineers will continue to guide system design and strategic decisions, while AI agents provide analytical and operational support.

Organizations that invest in agent architectures today will gain valuable experience as this technology continues to mature.

Closing Perspective

Building AI agents for software engineering is not simply a matter of connecting a language model to a prompt interface.

It requires designing structured systems capable of retrieving context, interacting with tools, and coordinating workflows.

By following a disciplined architectural approach, engineering teams can build agents that provide real value across development and operational environments.
