Picture a CTO choosing between two AI implementation partners on the shortlist. Both demos went well, both reference lists looked strong, and both pricing models are similar. The difference lies in the engineering practices each brings to the engagement, and you cannot evaluate that without a structured checklist.

This is more than a procurement decision. It is a multi-quarter bet on a partner whose engineering practices will live inside your team.

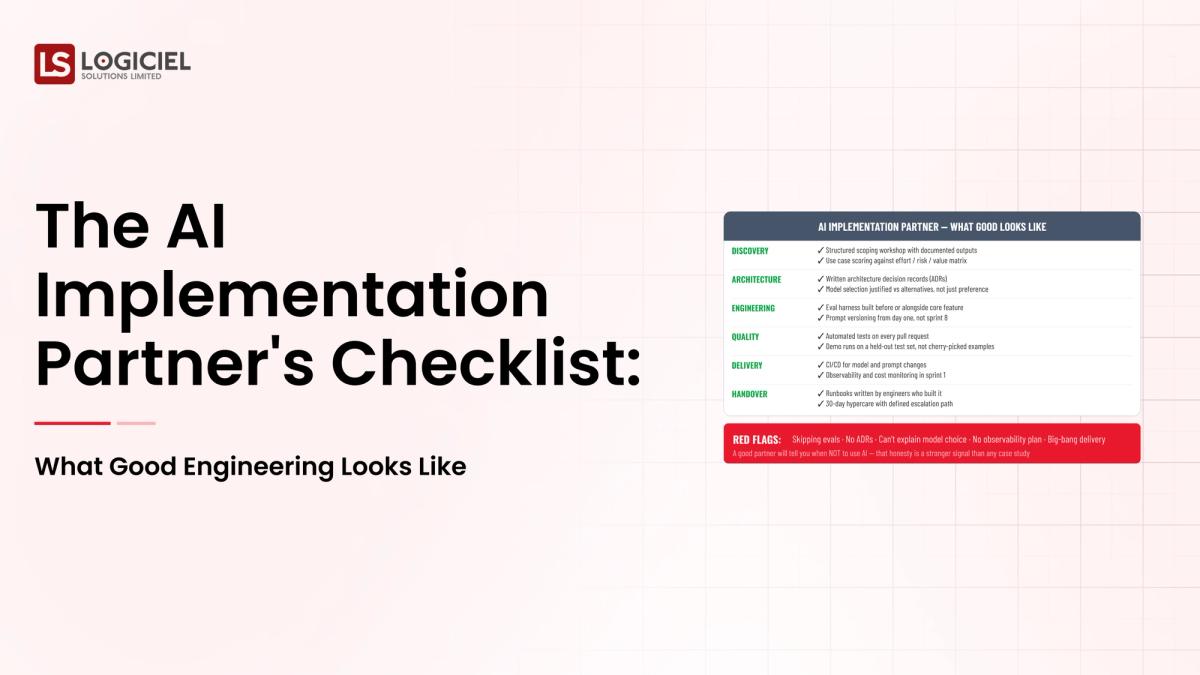

A modern AI implementation partner checklist covers capability, method, governance, cost shape, and exit planning. The checklist is the engineering equivalent of a code review for the partner.

However, most procurement processes lean on demos and reference calls, both of which are curated to look good. The checklist surfaces what they hide.

If you are a CTO responsible for building or scaling your AI implementation partner program, this article aims to:

- Define what good AI implementation partner engineering looks like

- Walk through the checklist across capability, method, governance, cost, and exit

- Lay out the scoring rubric that turns the checklist into a decision

To do that, let's start with the basics.

What Is an AI Implementation Partner? The Basic Definition

At a high level, an AI implementation partner is a professional services firm that helps an enterprise design, build, and operate AI systems in production. Good partner engineering means delivery practices held to the same discipline you expect of production systems.

To compare:

If a vendor demo is a contractor's brochure, the partner checklist is the building inspection. The brochure shows the kitchen; the inspection shows the wiring.

Why Is an AI Implementation Partner Checklist Necessary?

Issues the checklist addresses:

- Surfacing engineering practices that demos hide

- Comparing partners on a structured rubric

- Producing documentation that defends the decision in board review

Issues Resolved by the Checklist

- Provides a shared scorecard for procurement and engineering

- Forces governance and exit conversations before signing

- Builds organizational pattern-matching across multiple partner evaluations

Core Components of the AI Implementation Partner Checklist

- Capability assessment across the layered AI stack

- Method assessment of phase delivery and eval discipline

- Governance and audit posture review

- Cost shape evaluation under multiple scenarios

- Exit planning and knowledge transfer commitments

Modern AI Implementation Partner Evaluation Tools

- Capability scorecards calibrated for AI consulting

- Phase delivery checklists tied to deliverables

- Governance posture review templates

- Cost-curve modeling spreadsheets

- Exit planning templates with named alternatives

Tools support the checklist; the discipline of running it honestly is the differentiator.

Other Core Issues the Checklist Solves

- Reduces decision risk on the multi-year commitment

- Strengthens negotiating position at signing and renewal

- Builds defensible documentation for board review

In Summary: The AI implementation partner checklist is the structured discipline that turns a procurement decision into a defensible engineering bet.

Importance of the AI Implementation Partner Checklist in 2026

Partner checklists matter more in 2026 because AI engineering practices vary widely. Four reasons.

1. Capability gaps hide behind demos.

Demos show what a partner can build; capability gaps show up once the system is in operation. The checklist brings those gaps forward, before you sign.

2. Method discipline is the differentiator.

Most partners can build; few can operate. The method assessment is where evaluations should focus.

3. Governance posture varies widely.

Some partners produce audit-grade evidence by default; many do not. The difference matters in regulated industries.

4. Exit planning protects the future.

Partners that resist exit planning are partners to avoid. The checklist forces the conversation.

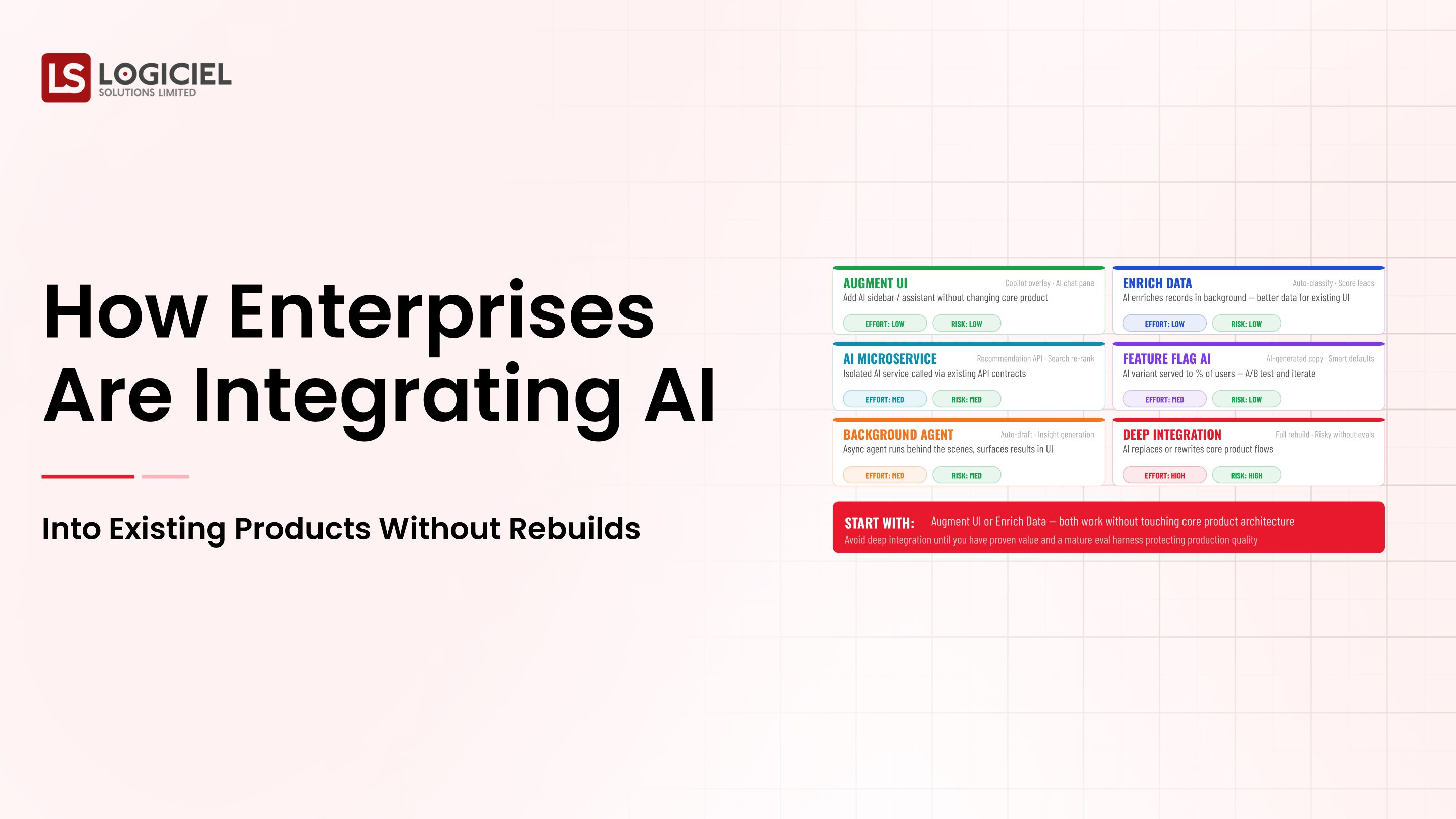

Traditional vs. Modern Partner Evaluation Concepts

- Demo-led evaluation vs. structured checklist scoring

- Curated reference calls vs. non-curated calls with the reference customer's engineers

- Annual contract review vs. quarterly partner check-in

- Procurement-led decisions vs. engineering-led technical evaluation

In summary: The checklist is the engineering equivalent of a building inspection for partner selection.

Details About the Core Components of the Checklist: What Are You Assessing?

Let's go through each layer.

1. Capability Assessment

What the partner can actually do.

Capability checks:

- Reference architecture for a customer your size

- Last three customer-impacting incidents and response shape

- Operating-model deliverables in prior engagements

2. Method Assessment

How they deliver.

Method checks:

- Phase delivery cadence with documented deliverables

- Eval harness shipped to client by end of engagement

- Documentation handoff and knowledge transfer practices

3. Governance Assessment

How they handle audit and risk.

Governance checks:

- SOC 2 Type II report, including any qualified opinions or exceptions

- Incident response process and audit-trail design

- Approach to regulatory regimes relevant to you

4. Cost Shape Assessment

Run their pricing against your usage curve.

Cost checks:

- Year one through year three cost shape

- Sensitivity analysis under multiple scenarios

- Hidden cost lines and change-order practices
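The cost checks above can be sketched as a small sensitivity model. This is a minimal illustration, assuming each proposal reduces to a fixed annual fee plus a metered per-unit price; the partner names, prices, and the three usage scenarios are all hypothetical, and a real model would also carry the hidden cost lines and change orders.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    name: str
    fixed_per_year: float  # retainer or platform fee per year (hypothetical)
    per_unit: float        # metered price per unit of usage (hypothetical)

# Units of usage in years one, two, and three for each scenario (made-up numbers).
SCENARIOS = {
    "low": [100, 120, 140],
    "base": [100, 180, 300],
    "high": [100, 400, 1200],
}

def three_year_cost(p: Pricing, usage: list[float]) -> list[float]:
    """Cost for each year: the fixed fee plus metered usage."""
    return [p.fixed_per_year + p.per_unit * units for units in usage]

def worst_case_total(p: Pricing) -> float:
    """Highest three-year total across all scenarios."""
    return max(sum(three_year_cost(p, usage)) for usage in SCENARIOS.values())

partners = [
    Pricing("Partner A", fixed_per_year=200_000, per_unit=500),
    Pricing("Partner B", fixed_per_year=350_000, per_unit=150),
]

for p in partners:
    for scenario, usage in SCENARIOS.items():
        print(p.name, scenario, three_year_cost(p, usage))

best = min(partners, key=worst_case_total)
print("Lowest worst-case total:", best.name, worst_case_total(best))
```

With these made-up numbers, Partner A is cheaper in the base case, while Partner B has the lower three-year total under the high-usage scenario. Choosing on worst-case total is one reasonable rule; weighting scenarios by likelihood is another.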

5. Exit Planning

What it would take to remove them.

Exit checks:

- Named alternative partners per layer

- Migration shape in dollars and weeks

- Knowledge-transfer commitments in the contract
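One way to make the exit checks concrete is a per-layer ledger recording the named alternative, migration weeks, and migration cost. The layers, alternative names, and estimates below are placeholders, and summing the weeks assumes layers migrate one at a time.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ExitEstimate:
    layer: str
    named_alternative: str  # placeholder name of who would take over this layer
    migration_weeks: int
    migration_cost_usd: int

plan = [
    ExitEstimate("data pipelines", "Alternative X", 6, 90_000),
    ExitEstimate("model serving", "Alternative Y", 4, 60_000),
    ExitEstimate("eval harness", "internal team", 2, 20_000),
]

def missing_alternatives(entries: list[ExitEstimate]) -> list[str]:
    """Layers with no named alternative: lock-in gaps to close before signing."""
    return [e.layer for e in entries if not e.named_alternative.strip()]

def summarize(entries: list[ExitEstimate]) -> tuple[int, int]:
    """Total exit cost in dollars and weeks, assuming layers migrate one at a time."""
    return (sum(e.migration_cost_usd for e in entries),
            sum(e.migration_weeks for e in entries))

cost, weeks = summarize(plan)
print(f"Documented exit cost: ${cost:,} over {weeks} weeks")
print("Layers without a named alternative:", missing_alternatives(plan))
```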

Benefits Gained from the Five Assessments

- Reduced risk on the multi-year commitment

- Stronger negotiating position at signing

- Documentation that defends the decision in board review

How It All Works Together

Capability surfaces what the partner can do. Method surfaces how they deliver. Governance protects audit posture. Cost shape protects unit economics. Exit planning protects optionality. Together, the five assessments produce a decision your future self can defend.

Common Misconception

Partner evaluation is procurement work that engineering supports.

Procurement runs the contract; engineering runs the technical evaluation. The capability and method checks are engineering's domain.

Key Takeaway: Each assessment surfaces a different class of risk. Programs that skip assessments discover the missed risk later.

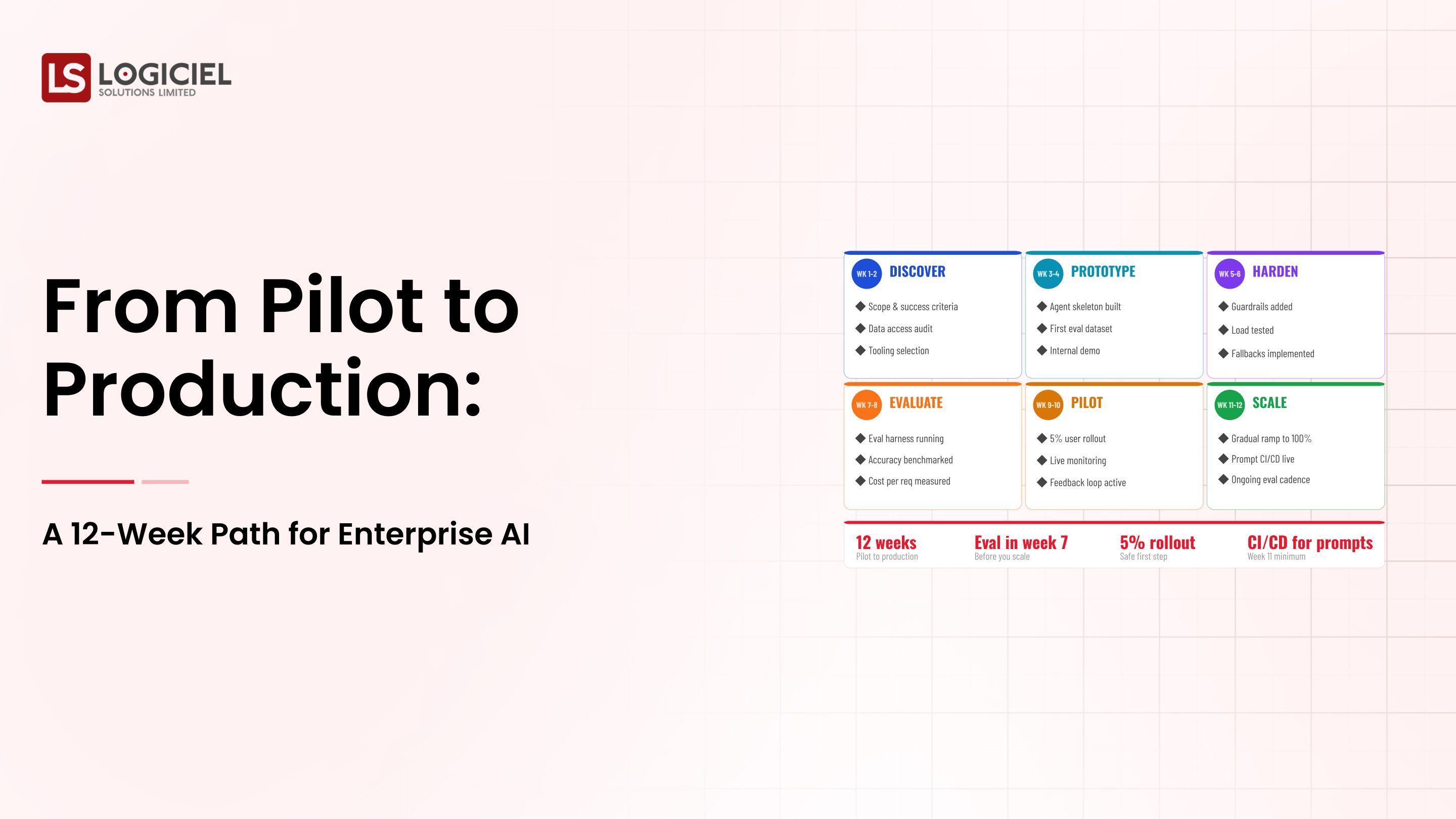

Real-World AI Implementation Partner Evaluation in Action

Let's look at how the checklist works in a real-world example.

We worked with a CTO running a partner selection across three shortlisted firms, with these constraints:

- Eighteen-month engagement

- Multi-region deployment with audit requirements

- Internal team that would inherit the system

Step 1: Run the Capability Assessment

Reference architecture, incident walkthrough, operating-model track record.

- Reference architecture for a customer your size

- Last three incidents reviewed

- Operating-model deliverables sampled

Step 2: Run the Method Assessment

Phase delivery, eval discipline, knowledge transfer.

- Phase deliverables documented

- Eval harness shipped to clients

- Documentation handoff samples

Step 3: Run the Governance Assessment

SOC 2, incident response, regulatory approach.

- SOC 2 Type II review

- Incident response sample

- Regulatory regime alignment

Step 4: Run the Cost Assessment and Reference Calls

Cost sensitivity, plus non-curated reference calls with the reference customers' engineers.

- Sensitivity under multiple scenarios

- Non-curated reference calls

- Engineering-on-engineering questions

Step 5: Write the Exit Plan and Score

Named alternatives; migration cost; knowledge transfer; final scorecard.

- Documented exit cost

- Named alternatives per layer

- Knowledge-transfer commitments
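The scoring rubric that turns the checklist into a decision can be sketched as a weighted scorecard with a veto. The weights, the 1 to 5 scale, the firm scores, and the rule that any assessment below 2 disqualifies a partner are all assumptions for illustration; method carries the largest weight here only because the article names method discipline as the differentiator.

```python
from typing import Optional

# Hypothetical weights; they sum to 1.0 so the result stays on the 1-5 scale.
WEIGHTS = {"capability": 0.25, "method": 0.30, "governance": 0.20, "cost": 0.15, "exit": 0.10}
MIN_SCORE = 2  # hypothetical veto: any assessment below this disqualifies the partner

def weighted_score(scores: dict[str, int]) -> Optional[float]:
    """Weighted 1-5 score, or None if any assessment falls below MIN_SCORE.

    Raises ValueError if an assessment is missing or unexpected.
    """
    if set(scores) != set(WEIGHTS):
        raise ValueError("score exactly the five assessments")
    if min(scores.values()) < MIN_SCORE:
        return None
    return round(sum(WEIGHTS[k] * scores[k] for k in WEIGHTS), 2)

shortlist = {
    "Firm 1": {"capability": 4, "method": 3, "governance": 4, "cost": 3, "exit": 2},
    "Firm 2": {"capability": 5, "method": 4, "governance": 3, "cost": 4, "exit": 1},
    "Firm 3": {"capability": 3, "method": 5, "governance": 4, "cost": 3, "exit": 4},
}

results = {name: weighted_score(s) for name, s in shortlist.items()}
eligible = {name: v for name, v in results.items() if v is not None}
winner = max(eligible, key=eligible.get)
print(results)
print("Highest eligible score:", winner)
```

Firm 2 scores best on capability and cost in this example but is vetoed on exit, which mirrors the advice that partners resisting exit planning are partners to avoid.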

Where It Works Well

- Engineering-led technical evaluation

- Non-curated reference calls

- Exit plan written before signing

Where It Does Not Work Well

- Procurement-led evaluation

- Curated reference calls only

- Multi-year contracts without exit plans

Key Takeaway: The partner you would still pick after running the full checklist is the partner most likely to deliver over an eighteen-month engagement.

Common Pitfalls

i) Demo-led evaluation

Demos are sales artifacts. The signal lives in incident walkthroughs and reference calls.

- Demand a reference architecture for a customer your size

- Demand an incident walkthrough

- Demand non-curated reference calls

ii) Curated reference calls

Vendors prepare their references. Ask for two specific customers similar to you, talk to their engineers, and ask hard questions.

iii) Skipping the exit plan

Multi-year contracts without exit plans are bets your future self has to pay for.

iv) Pricing on today's curve

Run sensitivity analysis. The vendor with the cleanest curve under the worst-case scenario is often the right answer.

Takeaway from these lessons: Most partner-selection regrets trace to skipped checklist items, not bad partners. Run the checklist; trust the structure.

AI Implementation Partner Best Practices: What High-Performing Teams Do Differently

1. Engineering owns the technical evaluation

Capability and method are engineering's domain. Procurement runs the contract.

2. Demand reference architecture

For a customer your size, in your industry, with your data shape.

3. Run non-curated reference calls

Engineering on the call. Twelve-plus months live. Hard questions.

4. Run sensitivity on cost

Three scenarios. Multiple providers. The cleanest curve under the worst case wins.

5. Write the exit plan before signing

Named alternatives, migration shape, knowledge-transfer commitments. Vendors that resist this conversation are vendors to avoid.

Logiciel's value add is helping CTOs run structured partner evaluations using the layered checklist, including the governance, cost sensitivity, and exit planning that procurement processes often skip.

Takeaway for High-Performing Teams: High-performing CTOs treat partner evaluation as engineering, not procurement. The checklist is the deliverable.

Signals You Are Running Your AI Implementation Partner Program Correctly

The signals below distinguish programs that are working from programs that look like they're working. Worth checking yours against the list.

The team describes failure modes without theater. They know the last three things that broke. They know why. They know what changed.

Cost is current. The dashboard shows yesterday's spend, broken out by feature, with someone whose job it is to explain it.
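For the cost signal, the rollup itself is simple; the discipline is keeping it current and owned. A sketch assuming spend rows with hypothetical `day`, `feature`, and `usd` fields, such as you might derive from a billing export:

```python
from collections import defaultdict
from datetime import date, timedelta

yesterday = date.today() - timedelta(days=1)

# Hypothetical spend rows; the field names are assumptions, not a billing API.
records = [
    {"day": yesterday, "feature": "search", "usd": 410.0},
    {"day": yesterday, "feature": "support-bot", "usd": 125.5},
    {"day": yesterday, "feature": "search", "usd": 90.0},
    {"day": yesterday - timedelta(days=1), "feature": "search", "usd": 300.0},
]

def spend_by_feature(rows, day):
    """Total spend per feature for one day, largest first."""
    totals = defaultdict(float)
    for row in rows:
        if row["day"] == day:
            totals[row["feature"]] += row["usd"]
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))

print(spend_by_feature(records, yesterday))
```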

Change is unremarkable. Deploys ship, rollbacks happen, models swap, and nobody panics. Drama in production deploys is a sign that the system isn't yet running like infrastructure.

Eval runs continuously. Daily at minimum. Regression blocks deploy. Quality is a number on a screen, not an opinion in a meeting.
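A deploy gate that blocks on regression might look like the sketch below. The metric names, the assumption that higher is better for every metric, and the 0.01 tolerance are illustrative, not the interface of any particular eval tool.

```python
def regressions(baseline: dict[str, float], candidate: dict[str, float],
                tolerance: float = 0.01) -> list[str]:
    """Metrics where the candidate falls more than `tolerance` below baseline.

    Assumes higher is better for every metric. A metric missing from the
    candidate run counts as a regression.
    """
    failed = []
    for metric, base in baseline.items():
        score = candidate.get(metric)
        if score is None or score < base - tolerance:
            failed.append(metric)
    return failed

def deploy_allowed(baseline, candidate, tolerance=0.01) -> bool:
    """A deploy proceeds only when no metric has regressed."""
    return not regressions(baseline, candidate, tolerance)

baseline = {"answer_accuracy": 0.91, "groundedness": 0.88}
candidate = {"answer_accuracy": 0.93, "groundedness": 0.85}
print("regressions:", regressions(baseline, candidate))
print("deploy allowed:", deploy_allowed(baseline, candidate))
```

Treating a missing metric as a failure is a deliberate choice: an eval that silently stopped running should block the deploy, not pass it. In CI, exiting non-zero whenever `regressions` returns anything is what turns the check into a block.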

The team has done the lock-in math. The cost of removing each major dependency is documented in dollars and weeks. They didn't wait for the painful renewal to figure that out.

Adjacent Capabilities and Connected Work

Programs like this never run alone. They share infrastructure with the data platform, share alert noise with whatever observability stack the SRE team runs, and share a security review queue with everything else trying to ship that quarter.

They also share team capacity, which is the part that gets lost in planning. Platform engineering, applied ML, and SRE all carry pieces of this work. So does whatever leadership has marked as the next big AI initiative. Naming the overlap on day one prevents a year of "I thought your team had that."

If you take one thing from this section, take this: the integration with the data platform is your problem, not theirs. Same for the security review. Same for the on-call rotation. Treating those as someone else's job pushes work onto teams that didn't plan for it, and it comes back as a delay or an incident. Own what you depend on; partner where it makes sense; share the timeline.

Stakeholder Considerations and Communication

The same program will be evaluated by four or five audiences who don't share vocabulary. Worth getting ahead of.

Board: risk, ROI, competitive position. CFO: unit economics, forecast under multiple usage scenarios. CISO: threat model, audit defensibility. Engineering: scope, buy/build, on-call load. Line of business: when value lands, what users experience. None of these questions are unreasonable. They're just easy to fail when you're answering them in real time without prep.

The fix is boring but it works. Build a one-page brief for each major stakeholder. Update quarterly. Have it ready before the meeting where you need it. The cost of writing them is low; the cost of not having them is the meeting where the program loses its sponsor.

The communication cadence question is the same idea, applied to time: weekly updates during delivery, monthly during operation, and an update after every incident and every meaningful change. The teams that protect the cadence keep their stakeholders. The teams that go silent between milestones surprise people, and surprises in this context are rarely good news.

Metrics That Tell You Your AI Implementation Partner Program Is Working

Beneath the signals above sit a few operational metrics worth tracking weekly. They're not the metrics that make it into board decks. They're the ones that tell you, internally, whether the program is on the path or running in place.

Time from idea to production is the most useful single number. New use cases moving faster every quarter is the cleanest sign the platform is paying back. New use cases taking longer than they did six months ago is a sign that something has accreted that nobody is fixing.
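A minimal sketch of the idea-to-production number, assuming you log, for each shipped use case, the quarter it shipped and the days from approved idea to production. The data is made up; the median is used so one outlier project does not swing a quarter.

```python
from statistics import median

# (quarter shipped, days from approved idea to production), one row per use case.
shipped = [
    ("2025-Q3", 94), ("2025-Q3", 120),
    ("2025-Q4", 70), ("2025-Q4", 85), ("2025-Q4", 101),
    ("2026-Q1", 48), ("2026-Q1", 66),
]

def median_lead_time_by_quarter(rows):
    """Median idea-to-production days per quarter, in quarter order."""
    by_quarter = {}
    for quarter, days in rows:
        by_quarter.setdefault(quarter, []).append(days)
    # "YYYY-Qn" labels sort chronologically as plain strings.
    return {q: median(days) for q, days in sorted(by_quarter.items())}

def improving(series: dict) -> bool:
    """True if every quarter is faster than the one before it."""
    values = list(series.values())
    return all(later < earlier for earlier, later in zip(values, values[1:]))

lead_times = median_lead_time_by_quarter(shipped)
print(lead_times, "improving:", improving(lead_times))
```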

Cost per unit of value is next. Spending less per output each quarter is the leading indicator that the platform layer is amortizing. Spending more is the leading indicator that you're carrying complexity nobody has audited.
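Cost per unit of value is a single division, but it is worth seeing why it beats total spend. The quarterly costs and unit counts below are hypothetical, and a "unit" stands for whatever output the program counts.

```python
quarters = ["2025-Q3", "2025-Q4", "2026-Q1"]
platform_cost_usd = [120_000, 135_000, 150_000]  # hypothetical total spend
units_delivered = [40_000, 60_000, 100_000]      # hypothetical outputs

cost_per_unit = {
    q: cost / units
    for q, cost, units in zip(quarters, platform_cost_usd, units_delivered)
}

# Amortizing means each quarter costs less per unit than the one before.
values = list(cost_per_unit.values())
amortizing = all(later < earlier for earlier, later in zip(values, values[1:]))
print(cost_per_unit, "amortizing:", amortizing)
```

Total spend rises every quarter in this example while cost per unit halves, which is the amortization signal; total spend alone would read as a warning.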

Incident severity over time should trend downward. Operating models mature; runbooks improve; on-call gets better at triage. Flat severity is fine for a quarter; flat severity for a year says the team has stopped learning from incidents.
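To tell falling severity from flat severity, one option is a weighted severity load per quarter. The SEV weights, the sample incidents, and the four-quarter window standing in for "flat for a year" are assumptions.

```python
# SEV1 is the most severe; these weights are hypothetical.
SEVERITY_WEIGHT = {1: 10, 2: 3, 3: 1}

# Severity level of each incident, per quarter (made-up data).
incidents = {
    "2025-Q2": [1, 2, 2, 3],
    "2025-Q3": [2, 2, 3, 3],
    "2025-Q4": [2, 3, 3],
    "2026-Q1": [3, 3],
}

def severity_load(levels: list[int]) -> int:
    """Weighted sum rather than a mean, so a pile of minor incidents cannot dilute the figure."""
    return sum(SEVERITY_WEIGHT[level] for level in levels)

def stalled(load_by_quarter: dict, window: int = 4) -> bool:
    """True if the latest quarter is no better than the first quarter of the window."""
    values = list(load_by_quarter.values())
    if len(values) < window:
        return False  # not enough history to call it flat
    return values[-1] >= values[-window]

load = {q: severity_load(levels) for q, levels in incidents.items()}
print(load, "stalled:", stalled(load))
```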

Reuse rate across programs is the metric most CTOs forget to track. What fraction of program one is in program two? In program three? High reuse is what compounds. Low reuse is what makes the second program as expensive as the first.
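Reuse rate, as framed above, is the fraction of program one's components that show up in program two. A sketch with placeholder component names:

```python
# Placeholder component inventories for two programs.
program_one = {"ingestion", "vector-store", "eval-harness", "prompt-registry", "auth"}
program_two = {"ingestion", "vector-store", "eval-harness", "auth", "summarizer", "ui"}

def reuse_rate(earlier: set[str], later: set[str]) -> float:
    """Fraction of the earlier program's components that reappear in the later one."""
    if not earlier:
        return 0.0
    return len(earlier & later) / len(earlier)

print(f"Reuse from program one into program two: {reuse_rate(program_one, program_two):.0%}")
```

Dividing by the later program's size instead answers a different question (how much of program two was already built), so pick one definition and keep it across programs.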

Stakeholder confidence is harder to measure but easier to feel. The proxies: budget approved, scope expanding rather than contracting, sponsor asking for more rather than asking you to defend. None of these are vanity. All of them tell you whether the program has runway.

Conclusion

Partner evaluation for AI implementation is structured engineering work. The checklist is well known; the discipline of running it honestly is the differentiator.

Key Takeaways:

- Five assessments: capability, method, governance, cost, exit

- Engineering owns technical evaluation; procurement owns contract

- Exit planning protects the future

When the partner checklist is run with discipline, the benefits compound:

- Reduced risk on the multi-year commitment

- Stronger negotiating position

- Documentation that defends the decision in board review

- Knowledge transfer that protects internal team capability

Call to Action

If you are evaluating AI implementation partners, the move this month is to run the five-assessment checklist and demand the exit plan.

Learn More Here:

- Hybrid Delivery Model CTOs AI First Engineering 2026

- AI and Data Engineering Services

- AI Powered Product Engineering Teams

At Logiciel Solutions, we work with CTOs on partner evaluation, including the capability scorecard, governance review, and exit planning that protect multi-year AI investments.

Explore how to evaluate your AI implementation partner.

Frequently Asked Questions

What is an AI implementation partner?

A professional services firm that helps an enterprise design, build, and operate AI systems in production.

What are the most important checks?

A reference architecture for a customer your size, the last three incidents, cost sensitivity, an exit plan, and two non-curated reference calls.

Who should run the evaluation?

Engineering owns the technical evaluation; procurement owns the contract; both report to the CTO.

How long should the engagement be?

Twelve to eighteen months for the first engagement; longer only with a documented exit plan and a successful partnership.

What is the biggest mistake in partner evaluation?

Skipping the exit plan. A multi-year contract without exit terms is a bet your future self pays for.