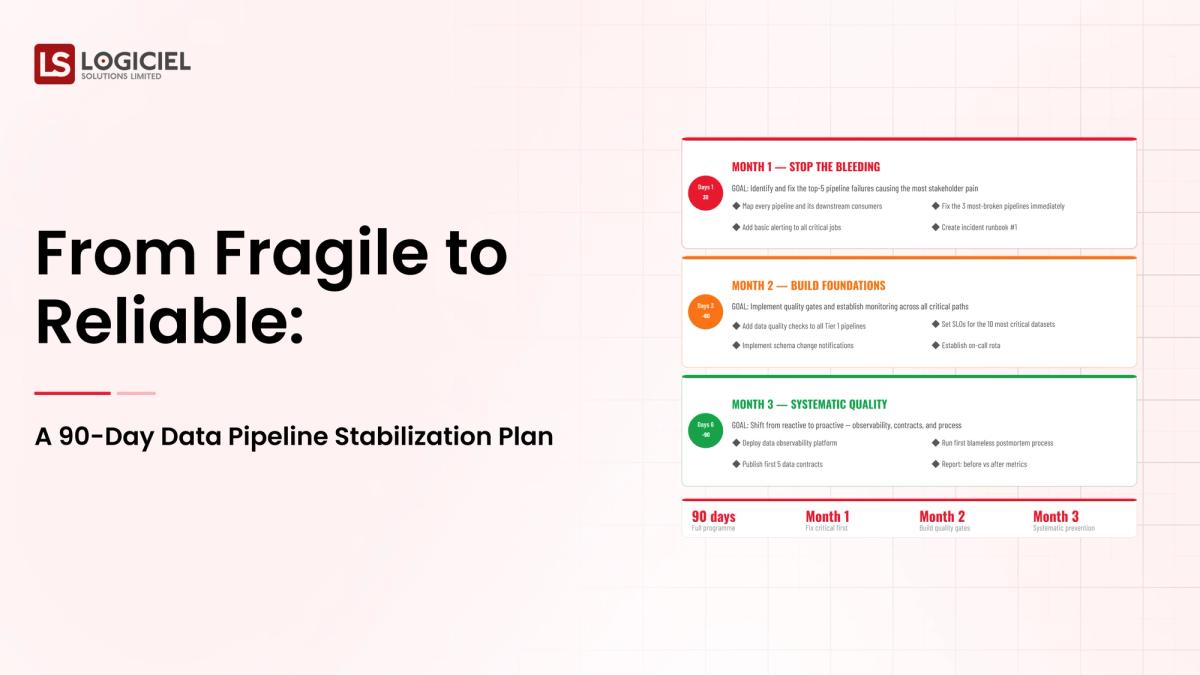

Picture a data team asked to stabilize fifty fragile pipelines in a quarter. The team is talented, the pipelines are tangled, and the timeline feels impossible. The 90-day plan exists for exactly this situation.

This is more than a delivery question. Handled poorly, data pipeline stabilization exposes a gap in engineering discipline; handled well, it becomes a multiplier.

A modern approach to data pipeline stabilization is more than tooling. It covers the engineered systems that move data from sources to consumers with discipline, plus the operating model that keeps those systems current.

However, many teams treat data pipeline stabilization as a one-off project and discover the discipline gap only when production exposes what the lab hid. Pipelines that ran clean for a year break the day a vendor pushes a schema change at 11pm Pacific. You know this from experience.

If you are a data engineering lead responsible for building or scaling a data pipeline stabilization program, this article will:

- Define what data pipeline stabilization actually means in production

- Walk through the patterns that work and the ones that look smart and quietly fail

- Lay out the operating model that turns data pipeline stabilization from a project into infrastructure

To do that, let's start with the basics.

What Is Data Pipeline Stabilization? The Basic Definition

At a high level, data pipeline stabilization is the engineered systems that move data from sources to consumers with discipline.

By comparison: most teams treat data pipeline stabilization as a tooling decision, while mature teams treat it as a system design problem, with tooling as one input among several.

Why Is Data Pipeline Stabilization Necessary?

Problems that data pipeline stabilization addresses:

- Bringing data pipeline stabilization work under engineering discipline rather than improvisation

- Surfacing failure modes before customers or auditors do

- Building the platform that compounds across future programs

How Data Pipeline Stabilization Resolves Them

- Provides explicit contracts and ownership

- Captures evidence of behavior for audit and review

- Establishes the cadence that prevents drift

Core Components of Data Pipeline Stabilization

- Foundational layer that data pipeline stabilization depends on

- Operating layer that sustains the program

- Observability across the system

- Governance and policy enforcement

- Cadence and review process

Modern Data Pipeline Stabilization Tools

- Industry-standard platforms in this category

- Open-source alternatives where appropriate

- Observability tooling tuned for this workload

- Internal abstractions over vendor APIs

- Audit and compliance tooling

Tools support the discipline; the operating practice is the differentiator.

Other Core Issues It Solves

- Reduces incident severity through earlier detection

- Provides defensible evidence for board and audit conversations

- Builds reusable patterns across the program portfolio

In Summary: Data Pipeline Stabilization is the operating discipline that turns a tooling question into a system question.


Importance of Data Pipeline Stabilization in 2026

Data Pipeline Stabilization matters more in 2026 than it did even two years ago. Four reasons explain why.

1. Stakes have risen.

Data pipeline stabilization used to be a back-office question. It is now a board-level program.

2. Operating models have not caught up.

Most enterprises still run this work as a project rather than infrastructure. The mismatch shows up in the second year.

3. Reuse compounds.

The platform built for the first program rides under every subsequent one. The first one is expensive; the fifth feels obvious.

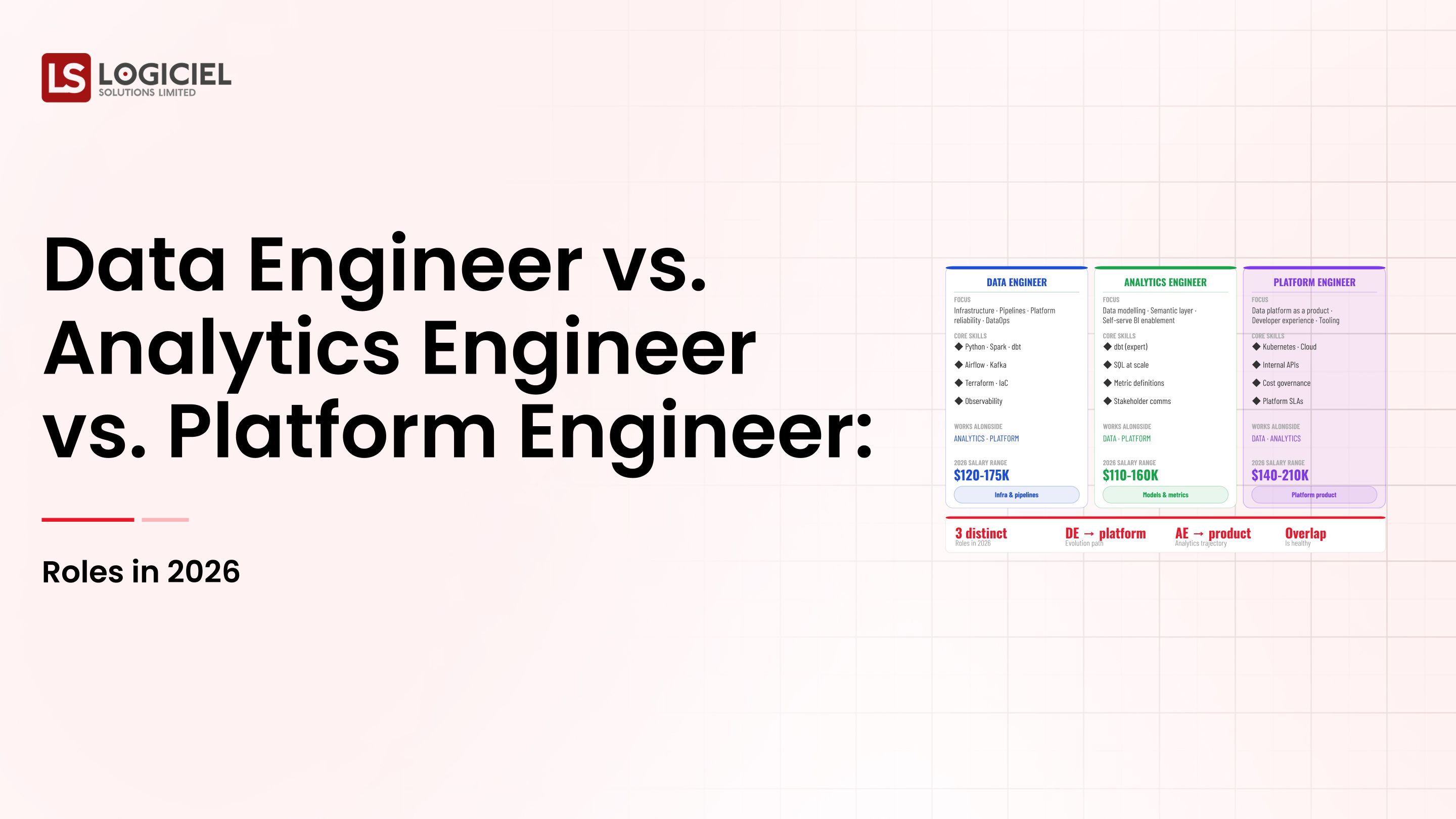

4. Talent is scarce.

Hiring through the problem rarely works. Building the operating model first lets fewer people deliver more.

Traditional vs. Modern Data Pipeline Stabilization Concepts

- Project-based data pipeline stabilization vs. platform-based data pipeline stabilization

- Implicit contracts vs. explicit contracts with testing

- Reactive incident response vs. observability-first operating model

- Annual review cadence vs. weekly or quarterly cadence

In summary: Data Pipeline Stabilization is the foundation every modern program in this space rests on.
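The "explicit contracts with testing" item in the comparison above can be sketched in a few lines. This is a minimal illustration rather than a recommended implementation: the `Column` type, the `ORDERS_CONTRACT` feed, and the `contract_violations` helper are all hypothetical names invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    """One column in a data contract (hypothetical structure for this sketch)."""
    name: str
    dtype: type
    nullable: bool = False


# Hypothetical contract for an illustrative "orders" feed.
ORDERS_CONTRACT = (
    Column("order_id", str),
    Column("amount", float),
    Column("coupon", str, nullable=True),
)


def contract_violations(row, contract):
    """Return readable violations for one row; an empty list means it conforms."""
    problems = []
    expected = {col.name for col in contract}
    for col in contract:
        if col.name not in row:
            problems.append(f"missing column: {col.name}")
            continue
        value = row[col.name]
        if value is None:
            if not col.nullable:
                problems.append(f"null in non-nullable column: {col.name}")
        elif not isinstance(value, col.dtype):
            problems.append(
                f"{col.name}: expected {col.dtype.__name__}, got {type(value).__name__}"
            )
    # Columns the producer added without updating the contract.
    for extra in sorted(set(row) - expected):
        problems.append(f"unexpected column: {extra}")
    return problems
```

Run against a sample of each incoming batch, a check like this turns a silent vendor schema change into a named failure at the boundary instead of a broken report downstream.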

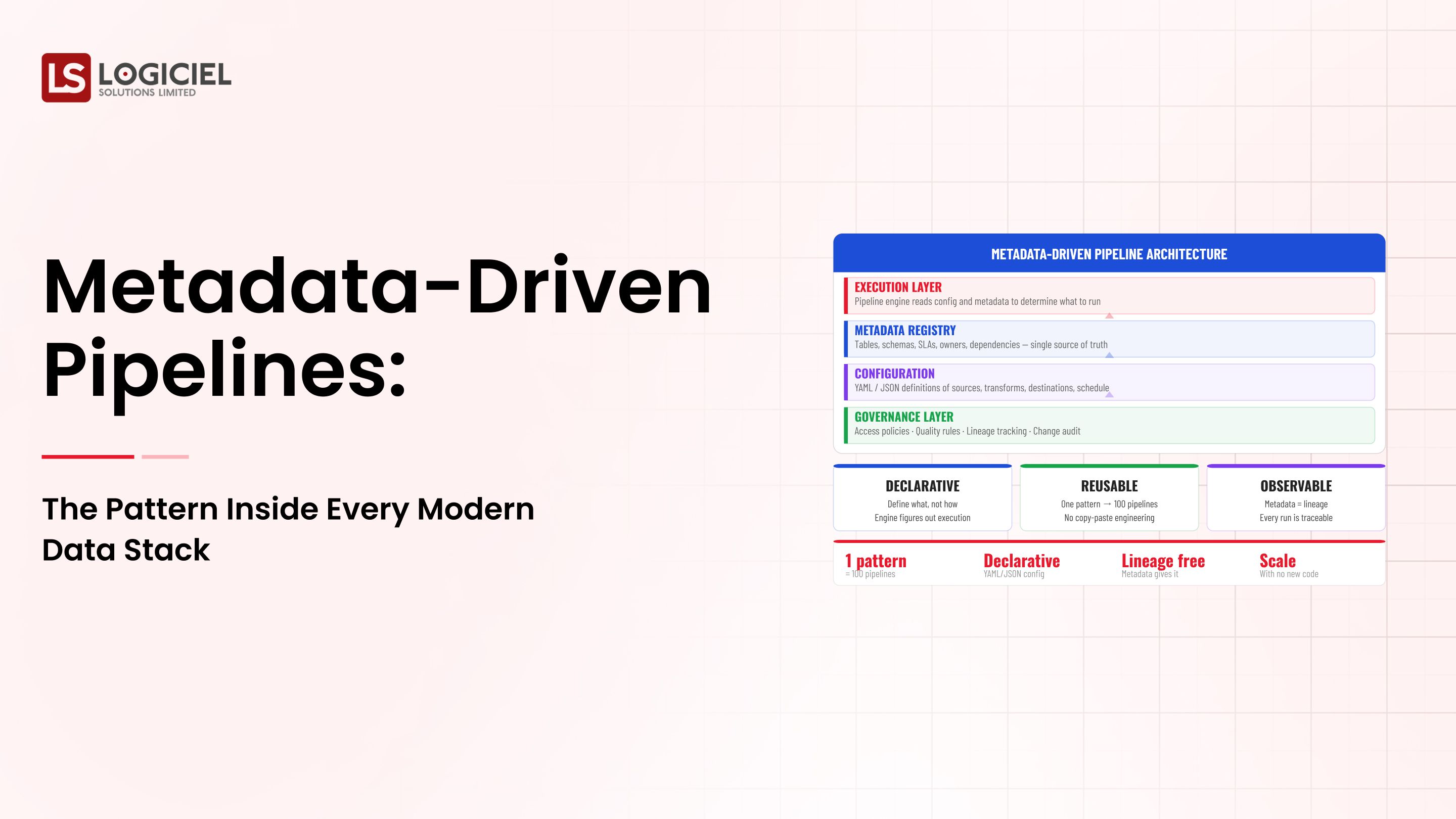

Details About the Core Components of Data Pipeline Stabilization: What Are You Designing?

Let's go through each layer.

1. Data Pipeline Stabilization Foundation Layer

What everything else rests on.

Foundation concerns:

- Architecture decisions that scale with usage

- Source-of-truth definitions

- Access patterns and contracts

2. Operating Layer

How the program is run day to day.

Operating components:

- On-call rotation and runbooks

- Cadence and review process

- Sunset criteria for capabilities not pulling weight

3. Observability Layer

Knowing what the program is doing.

Observability concerns:

- Quality and freshness signals

- Cost and unit economics

- Drift and anomaly detection
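As a rough illustration of the freshness and anomaly signals listed above, the sketch below checks how old the last successful load is and whether today's row count sits far outside recent history. The function names, the three-standard-deviation threshold, and the idea of using row counts as the drift signal are assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, pstdev


def freshness_status(last_loaded_at, max_age, now=None):
    """Report the age of the last load and whether it exceeds the allowed age."""
    if now is None:
        now = datetime.now(timezone.utc)
    age = now - last_loaded_at
    return {"age_minutes": int(age.total_seconds() // 60), "stale": age > max_age}


def row_count_is_anomalous(history, today, threshold=3.0):
    """Flag today's row count if it is more than `threshold` standard
    deviations from the mean of recent history (a simple z-score test)."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return today != mu
    return abs(today - mu) / sigma > threshold
```

A z-score on row counts is deliberately crude. It is a starting point that catches empty or doubled loads, not a substitute for column-level drift checks.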

4. Governance Layer

How standards and policy are enforced.

Governance components:

- Policy enforced at runtime, not in documents

- Evidence captured automatically

- Quarterly review of policy and controls
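One way to read "policy enforced at runtime" and "evidence captured automatically" is a wrapper that runs a policy check on every call to a pipeline step and records the outcome either way. The sketch below assumes an in-memory list as the evidence store; `enforce`, `no_raw_emails`, and `publish` are hypothetical names, and a real program would write to durable, append-only storage.

```python
import functools
import json
from datetime import datetime, timezone

# In-memory stand-in for an append-only evidence store (assumption for this sketch).
AUDIT_LOG = []


class PolicyViolation(Exception):
    """Raised when a batch fails a policy check at runtime."""


def enforce(policy_name, check):
    """Wrap a pipeline step so the policy runs, and is recorded, on every call."""
    def decorator(step):
        @functools.wraps(step)
        def wrapper(batch):
            allowed = bool(check(batch))
            # Evidence is written whether the check passes or fails.
            AUDIT_LOG.append(json.dumps({
                "policy": policy_name,
                "step": step.__name__,
                "rows": len(batch),
                "allowed": allowed,
                "checked_at": datetime.now(timezone.utc).isoformat(),
            }))
            if not allowed:
                raise PolicyViolation(f"{policy_name} blocked {step.__name__}")
            return step(batch)
        return wrapper
    return decorator


def no_raw_emails(batch):
    """Hypothetical policy: no row may carry an 'email' field."""
    return all("email" not in row for row in batch)


@enforce("no-raw-pii", no_raw_emails)
def publish(batch):
    """Hypothetical publishing step; returns the number of rows published."""
    return len(batch)
```

Because the record is written before the step runs, the audit trail shows blocked attempts as well as successful ones, which is usually what a reviewer asks for first.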

5. Operating Cadence Layer

What keeps the program from eroding.

Cadence components:

- Weekly or monthly review on the dashboard

- Quarterly architecture review

- Incident-driven updates

Benefits Gained from Operating Discipline and Observability

- Predictable delivery without rework

- Faster recovery when things break

- Reusable platform layer for the next program

How It All Works Together

The foundation layer holds the system up. The operating layer runs it day to day. Observability surfaces what's happening. Governance keeps policy in force. Operating cadence keeps the layers current. Together, the layers turn data pipeline stabilization from a question into a working program.

Common Misconception

Misconception: Data Pipeline Stabilization is just a tooling decision.

Reality: Data Pipeline Stabilization is a system and operating decision. Tooling is one input among several. The discipline is the difference.

Key Takeaway: Each layer addresses a different class of risk. Programs that under-invest in any layer have predictable gaps.

Real-World Data Pipeline Stabilization in Action

Let's take a look at how data pipeline stabilization operates with a real-world example.

We worked with a team running data pipeline stabilization for a multi-business-unit enterprise, with these constraints:

- Mixed workloads across multiple teams

- Strict audit and compliance requirements

- Cost shape sensitive to usage growth

Step 1: Inventory the Current State

Where the program is today, what works, what doesn't.

- Per-component assessment

- Gap analysis

- Documented current state
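The inventory step can start as something very small. The sketch below is not the method used in the engagement described here. It is a hypothetical per-pipeline record with four illustrative checks (owner, contract, alerting, runbook) and a gap report sorted so the least-covered pipelines surface first.

```python
from dataclasses import dataclass

# Illustrative checks only; a real inventory would use the team's own criteria.
CHECKS = ("owner", "contract", "alerting", "runbook")


@dataclass
class PipelineRecord:
    """Hypothetical per-pipeline assessment record."""
    name: str
    owner: str = ""
    contract: bool = False
    alerting: bool = False
    runbook: bool = False


def gaps(record):
    """List the checks this pipeline does not yet satisfy."""
    return [check for check in CHECKS if not getattr(record, check)]


def gap_report(records):
    """Return (name, gaps) pairs, most gaps first, ties broken by name."""
    rows = [(record.name, gaps(record)) for record in records]
    return sorted(rows, key=lambda row: (-len(row[1]), row[0]))
```

With fifty pipelines, a table like this is often enough to decide which ten to stabilize first.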

Step 2: Pick the Architecture

Match the architecture to the workload mix and operating model.

- Documented choice with tradeoffs

- Reusable pattern definitions

- Migration path documented

Step 3: Build the Foundation

Foundation layer first, operating layer second, observability and governance alongside.

- Foundation in place

- Operating model documented

- Observability instrumented

Step 4: Pilot, Iterate, Scale

Ship to a controlled population; absorb learning; scale.

- Pilot with named users

- Daily review of outcomes

- Scale after first-month learning

Step 5: Operate the Cadence

Weekly or monthly review on the dashboard; quarterly architecture review.

- Weekly cost and quality review

- Quarterly architecture review

- Named owner for the program

Where It Works Well

- Foundation layer designed for reuse across programs

- Operating model documented before launch

- Cadence sustained quarter after quarter

Where It Does Not Work Well

- Vendor-led decisions without architecture review

- Operating model invented during the first incident

- Annual review when systems change quarterly

Key Takeaway: The team that builds data pipeline stabilization as infrastructure ships faster and recovers quicker than the team that builds it as a project.

Common Pitfalls

i) Treating Data Pipeline Stabilization as a tooling decision

The tooling matters less than the operating model. Pick the tool after the design.

- Design before tooling

- Document tradeoffs

- Plan for change over time

ii) Skipping the operating model

Operating models invented during the first incident are operating models invented too late.

iii) No cadence

Without weekly or quarterly cadence, the program drifts. Schedule the review; protect the time.

iv) Hiring through the problem

Adding headcount to an unclear program slows it down. Diagnose first; hire second.

Takeaway from these lessons: Most failures are operating-model gaps, not technology gaps. The cadence is the work.

Data Pipeline Stabilization Best Practices: What High-Performing Teams Do Differently

1. Design the foundation before the tools

Architecture and operating model first. Tools second.

2. Document the operating model

On-call rotation, runbooks, postmortems, sunset criteria. Built in, not bolted on.

3. Build streaming observability

Quality, cost, and freshness signals. Continuous, not periodic.

4. Run quarterly cadence

Architecture review, cost review, operating-model review. Without cadence, the program erodes.

5. Treat data pipeline stabilization as a platform

Each new use case rides on the platform built for the first one. Reuse compounds.

Logiciel's value add is partnering with engineering and data leaders on data pipeline stabilization programs, including the foundation, operating model, and cadence work that turns a one-off project into a multiplier.

Takeaway for High-Performing Teams: High-performing teams treat data pipeline stabilization as infrastructure with quarterly cadence. The discipline is the difference.

Signals You Are Designing Data Pipeline Stabilization Correctly

How do you know this is working? Not in a board deck. In the daily evidence the team produces. The signals below are the ones that separate programs on the path from programs that just look like progress.

The team can name failure modes without flinching. People who actually run these systems will tell you the last three things that broke. People who only read about them won't.

Cost is observable. Today, the team can tell you how much they spent yesterday and what drove the change. Not at the end of the quarter. Today.
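To make the cost signal concrete, here is a minimal sketch that answers the two questions in the paragraph above: what was spent yesterday, and which pipeline drove the change. It assumes per-pipeline daily cost is already available as a plain mapping; the `cost_change` name and the input shape are hypothetical.

```python
def cost_change(yesterday, day_before):
    """Compare two days of per-pipeline cost.

    Both arguments map pipeline name to cost for that day (assumed input shape).
    """
    pipelines = sorted(set(yesterday) | set(day_before))
    deltas = {
        name: yesterday.get(name, 0.0) - day_before.get(name, 0.0)
        for name in pipelines
    }
    # The driver is the pipeline with the largest absolute change.
    top_driver = max(pipelines, key=lambda name: abs(deltas[name])) if pipelines else None
    return {
        "spent_yesterday": sum(yesterday.values()),
        "change": sum(deltas.values()),
        "top_driver": top_driver,
        "deltas": deltas,
    }
```

For example, `cost_change({"ingest": 41.0, "transform": 55.0, "export": 4.0}, {"ingest": 40.0, "transform": 25.0})` reports `transform` as the top driver of the day-over-day change.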

Change is boring. Deploys are routine, rollbacks are routine, model swaps are routine. Heroic deploys are a sign of an immature system, not a heroic team.

Data quality checks run daily, not quarterly. There's a live dashboard with numbers, not a slide with vibes.

Vendor lock-in is a number. The team can tell you the rip-and-replace cost in dollars and weeks. They've done the math. They haven't pretended the question doesn't exist.

Adjacent Capabilities and Connected Work

This work doesn't sit alone. It depends on, and pushes back into, several other capabilities your team is probably already running. Most teams notice this only when one of the adjacent surfaces breaks and the program inherits the cleanup.

The usual neighbors are the data platform, the observability stack, and whatever security review process gets dragged into anything new. Then there's the team-shape question: platform engineering, applied ML, and SRE all share capacity here, and so does whatever AI initiative is next on the roadmap. Worth naming these upfront so leadership sees a portfolio, not a one-off.

The mistake I keep watching teams make is treating the neighbors as someone else's problem. They aren't. The integration with the data platform is yours. So is the security review of the runtime, and so is the on-call rotation that covers what you ship. The work shows up either way, just later and more expensive if you ducked it. Better to own those handoffs and pay the timeline cost upfront.

Stakeholder Considerations and Communication

Different rooms ask different questions, and the answers don't translate well between them.

The board wants to know about risk, ROI, and whether this puts you ahead of competitors. Your CFO wants unit economics and a forecast that holds up under sensitivity. The CISO wants the threat model and a defensible audit posture. Engineering wants to know what's in scope, what's bought, and what they're going to be on call for. The line of business wants a date the value lands on, and a description of what users will see.

Programs that prepare for these audiences move faster, full stop. A one-page brief per stakeholder, updated quarterly, costs almost nothing to produce. Not having those briefs is what turns a quarterly review into the meeting where sponsor confidence quietly leaks out.

Communication cadence also matters more than people think. Weekly during active delivery. Monthly during steady-state. Always after an incident or a meaningful change. Programs that go quiet between milestones end up surprising leadership in ways that are not flattering. Pick a cadence at kickoff and protect it.

Metrics That Tell You Data Pipeline Stabilization Is Working

Beyond the success signals above, these are the leading indicators worth watching week over week. They're not vanity numbers. They distinguish programs that are compounding from programs that are running in place.

Time from idea to production. How long does it take a new use case to get from concept to something a customer actually sees? Programs that are working see this number drop quarter over quarter. Programs that aren't see it grow.

Cost per unit of value. Are you spending less per unit of output each quarter, or more? This is the cleanest leading indicator that the platform layer is amortizing.

Incident severity over time. Severity drops as the operating model matures. Flat or rising severity says the operating model has gaps you haven't named yet.

Reuse rate across programs. What fraction of what you built for program one shows up in program two and program three? High reuse means the first investment is paying back. Low reuse means you're rebuilding.

Sponsor confidence trend. Hard to measure directly. Easier to read in approved budget, in strategic emphasis, and in whether your sponsor is asking for more or asking you to slow down.
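Two of the metrics above reduce to simple arithmetic, sketched here under stated assumptions: reuse rate is taken as the fraction of components built for the first program that also appear in a later one, and cost per unit is quarterly spend divided by whatever unit of output the team has chosen. The function names and input shapes are hypothetical.

```python
def reuse_rate(first_program, later_program):
    """Fraction of the first program's components that reappear in a later program."""
    built = set(first_program)
    if not built:
        return 0.0
    return len(built & set(later_program)) / len(built)


def cost_per_unit(quarters):
    """Per-unit cost for each (spend, units) pair; assumes units > 0."""
    return [spend / units for spend, units in quarters]


def is_amortizing(quarters):
    """True when per-unit cost falls every quarter (needs at least two quarters)."""
    costs = cost_per_unit(quarters)
    return len(costs) > 1 and all(
        later < earlier for earlier, later in zip(costs, costs[1:])
    )
```

The hard part is not the arithmetic but agreeing on what counts as a component and as a unit of output. Settle those definitions once and keep them stable so the trend means something.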

Conclusion

Data Pipeline Stabilization is the discipline that separates programs that compound from programs that run in place. The layers are well known; the operating model is the work; the cadence is the multiplier.

Key Takeaways:

- Data Pipeline Stabilization is system design plus operating discipline, not a tooling decision

- Foundation, operating, observability, governance, and cadence are co-equal layers

- Cadence prevents drift; reuse compounds across programs

When data pipeline stabilization is built and operated correctly, the benefits compound:

- Predictable delivery and recovery

- Defensible audit and board posture

- Reusable platform that compounds across programs

- Stronger team morale and sponsor confidence over time


Call to Action

If your data pipeline stabilization program is feeling fragile, the move this quarter is to inventory the layers you have, build the ones that are missing, and operate the cadence.

Learn More Here:

- Data Pipeline Observability: Why Your Pipelines Break and You're the Last to Know

- CI/CD Pipeline Architecture: Fast, Reliable Pipelines

- Data Pipeline

At Logiciel Solutions, we work with engineering and data leaders on data pipeline stabilization programs that turn one-off projects into platform investments.

Explore how to modernize your data pipeline stabilization program.

Frequently Asked Questions

What is data pipeline stabilization?

The engineered systems that move data from sources to consumers, run as an ongoing discipline rather than a one-off project.

When does this matter most?

When the workload, scale, or audit requirements push past what improvisation can handle.

Who should own the program?

An engineering leader paired with the line of business. Joint ownership prevents the program from stalling at the first hard tradeoff.

How long does it take to build out?

Eight to sixteen weeks for a first useful version with disciplined scope. Programs that take longer have almost always gone wrong at the framing stage.

What is the biggest mistake in data pipeline stabilization?

Treating it as a one-off project rather than a platform investment. The first program builds the platform; the platform compounds.